Your beautiful resume is being read by a robot, and the robot can't see it

An investigation into ATS systems, Canva's hidden ghost text, the AI detection arms race, and the unglamorous strategies that land interviews

The ghost in the resume

A staffing professional recently shared a story that should unsettle anyone who has ever used a design template for their resume. She was reviewing applicants for a healthcare position when she noticed something odd. The candidate’s resume, built in Canva, looked perfectly professional on screen: clean layout, proper headings, healthcare experience listed in detail. But when the staffing agency’s hiring software tried to read the document, it pulled in something else entirely.

Instead of “registered nurse in Portland,” the system read “circus assistant in Los Angeles.” I mean, come on.

The candidate had been applying for jobs for eighteen months. Eighteen months of silence, zero callbacks, growing despair. The explanation had nothing to do with her qualifications, her cover letter, or the job market.

It was ghost text.

Ghost text is placeholder data left behind inside Canva templates. When a template designer builds a resume layout, they fill it with fake information: a sample name, a sample job title, a sample location. When you replace that text with your own, Canva’s visual editor shows your changes perfectly.

You see your name. You see your job history. Everything looks right. But in the file’s metadata and hidden text layers, the original placeholder content sometimes persists. The software that employers use to sort applications reads those hidden layers. Your resume says one thing to your eyes and something completely different to the machine.

The problem here isn’t about aesthetic preferences or whether “creative” resumes are better than plain ones. A qualified healthcare professional was invisible to employers for a year and a half because of a technical failure in the tool she trusted.

She absolutely had no way of knowing.

A medical education professor found the same thing in a classroom. Students were submitting papers built from Canva templates, and when those papers ran through plagiarism and AI detection software, the tools flagged lorem ipsum and placeholder text that didn’t appear anywhere in the visible document. The ghost text wasn’t just a resume problem.

It was baked into how Canva’s template system handled text layers.

The staffing professional’s story ended with a simple experiment. She helped the candidate rebuild her resume in Microsoft Word, using a plain single-column format. Within two weeks and eight applications, the candidate had a job offer.

That difference between “two weeks” and “eighteen months” is the distance between understanding how hiring software works and not understanding it.

And most job seekers, through no fault of their own, are on the wrong side of that gap.

What an ATS does with your resume

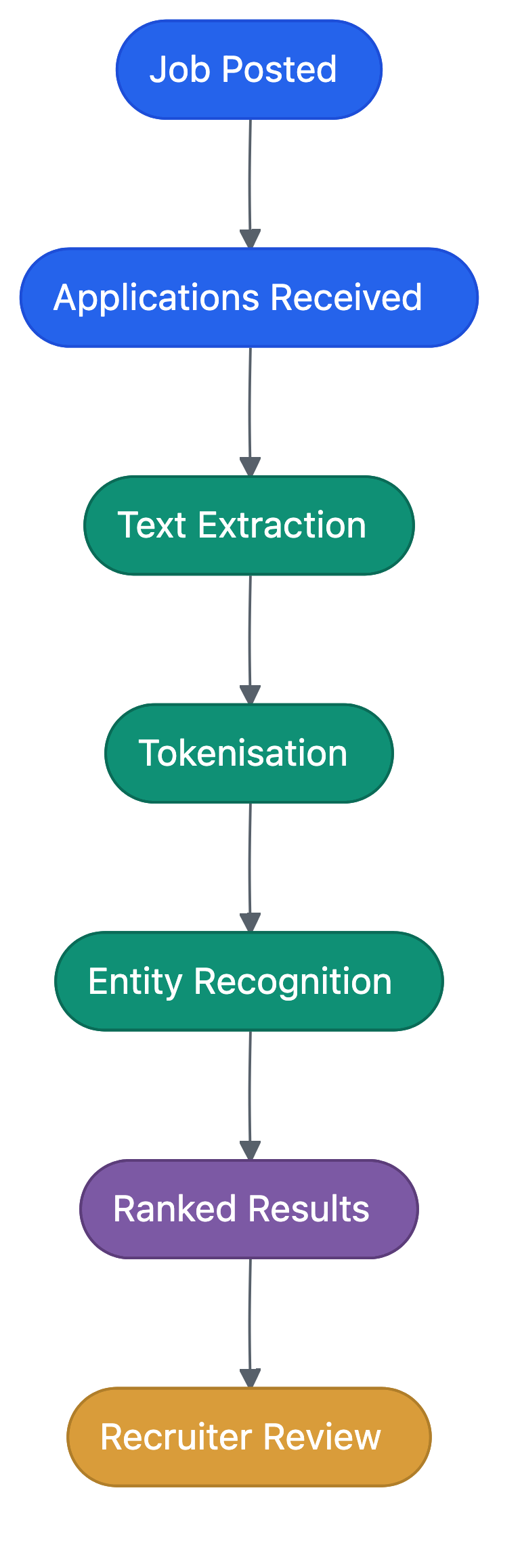

An Applicant Tracking System, or ATS, sits between you and almost every job you apply for. If you’ve applied online, your resume has almost certainly been processed by one.

An ATS is a database. When a company posts a job opening, every application that comes in through their careers page or job board gets funnelled into this system. The ATS stores your resume, your contact details, your cover letter, and any answers you provided on the application form. It organises all of this so recruiters can search, filter, and sort through what might be hundreds or thousands of applicants for a single role.

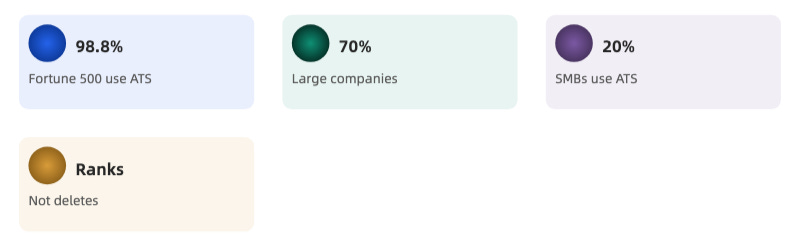

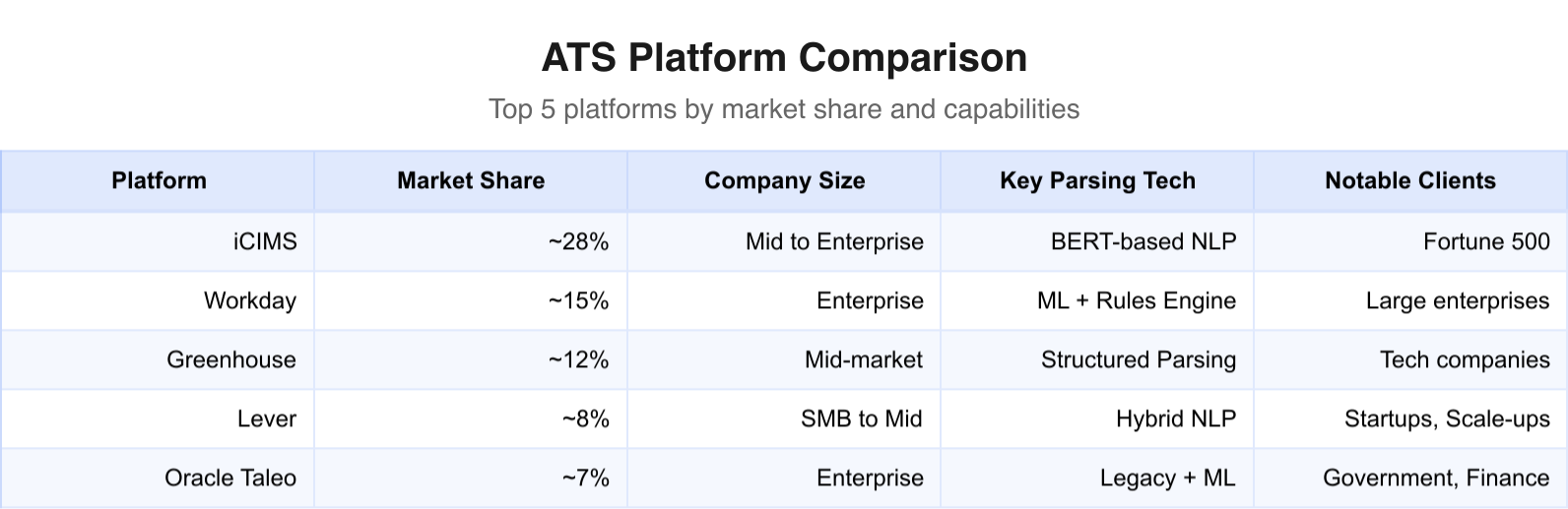

According to industry data, 98% of Fortune 500 companies use an ATS. About 70% of large companies and roughly 20% of small-to-medium businesses use one too. The market leaders include iCIMS (one of the largest providers by market share), Workday, Greenhouse, Lever, and Oracle’s Taleo.

If you’re applying to any company with more than about fifty employees, there’s a good chance an ATS is involved.

How parsing works

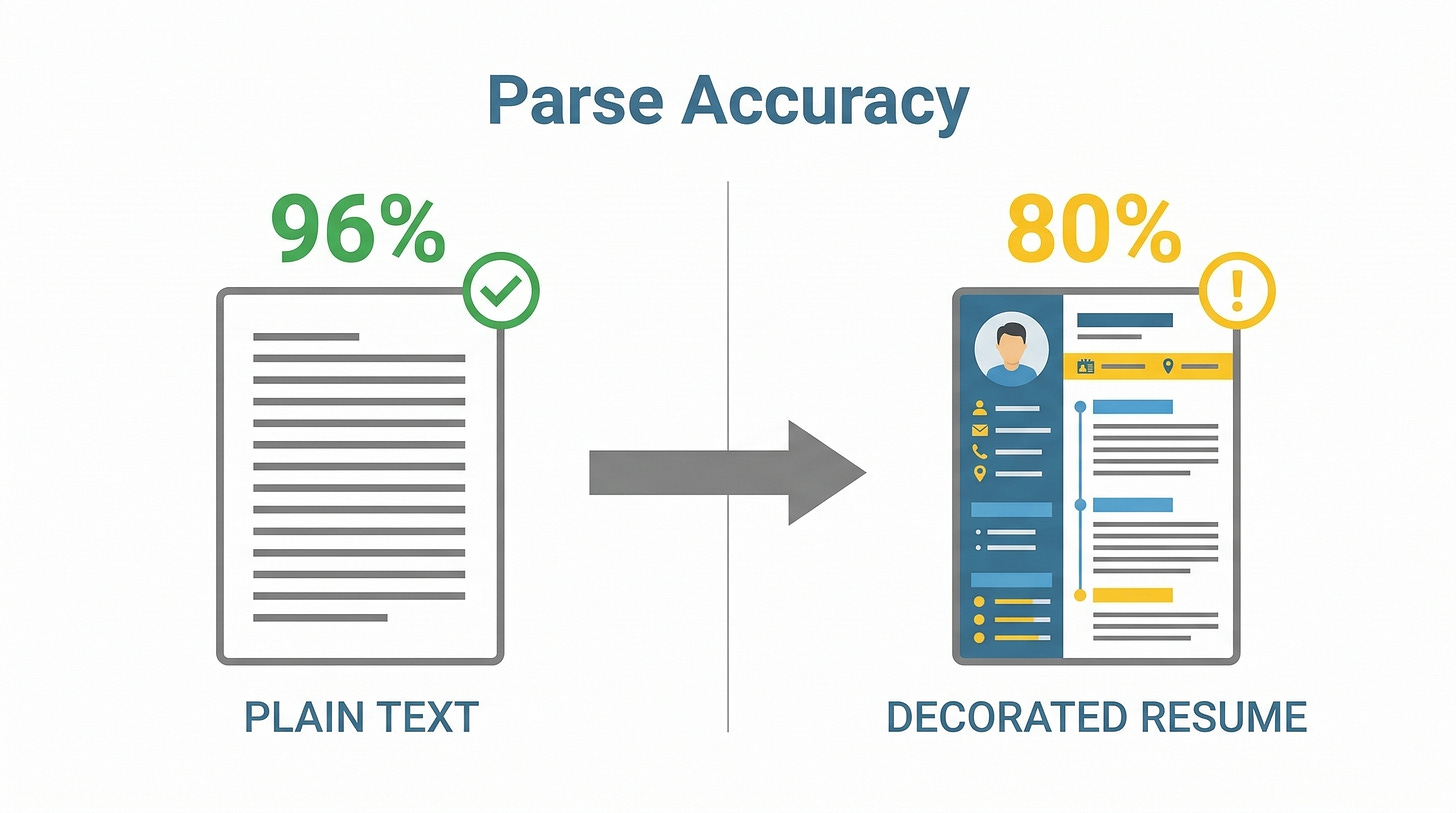

When your resume arrives, the ATS needs to convert it from a document (a PDF, a Word file, whatever you uploaded) into structured data it can store and search. This process is called parsing, and it happens in three stages.

Text extraction comes first. The system pulls all the readable text out of your document. This is where format problems start: if your text is stored as an image, or as vector shapes rather than actual characters, the parser can’t extract anything. It’s like trying to copy text from a photograph.

Tokenisation and segmentation come second. The parser breaks your text into meaningful chunks and tries to figure out which parts are your name, which are job titles, which are skills, and which are work history. It looks for patterns: dates near company names probably indicate employment history; a cluster of technical terms probably indicates a skills section.

Entity recognition is the final stage. Modern ATS platforms use natural language processing, including BERT-based transformer models (part of the same transformer family that underlies tools like ChatGPT, but adapted for a much narrower task), to identify and categorise the entities in your resume. “Python” gets tagged as a programming skill. “University of Auckland” gets tagged as an educational institution. “2019-2023” gets tagged as a date range.
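
To make the three stages concrete, here is a deliberately tiny sketch in Python. It is not how any real ATS works: the section headings, the `SKILL_TERMS` vocabulary, and the regular expression are all assumptions chosen for illustration, and simple pattern matching stands in for the NLP models a commercial parser would use.

```python
import re

# Hypothetical skills vocabulary; a real system would use a far larger taxonomy.
SKILL_TERMS = {"python", "sql", "excel"}

# Matches ranges such as "2019-2023" or "2021 - present".
DATE_RANGE = re.compile(
    r"\b(?:19|20)\d{2}\s*[-–]\s*(?:(?:19|20)\d{2}|present)\b", re.IGNORECASE
)

def extract_text(raw):
    """Stage 1 stand-in: keep non-empty lines and trim whitespace."""
    return "\n".join(line.strip() for line in raw.splitlines() if line.strip())

def segment(text):
    """Stage 2: file each line under the most recent recognised heading."""
    headings = {"work experience", "education", "skills"}
    sections = {"header": []}
    current = "header"
    for line in text.splitlines():
        key = line.lower().rstrip(":")
        if key in headings:
            current = key
            sections[current] = []
        else:
            sections[current].append(line)
    return sections

def tag_entities(sections):
    """Stage 3: tag date ranges anywhere, and known skills in the skills section."""
    entities = {"date_ranges": [], "skills": []}
    for lines in sections.values():
        for line in lines:
            entities["date_ranges"] += [m.group(0) for m in DATE_RANGE.finditer(line)]
    for line in sections.get("skills", []):
        for word in re.findall(r"[A-Za-z+#]+", line):
            if word.lower() in SKILL_TERMS:
                entities["skills"].append(word)
    return entities

resume = """
Sarah Johnson
Work Experience
Data Analyst, Example Corp, 2019-2023
Education
University of Auckland
Skills
Python, SQL
"""

sections = segment(extract_text(resume))
entities = tag_entities(sections)
print(sections)
print(entities)
```

Notice how much depends on stage 2 recognising the headings. Rename “Skills” to something creative and, in this toy version at least, the skills simply never get tagged.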

The organiser, not the gatekeeper

Most career advice gets this wrong. An ATS is an organising tool. It’s not an autonomous gatekeeper that deletes your resume before anyone sees it.

Think of it like a library catalogue. The catalogue helps the librarian find books about a specific topic, published in a specific year, by a specific author. The catalogue doesn’t throw books away. It doesn’t decide which books are good. It organises, it sorts, and it surfaces results based on what the librarian asks for. The librarian (in this case, the recruiter) makes the decisions.

When a recruiter opens their ATS, they typically search for candidates matching certain criteria: specific skills, a certain number of years of experience, maybe a particular location. The ATS returns results ranked by how well each candidate matches. Recruiters then review those results and decide who to contact.

The ATS ranks and sorts. A human makes the call. But if the ATS can’t read your resume properly, if it can’t extract your skills or identify your experience, you won’t show up in the recruiter’s search results. You won’t be actively rejected. You’ll be invisible. That’s worse.

The zombie statistic: where the 75% myth came from

You’ve almost certainly seen this claim: “75% of resumes are rejected by ATS before a human ever sees them.” It appears in career coaching advertisements, in resume service sales pages, in news articles, and across social media.

It sounds alarming. It feels true. And it has no factual basis whatsoever.

The origin is a 2012 sales pitch by a company called Preptel. Preptel sold resume optimisation services, and the 75% figure was part of their marketing copy. No published methodology, no disclosed sample size, no peer review. Preptel shut down in August 2013, less than a year after the statistic began circulating. The company disappeared. The number didn’t.

Following the citation chain

Career researcher Christine Assaf of HRTact.com traced the citation chain and found a pattern that should embarrass every outlet that repeated it. TopResume, a paid resume-writing service, cited Forbes. Forbes cited no one. CIO.com misattributed the figure to Bersin, the Deloitte-owned research firm. Bersin never published any such finding. The statistic had no source, but each new citation made it look more credible because it now appeared alongside recognisable brand names.

Good grief.

This is how misinformation about hiring becomes gospel. A company invents a number to sell a service. The company dies. The number keeps bouncing between outlets, picking up authority with each bounce, until it’s so embedded in the hiring conversation that questioning it feels contrarian.

Where the myth lives now

The 75% figure propagates through three main channels: 68% of citations appear on social media, where it’s shared as received wisdom; 20% come from career coaches and resume-writing services, who have a direct financial interest in making ATS sound as threatening as possible; and 12% come from media outlets that repeat it without verification.

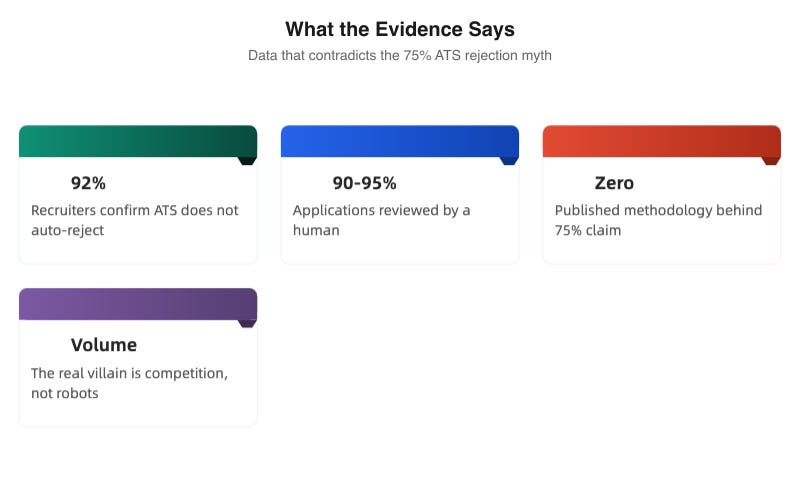

What the evidence says

The most direct test of the 75% claim comes from an Enhancv study that tested resume templates across multiple ATS platforms and surveyed recruiters, finding that the vast majority confirmed their ATS doesn’t automatically reject applications. The system ranks, filters, and organises. It doesn’t delete.

Recruiter Jan Tegze has publicly estimated that 90-95% of applications are reviewed by a human at some point in the process. Amy Miller, a former recruiter at Amazon, Google, and Microsoft, has called the idea of ATS as a “mythical AI-infused tool” that auto-rejects candidates “crazy.”

So why do so many people apply for jobs and hear nothing back? The explanation is simpler than a robot conspiracy. The job market is brutally competitive.

Employers are drowning in applications. Your resume may well have been seen by a human, but that human had 256 other resumes to review the same day and yours didn’t make the shortlist.

The villain is volume, not robots. But “volume competition” doesn’t sell resume-writing services. “A robot is deleting your resume” does.

How Canva breaks your resume

Let’s go back to Canva.

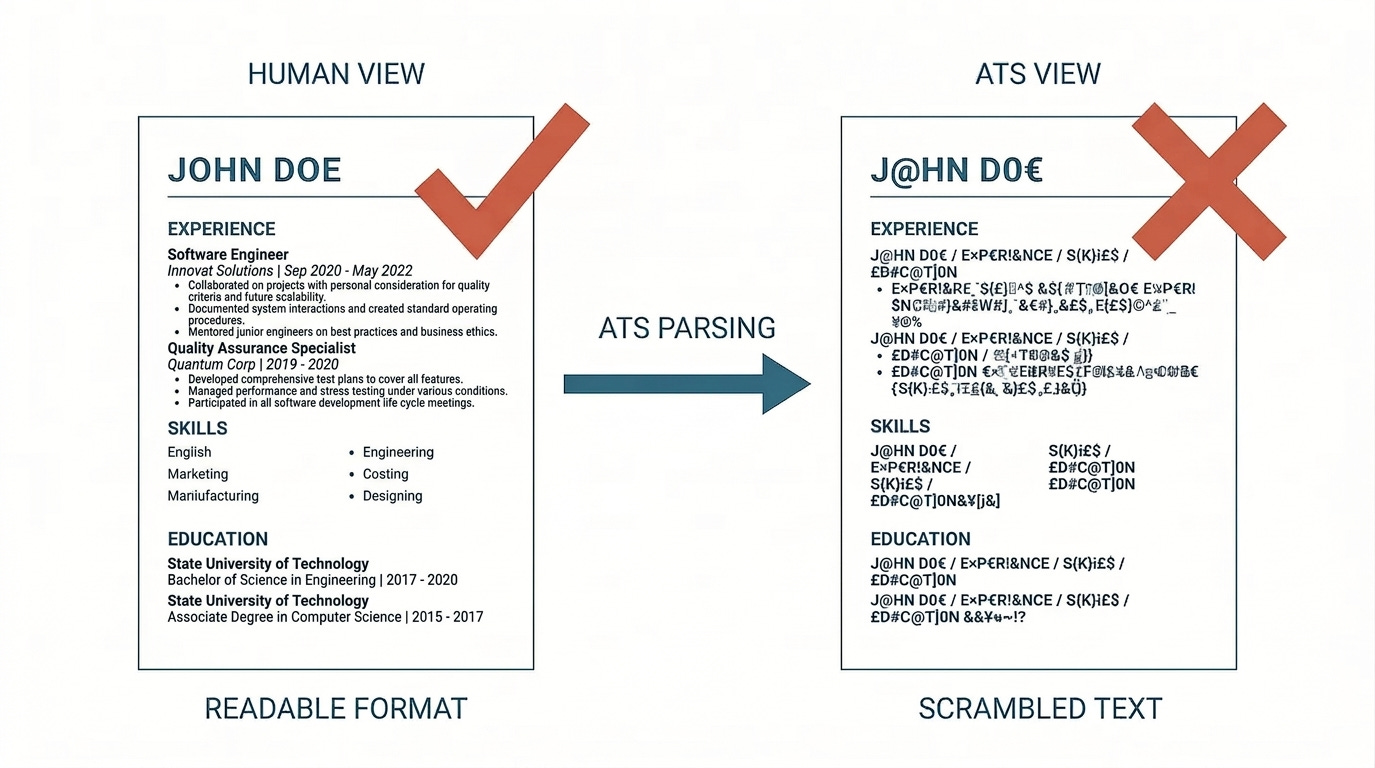

Canva resumes fail in ATS systems because of how Canva constructs documents at the file level. The visual output looks professional. The underlying file structure is a minefield. Four distinct failure modes exist, and most Canva templates trigger at least two of them.

Text stored as vector paths

When you type “Sarah Johnson” into a Canva text box, Canva may render that text as a series of vector shapes rather than searchable character data. To a human eye, the PDF looks identical either way. To an ATS parser, vector paths are just shapes: tiny drawings of letters. The system can’t read them, can’t search them, can’t extract them. Your name, your job titles, your skills, your entire professional history might as well be an abstract painting.

Fragmented text layers

Even when Canva does store text as text, it often splits a single sentence across multiple separate objects in the file. “Managed a team of 12 engineers” might be stored as three disconnected fragments: “Managed a team,” “of 12,” and “engineers.” The ATS tries to read the document in order, but “order” in a multi-layered design file isn’t the same as “order” on a printed page. The parser might reassemble your sentence as “of 12 Managed a team engineers.”
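
A small Python sketch shows why storage order matters. The `Fragment` class and the coordinates are hypothetical, invented for illustration; real PDF text objects are more complicated, and no claim is made here about how any particular ATS orders them.

```python
from dataclasses import dataclass

@dataclass
class Fragment:
    text: str
    x: float  # horizontal position on the page (hypothetical units)
    y: float  # vertical position; larger means further down the page

# The order fragments are stored in the file need not match the visual order.
stored = [
    Fragment("of 12", x=120, y=300),
    Fragment("Managed a team", x=20, y=300),
    Fragment("engineers", x=165, y=300),
]

def naive_read(fragments):
    """Read fragments in storage order, as a simple extractor might."""
    return " ".join(f.text for f in fragments)

def position_aware_read(fragments):
    """Sort top-to-bottom, then left-to-right, before joining."""
    ordered = sorted(fragments, key=lambda f: (f.y, f.x))
    return " ".join(f.text for f in ordered)

print(naive_read(stored))           # of 12 Managed a team engineers
print(position_aware_read(stored))  # Managed a team of 12 engineers
```

Sorting by position rescues this single line, but it is only a heuristic: in a multi-column layout, a top-to-bottom sort can interleave the left and right columns instead.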

Custom font encoding

Canva’s library of decorative fonts doesn’t always map to standard character encodings. When the ATS extracts text from a PDF that uses a non-standard font, the characters can come through garbled. Your “B.Sc. in Computer Science” might parse as a string of unrecognisable symbols.

Image-based PDF export

Some Canva export settings produce PDFs where the entire page is rendered as a flat image. There’s no text layer at all. To an ATS, this document is blank. It’s a picture of a resume, not a resume. You might as well have mailed them a photograph.

The numbers

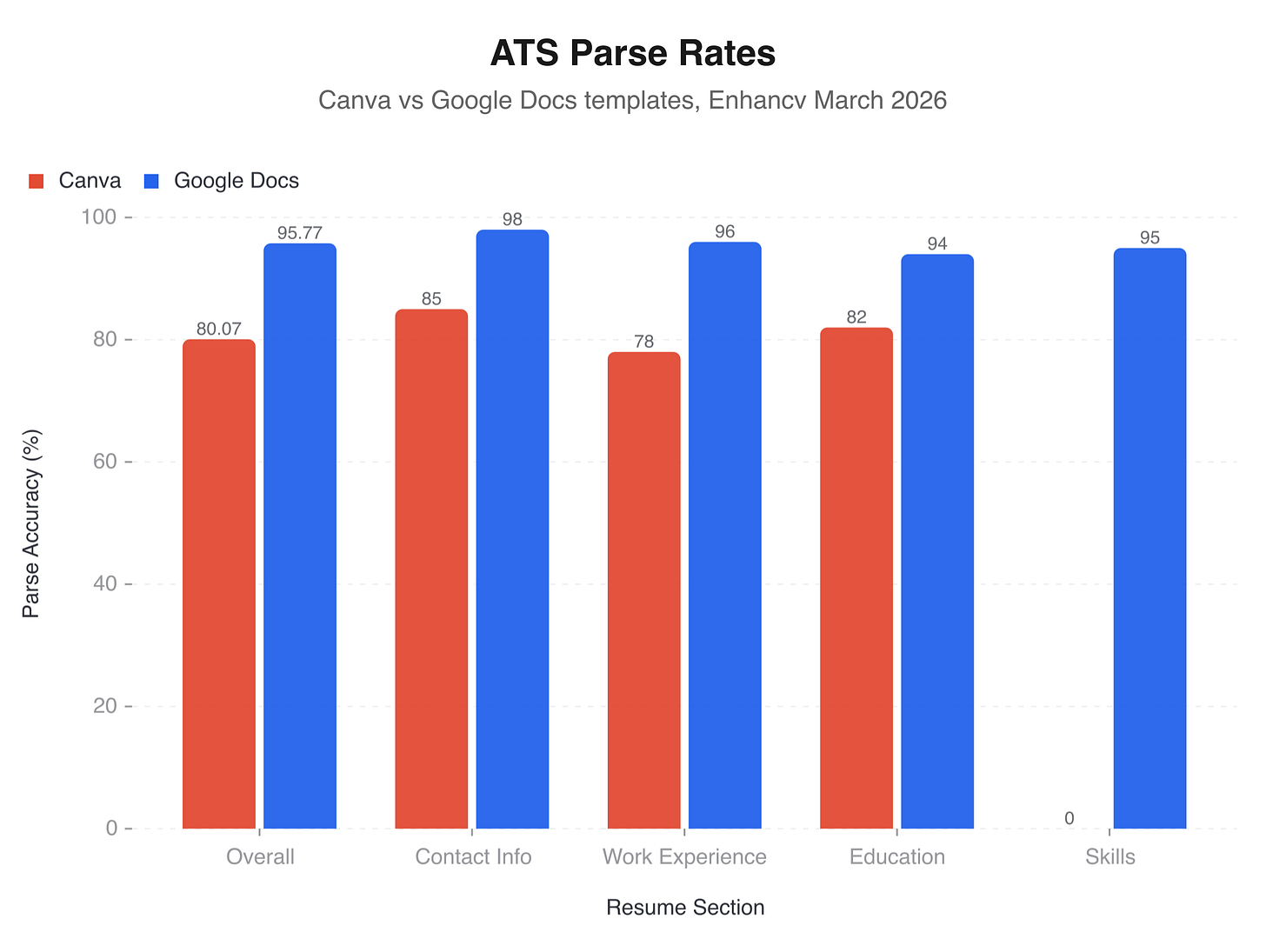

Enhancv tested Canva templates against Google Docs templates (a study originally conducted in late 2023 and updated through 2026), measuring how accurately each format parsed through common ATS systems. Canva averaged an 80.07% parse accuracy rate. Google Docs averaged 95.77%. That gap of nearly sixteen percentage points (15.7, to be exact) represents a substantial chunk of your professional history that the ATS either misreads or ignores entirely.

The most striking finding: skills sections in Canva templates had a 0% parse rate. Zero. The section of your resume that recruiters use most often to filter candidates was completely invisible.

Honestly, that’s staggering.

Out of 50 popular Canva resume templates tested, 72% failed ATS parsing in ways that would materially affect a candidate’s visibility.

The ghost text problem, revisited

Beyond parsing failures, the ghost text problem appears across Canva templates broadly. The original placeholder content (fake names, fake job titles, fake locations from the template designer) can persist in hidden text layers even after you’ve replaced all the visible content. Some ATS systems read these hidden layers. When they do, your resume becomes a palimpsest: your real experience buried under someone else’s fictional career.

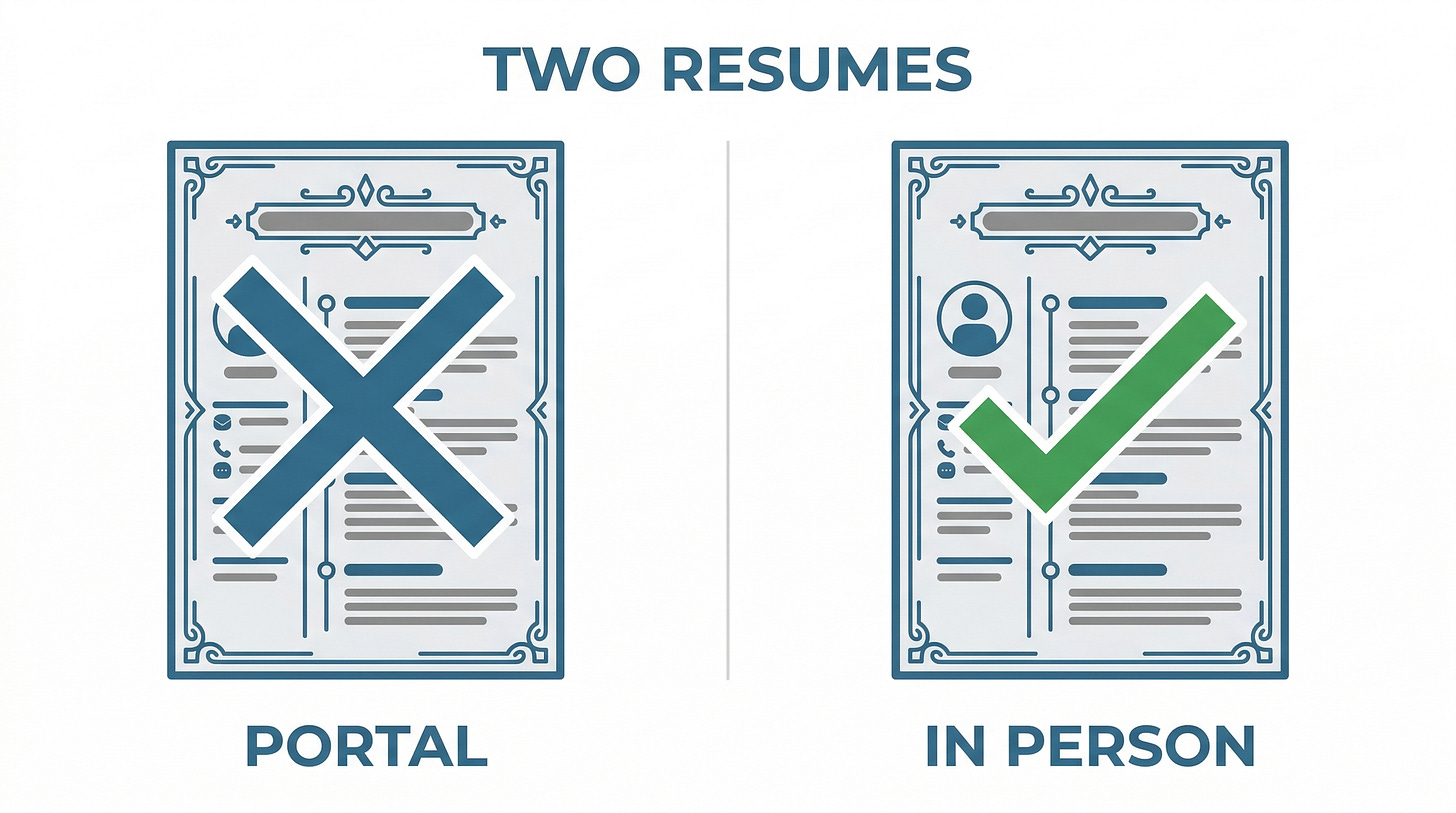

The copy-paste test

You can check this yourself right now. Open your Canva resume PDF. Select all the text (Ctrl+A or Cmd+A). Copy it. Paste it into a blank text document.

If what you see in the text document matches what you see in the PDF, your resume is probably parsing correctly. If you see garbled text, missing sections, reordered content, or placeholder data you didn’t write, your resume is broken and has been broken for every job you’ve applied to with it.

One person helped a friend run exactly this experiment. The friend had been using a Canva resume and getting zero callbacks. They rebuilt it in Word, submitted eight applications, and had a job within two weeks. Same qualifications. Same market. The only thing that changed was whether the ATS could read the file.

The AI resume arms race

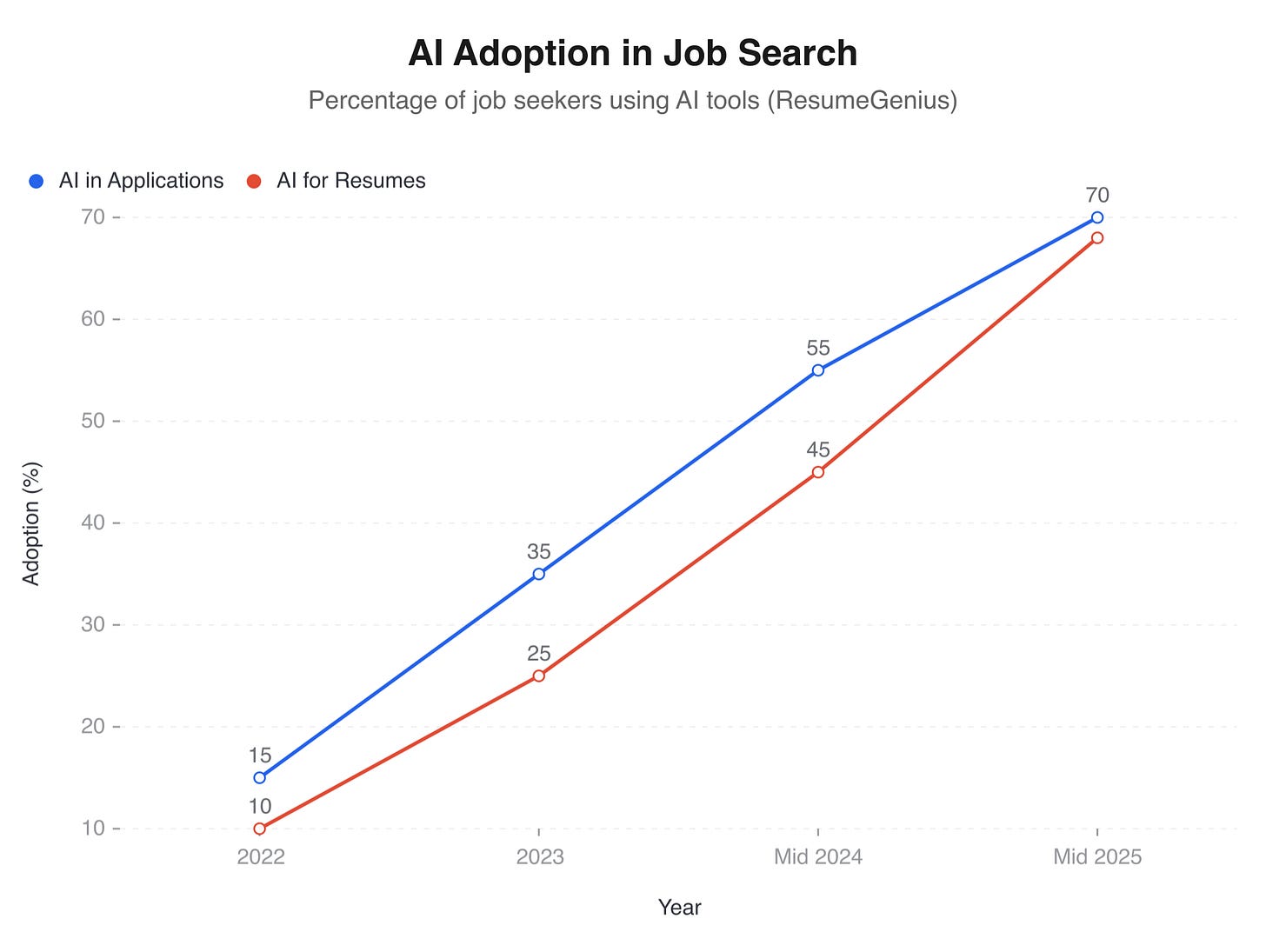

By mid-2025, 70% of job seekers reported using AI in some part of their application process. Of those, 68% used AI specifically to write their resumes. ChatGPT, Claude, and Google’s NotebookLM became the default first step for a generation of applicants.

The appeal is obvious. Upload a job description to NotebookLM or paste it into ChatGPT, and within seconds you have a tailored resume with all the right keywords, polished bullet points, and professional-sounding language. You can generate cover letters, simulate interview questions, and produce application materials for dozens of roles in a single afternoon. The friction of applying has been reduced to nearly zero.

That friction was there for a reason.

The homogeneity trap

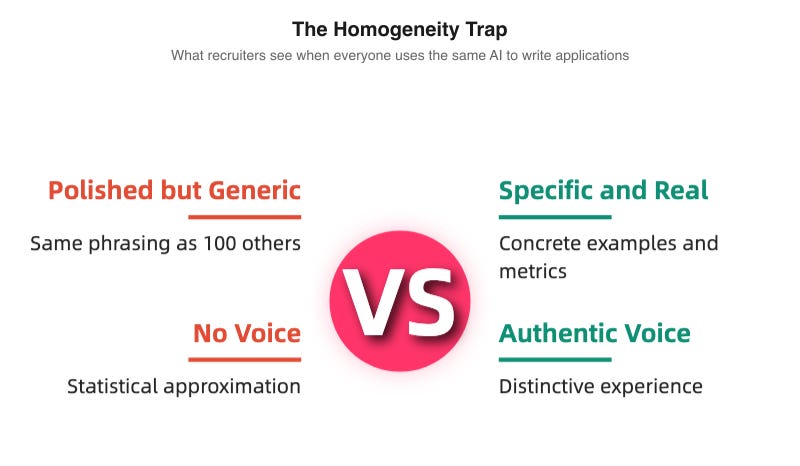

A hiring manager who’s reviewed applications for over a decade described a new phenomenon. In a single week, she received six applications for one position, and nearly every resume looked the same. Same templates. Same phrasing. Same “results-driven professional with a proven track record of delivering cross-functional solutions.” The resumes had been generated by the same handful of AI tools. It showed.

When everyone uses the same AI to write their applications, everyone sounds like the same person. A recruiter scanning a stack of AI-generated resumes is reading variations on a single voice. Individual qualifications blur together. Distinctive experiences vanish behind a wall of polished, interchangeable prose.

As Tomas Chamorro-Premuzic wrote in the Harvard Business Review in January 2026:

“AI has enabled the mass production of artificially polished candidates.”

If you’ve used ChatGPT to “improve” your resume, the improvement is real in isolation: your bullet points are crisper, your language is more professional, your formatting is cleaner. But when every applicant does the same thing, the improvement cancels itself out. You’ve joined an arms race where the weapons are identical and nobody gains an advantage.

The personalisation gap

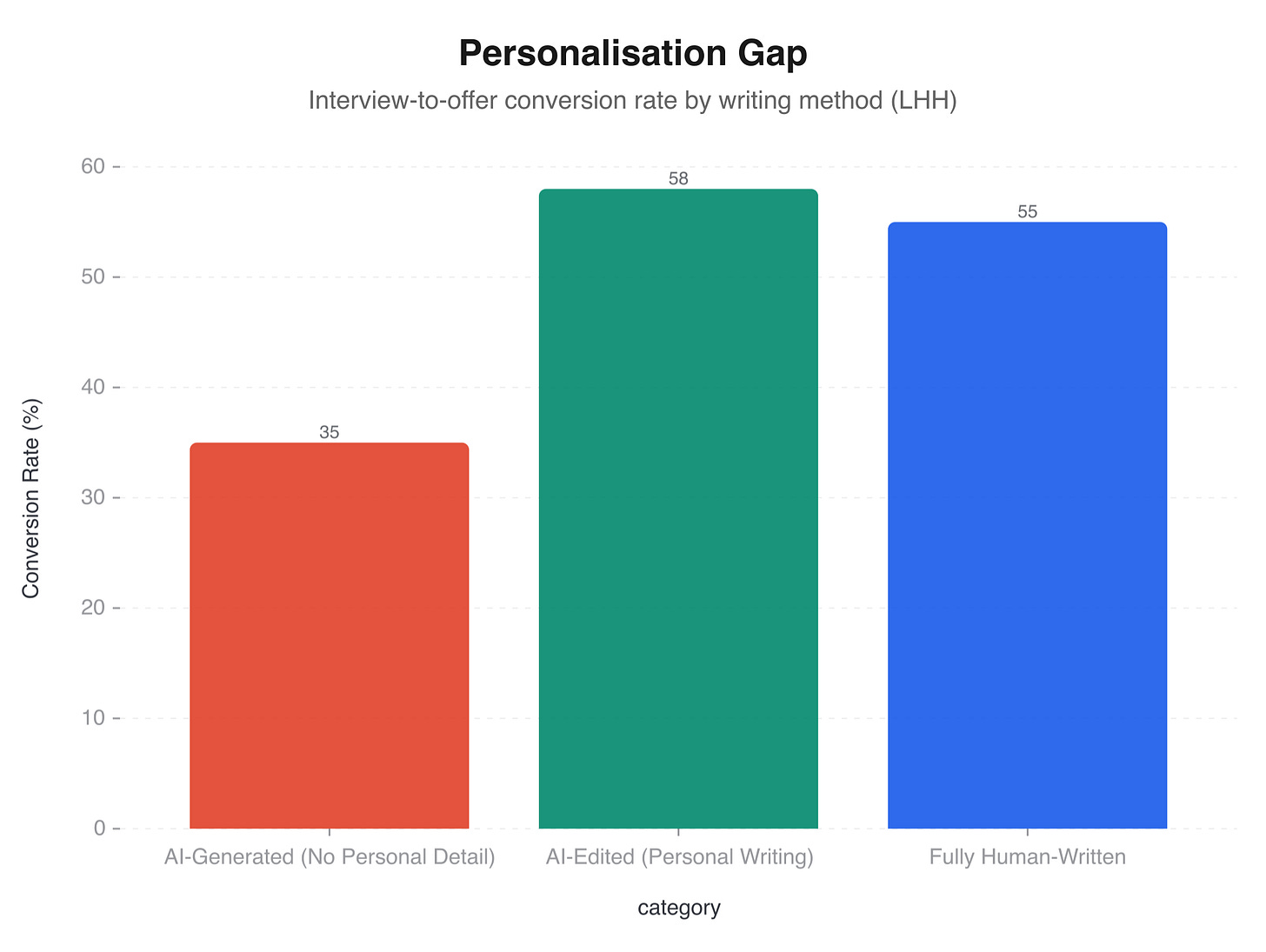

An LHH study on AI-assisted job applications found that candidates who used AI to generate application materials without adding personal detail or genuine examples experienced a 40% lower interview-to-offer conversion rate compared to candidates who wrote their own applications or who used AI as an editing tool rather than a ghostwriter.

The distinction is real. Using AI to check your grammar, suggest stronger verbs, or identify gaps in your experience is editing. Using AI to write your resume from scratch, based on nothing but a job description, is outsourcing your professional identity to a statistical model that produces whatever sounds most “normal.”

Hiring managers can feel the difference. An AI-generated resume is technically flawless and emotionally empty. No voice, no texture, no evidence of a specific human being who did specific things. It reads like a press release about a person who doesn’t exist.

The NotebookLM question

NotebookLM, Google’s AI research tool, has become popular among job seekers for a slightly different reason. You can upload multiple job descriptions and have NotebookLM identify common requirements, suggest keywords, and even generate study materials for interview preparation. The tool is genuinely useful for research and preparation.

The problem arises when people use it to generate the application itself. NotebookLM’s output is sophisticated, well-structured, and unmistakably not you. A recruiter reading a NotebookLM-generated cover letter isn’t reading about your career; they’re reading NotebookLM’s statistical approximation of what a career like yours should sound like.

And recruiters increasingly believe they can spot it.

The detection tools are here

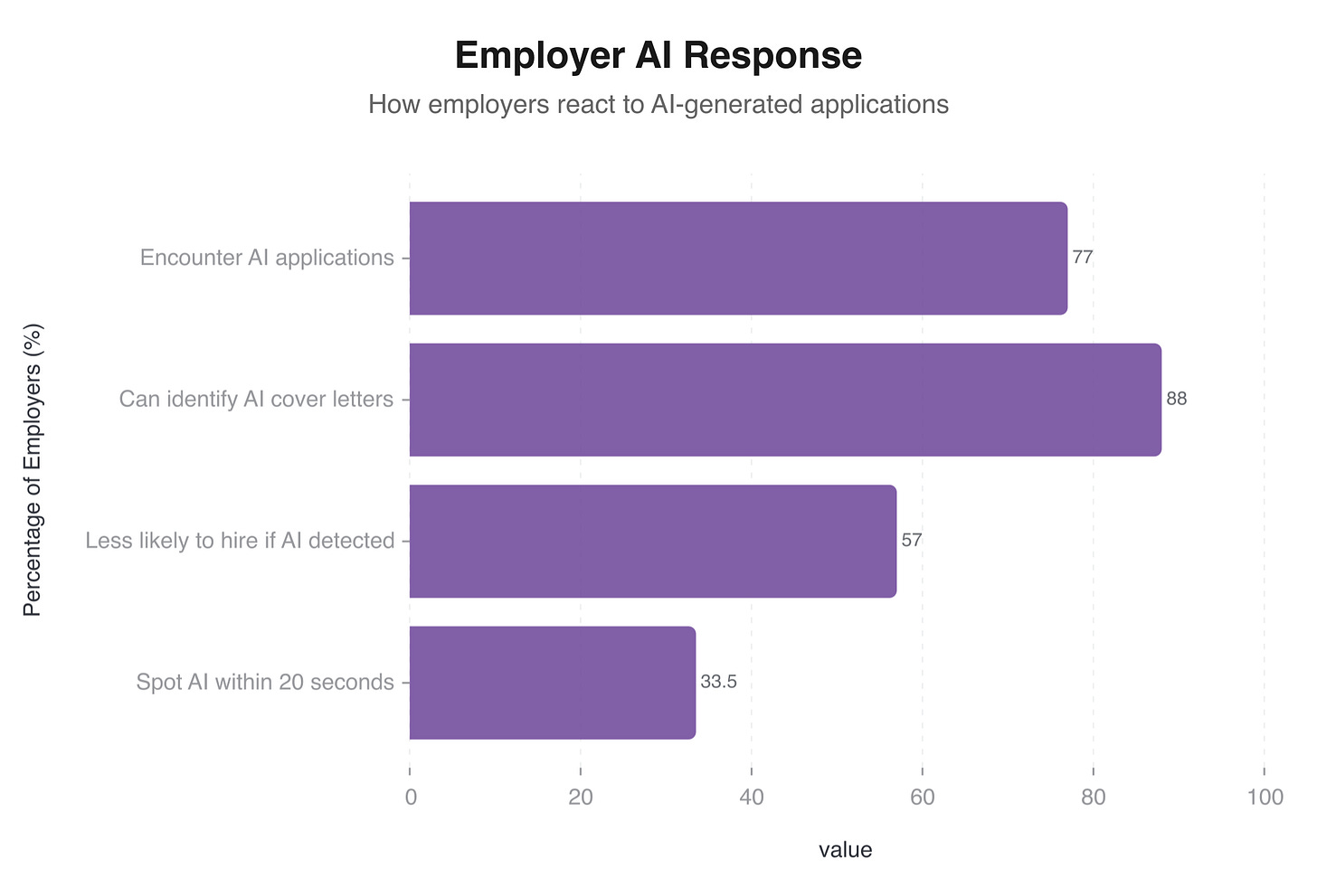

The other half of the arms race is already underway. As applicants adopt AI to write their materials, employers are adopting AI to detect it.

Pangram Labs, an AI detection platform, claims 99.98% accuracy in identifying AI-generated text. It’s SOC 2 certified (meaning it meets recognised security standards for handling data) and is being marketed directly to recruiters and hiring teams. GPTZero, another detection service, offers batch processing specifically designed for application screening: upload a stack of cover letters and get a report flagging which ones were likely AI-generated.

The numbers on detection

77% of hiring professionals say they now encounter AI-generated application materials regularly. Most recruiters believe they can spot AI-generated text, but the data disagrees. A ResumeBuilder.com study found that 82% of hiring managers couldn’t reliably distinguish AI-generated cover letters from human-written ones. Another study put detection accuracy at just 52%, even when 67% of the recruiters surveyed claimed they could tell the difference.

That gap between confidence and accuracy is telling. Recruiters are making snap judgements about which applications “feel” AI-generated, but they’re wrong nearly half the time.

The consequence is real regardless. A ResumeBuilder.com survey found that a significant proportion of hiring managers say they’d deprioritise applications they suspect are AI-generated. Whether or not they’re right about which ones are AI doesn’t change the risk to you. Yikes.

A hiring manager with over a decade of experience put it bluntly: she can tell which resumes were AI-generated, and those go straight to the bottom of the pile. The data suggests she may be wrong about which ones are AI as often as she’s right. But her perception is what counts when you’re the applicant.

The institutional response

Major employers are adjusting their processes in response. Google, Cisco, and McKinsey have all expanded their use of in-person interviews and live assessments, partly as a response to the difficulty of evaluating candidates whose written materials may not reflect their genuine abilities. “Do you use AI tools?” is increasingly a core competency question in interviews. The test isn’t whether candidates use AI (most do). It’s whether they can articulate what they personally contributed versus what the tool generated.

Some people have pushed back by testing Canva resume PDFs through AI chatbots and noting that the chatbots parsed them fine. But an AI chatbot isn’t an ATS. Chatbots are designed to extract meaning from messy input; they’re trained to be forgiving. ATS systems are structured parsers with rigid expectations about document formatting. Testing your resume through ChatGPT tells you whether ChatGPT can read it. It tells you nothing about whether Workday or iCIMS can.

There’s also a privacy concern worth flagging. When you paste someone else’s resume into an AI tool to “test” it, you’re feeding that person’s name, address, phone number, work history, and educational background into a third-party system. Some of these tools use submitted data for model training. Running a friend’s personal information through an AI tool without their knowledge raises questions that go well beyond resume formatting.

257 applicants per opening

The tools are part of the problem, but they operate inside a job market that has become measurably harder in ways that rarely make it into career advice columns.

Application volume surged roughly ninefold between 2022 and 2025, according to Joveo, a recruitment advertising platform. In 2024, there were 207.2 applications per job opening on average. By 2025, that number had risen to 257.6 applications per opening.

For every job posting you apply to, you are competing with roughly 256 other applicants. A meaningful portion of those competitors are using AI to apply faster and to more roles simultaneously, which feeds right back into the volume problem.

The supply side

The American economy added only 181,000 jobs in 2025, a figure that was revised down from an initially reported 584,000. That revision alone should give you a sense of how uncertain the employment data is. As of November 2025, there were 1.1 unemployed workers for every job opening, meaning that even if every open position were filled instantly, there would still be more job seekers than jobs.

Ghost jobs

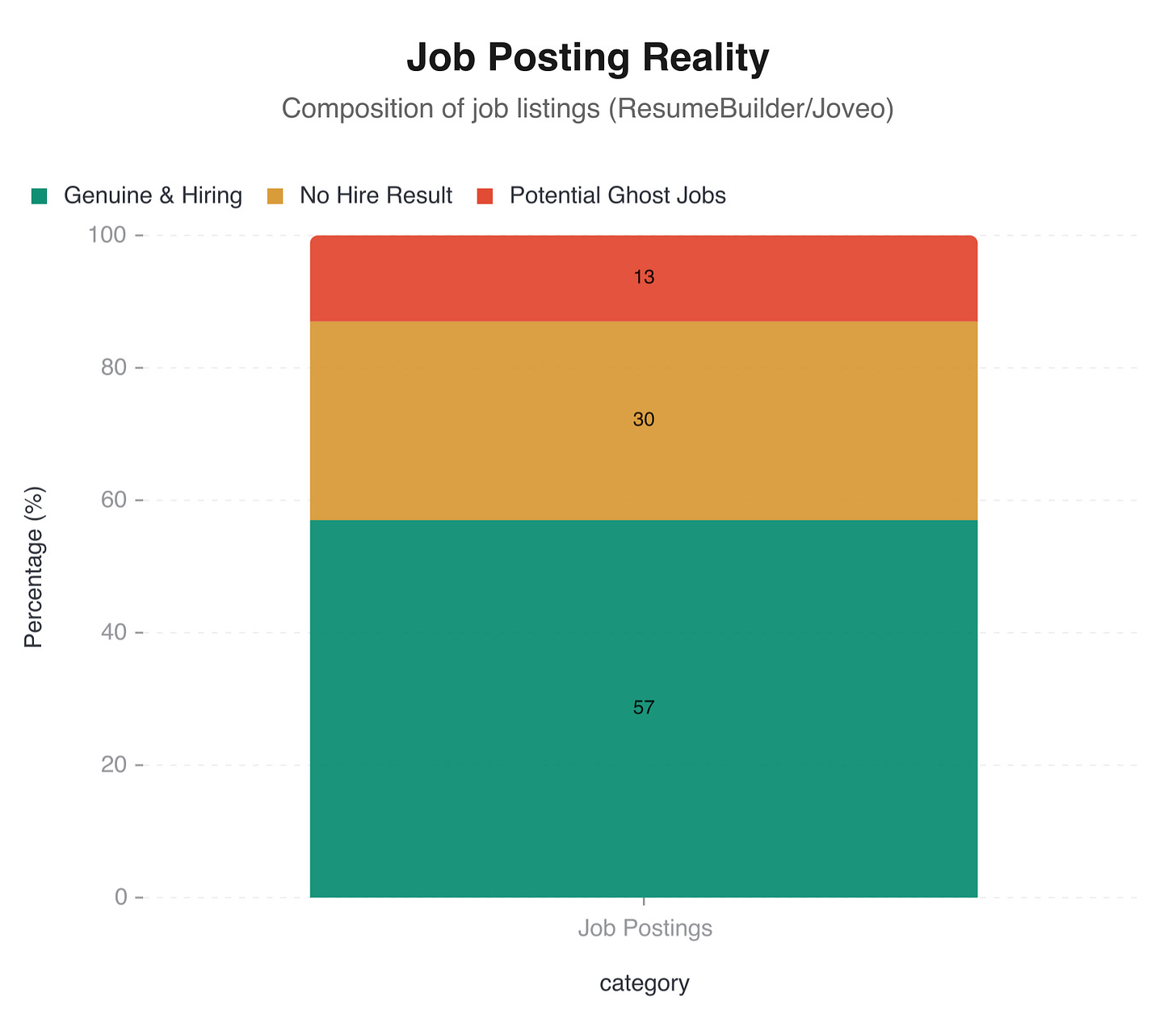

Roughly 30% of job openings don’t result in a hire. Some estimates suggest as many as 43% of posted positions may be ghost jobs: listings that were posted with no intention of filling them, or that were filled internally before the posting went live, or that exist solely to collect resumes for a future pipeline.

Ghost jobs exist for several reasons. Companies sometimes keep listings active to project growth to investors or the public. Sometimes the listing stays up because nobody remembered to take it down. Sometimes hiring managers post a role to “test the market” with no budget approved to fill it. Maddening.

If you’ve applied to a hundred jobs and heard back from three, some of those ninety-seven were never real.

Experience inflation

The requirements listed on job postings have crept upward, particularly in technology roles. The percentage of tech positions requiring five or more years of experience rose from 37% to 42% between 2023 and 2025. Entry-level positions increasingly demand mid-level experience, and mid-level positions increasingly demand senior-level credentials.

The “hidden job market” myth

You may have heard that “80% of jobs are never posted” and exist in a hidden job market accessible only through networking. The evidence for this claim is thin. Research suggests that only 6-10% of jobs are genuinely hidden, meaning they’re filled through referrals or direct outreach without ever being publicly posted. Job boards remain the dominant channel, filling about 47% of positions.

The hidden job market is real in the sense that networking provides a meaningful advantage (more on that shortly). But the idea that most jobs exist in some secret market accessible only by knowing the right people is exaggerated, and it’s often invoked to sell networking courses and career coaching.

The boring strategies that produce results

The strategies that work best in the current job market are, without exception, unglamorous. None of them are exciting. None of them make good social media content. They work.

The two-resume strategy

Multiple job seekers have independently arrived at the same approach: maintain two versions of your resume.

Version one is the ATS-safe resume. This is the one you submit through online application portals. It’s a single-column layout in a standard font (Arial, Calibri, Garamond, Times New Roman). It uses standard section headings: “Work Experience,” “Education,” “Skills.” It’s saved as a .docx file or a clean PDF exported from a word processor, not from a design tool. It’s visually plain and functionally reliable. This is the resume that machines read.

Version two is the portfolio resume. This is the one you bring to interviews, send directly to contacts, or link from your LinkedIn profile. This version can use creative layouts, custom fonts, colour, and visual hierarchy. This is the resume that humans look at.

An art director and graphic designer shared a story that illustrates the logic perfectly. Despite having a visually impressive portfolio-style resume, they stripped all the design out of their ATS submission version: no columns, no graphics, no colour, no custom fonts. Just plain text in a standard format. It worked.

The copy-paste test (step by step)

Before submitting your resume anywhere, do this:

1. Open your resume file (PDF or .docx)
2. Press Ctrl+A (or Cmd+A on Mac) to select all text
3. Press Ctrl+C (or Cmd+C) to copy
4. Open a blank text document (Notepad, TextEdit, whatever)
5. Press Ctrl+V (or Cmd+V) to paste
6. Read what appears

If the pasted text matches your resume’s content, in roughly the right order and with no garbled characters, your resume is likely parsing correctly. If you see missing sections, reordered text, garbled characters, or placeholder content you didn’t write, your resume is broken.

Do this test every time you update your resume or export it from a new tool.
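
If you would rather not eyeball the pasted text every time, the same check can be roughly automated. The Python sketch below assumes you have already pasted the text into a string; the function name `check_pasted_text` and the example phrases are made up for illustration, and it only checks for phrases you tell it to look for.

```python
def check_pasted_text(pasted, expected_phrases, placeholder_phrases=()):
    """Compare pasted resume text against phrases you expect (and don't expect)."""
    # Collapse whitespace and ignore case so line breaks don't cause false alarms.
    text = " ".join(pasted.lower().split())

    missing = [p for p in expected_phrases if p.lower() not in text]
    leftovers = [p for p in placeholder_phrases if p.lower() in text]

    # Expected phrases that were found should appear in the order you listed them.
    positions = [text.find(p.lower()) for p in expected_phrases if p.lower() in text]
    in_order = positions == sorted(positions)

    return {
        "empty": not text,               # no text layer at all
        "missing": missing,              # content the parser would not see
        "placeholders_found": leftovers, # ghost text from the template
        "in_order": in_order,            # reading order roughly preserved
    }

pasted = "Circus Assistant\nLos Angeles\nRegistered Nurse\nPortland"
report = check_pasted_text(
    pasted,
    expected_phrases=["Registered Nurse", "Portland", "Work Experience"],
    placeholder_phrases=["Circus Assistant", "lorem ipsum"],
)
print(report)
```

In this example the report flags a missing “Work Experience” heading and leftover “Circus Assistant” placeholder text. A clean report is not proof that every ATS will parse the file, only that the obvious failures are absent.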

Keyword mirroring

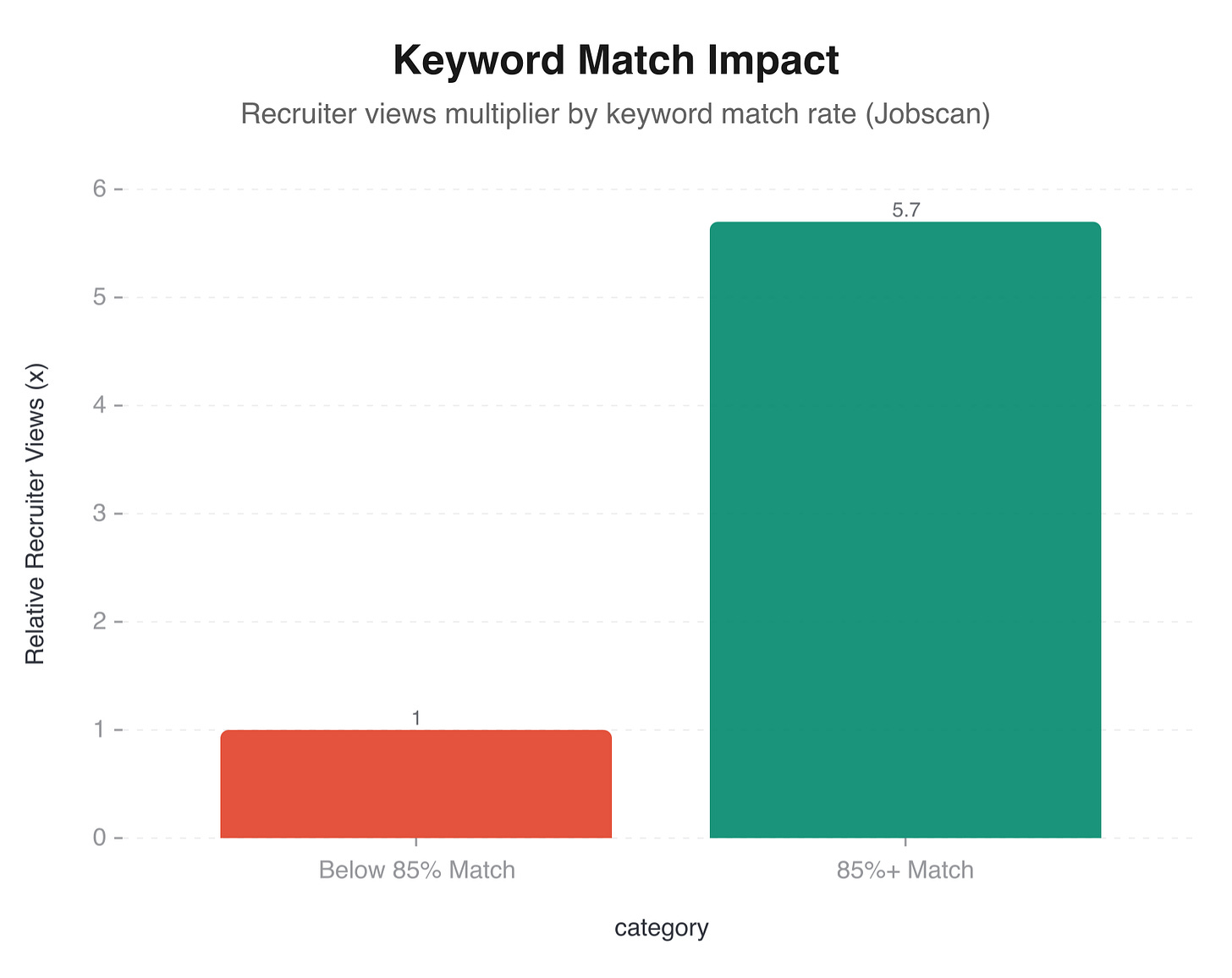

ATS systems match your resume against the job description. The closer your language mirrors the job posting’s terminology, the higher you rank in the recruiter’s search results. Candidates whose resumes achieve an 85% or higher keyword match with the job description receive 5.7 times more recruiter views than those with lower match rates.

This isn’t keyword stuffing. Keyword stuffing means cramming irrelevant terms into your resume to game the system, and it doesn’t work: modern ATS systems use contextual analysis and can spot terms that appear without supporting context. Keyword mirroring means using the same language the employer uses to describe the skills and experience you genuinely have.

If the job description says “project management,” your resume should say “project management,” not “programme oversight.” If it says “data analysis,” use “data analysis,” not “quantitative research.” Same skills, same terminology.
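
To see what mirroring does to a match rate, here is a rough Python sketch. How any given ATS actually scores a match is not public, so treat this as an assumption-laden toy: the term list is hand-picked, and matching is plain case-insensitive substring search.

```python
def keyword_match_rate(job_terms, resume_text):
    """Return the share of job-description terms found in the resume, plus the hits."""
    if not job_terms:
        raise ValueError("job_terms must not be empty")
    text = " ".join(resume_text.lower().split())
    matched = [term for term in job_terms if term.lower() in text]
    return len(matched) / len(job_terms), matched

# Hypothetical terms pulled by hand from a job description.
job_terms = ["project management", "data analysis", "stakeholder reporting", "SQL"]

before = "Led programme oversight and quantitative research; built SQL dashboards."
after = "Led project management and data analysis; built SQL dashboards."

before_rate, before_hits = keyword_match_rate(job_terms, before)
after_rate, after_hits = keyword_match_rate(job_terms, after)
print(before_rate, before_hits)  # 0.25 ['SQL']
print(after_rate, after_hits)    # 0.75 ['project management', 'data analysis', 'SQL']
```

The work described is identical in both versions; only the vocabulary changed. “Stakeholder reporting” stays unmatched in both, and it should stay that way unless you have done it. Substring matching is also crude (the term “SQL” would match inside “MySQL”), which is one reason to read the result as a prompt for review rather than a score.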

Metrics in every bullet point

73% of hiring managers identify quantified achievements as the number-one differentiator between resumes that get interviews and resumes that don’t.

The difference between a weak and a strong bullet point is almost always a number:

Weak: “Managed social media accounts and grew the audience.”

Strong: “Grew Instagram following from 4,200 to 31,000 in 14 months, generating an average of 340 link clicks per post.”

The second version gives a recruiter something concrete to evaluate. The first version could describe anyone.

If you don’t have exact numbers, estimate responsibly. “Reduced customer complaint resolution time by approximately 30%” is still better than “improved customer service processes.” Recruiters understand that not every metric is precise; they care that you think in terms of impact.

AI as editor, not author

The LHH data on interview-to-offer conversion rates makes the case clearly: use AI to improve your writing, not to replace it. The most effective approach is to write your resume yourself, drawing on your own experience and your own language, and then use AI to refine what you’ve written.

Ask ChatGPT or Claude to check your bullet points for clarity. Ask it to suggest stronger action verbs. Ask it to identify skills from the job description that you’ve forgotten to include. Use it as a proofreading tool, a thesaurus, a structure checker.

Don’t ask it to write your resume for you. The moment you do, your application joins the pile of identical, AI-generated documents that recruiters have learned to recognise and deprioritise.

Apply early

52% of recruiters review applications in the order they arrive. Apply within 48 to 72 hours of a job being posted. The earliest applicants receive disproportionate attention, partly because recruiters begin forming their shortlist immediately and partly because later applicants are competing against candidates who have already been flagged as strong matches.

Set up job alerts. Check listings daily. When you see a role that matches your profile, apply that day.

The networking advantage, measured

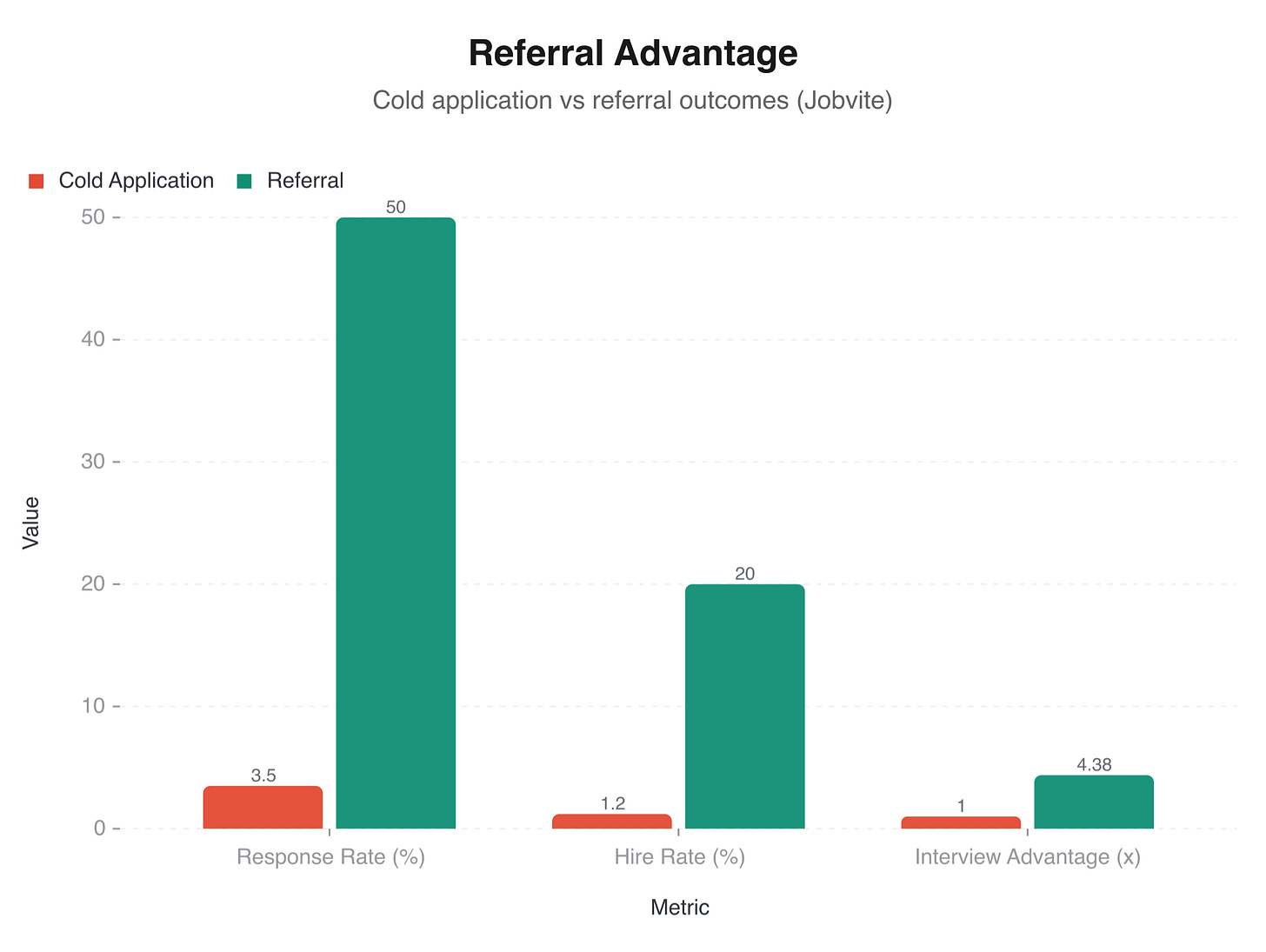

Referrals account for roughly 7% of all job applications but lead to 40% of hires. A referred candidate has a hiring rate of approximately 20%, compared to 1.2% for a cold application. You are 4.38 times more likely to get an interview if you’re connected to someone at the company.

Networking isn’t a nice-to-have supplement to your job search. It is, by a wide margin, the most effective strategy available.

The response rate gap

Cold applications (submitted through job boards with no internal connection) have a 2-5% response rate. For every twenty to fifty applications you send, expect one response. Not one interview. One response, which might be a rejection.
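
The arithmetic behind those figures is short enough to check yourself. The Python sketch below assumes each application is an independent event with the same response rate, which is a simplification; the function names are illustrative.

```python
def applications_per_response(response_rate):
    """Average number of applications needed for one response."""
    return 1 / response_rate

def chance_of_any_response(response_rate, applications):
    """Probability of at least one response, assuming independent applications."""
    return 1 - (1 - response_rate) ** applications

for rate in (0.02, 0.05):
    needed = round(applications_per_response(rate))
    odds = chance_of_any_response(rate, needed)
    print(f"{rate:.0%} response rate: about {needed} applications per response; "
          f"chance of at least one reply after {needed} applications: {odds:.0%}")
```

“One response per twenty applications” is an average, not a guarantee. Under these assumptions, at a 5% response rate there is still roughly a one-in-three chance that twenty applications produce no reply at all.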

A referral short-circuits the entire process. The recruiter already has a signal that you’re worth talking to, because someone they trust has vouched for you. Your resume gets read more carefully, your application moves faster, and you enter the process with a degree of credibility that no amount of resume optimisation can replicate.

The 80/20 approach

Career advisers often recommend spending 80% of your job search effort on networking and 20% on online applications. Given the data, that ratio makes sense. Twenty cold applications might yield one response. Four genuine networking conversations might yield an introduction, which might yield an interview, which has a dramatically higher conversion rate. Do the maths.

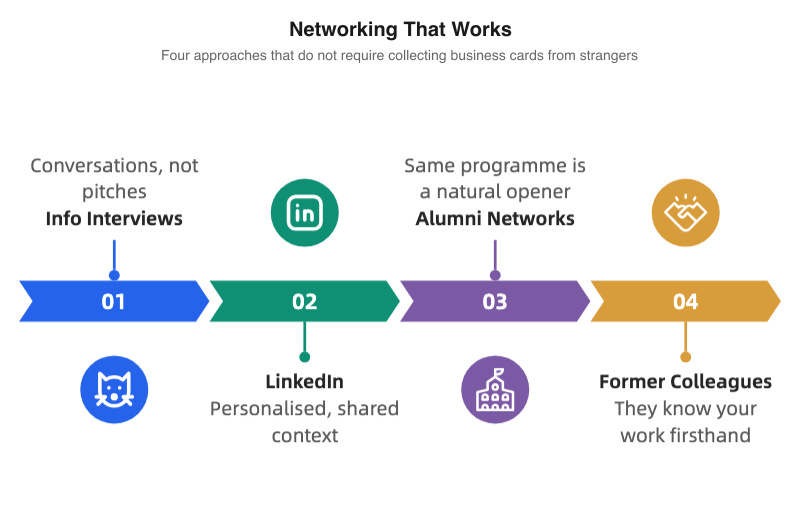

Networking that doesn’t feel awful

Most people hate networking because they imagine it as attending awkward events and collecting business cards from strangers. It doesn’t have to work that way.

Informational interviews are conversations, not pitches. Reach out to someone who works at a company or in a role you’re interested in. Ask them about their experience. Ask what the team is working on. Ask what skills they wish they’d developed earlier. People are remarkably willing to talk about their jobs when they’re not being sold to.

LinkedIn, used strategically. Don’t blast connection requests to strangers. Find people you share something with: same university, same previous employer, same professional group, same city. Send a personalised message that references the specific connection. Follow up with genuine engagement on their posts. The goal is to build a relationship, not to extract a referral.

Alumni networks are underused. Most universities maintain alumni directories, and most alumni are willing to help recent graduates or fellow alumni. Being able to say “I noticed you graduated from the same programme” is a natural conversation starter that doesn’t feel transactional.

Former colleagues and managers are the strongest referral sources. They’ve already worked with you. They know your capabilities. They can vouch for you with specificity that no cover letter can match. Stay in touch with people you’ve worked with, even if you’re not currently job searching.

Referrals account for 7% of applications but lead to 40% of hires. No amount of resume optimisation matches the signal of a trusted colleague saying “you should talk to this person.”
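
Those two percentages imply a per-application advantage that is easy to work out. The sketch below is a rough estimate, not a measured result: it assumes the 7% and 40% figures describe the same pool of applications and hires, and the function name is hypothetical.

```python
REFERRAL_SHARE_OF_APPLICATIONS = 0.07  # figure quoted above
REFERRAL_SHARE_OF_HIRES = 0.40         # figure quoted above


def relative_hire_likelihood(application_share: float, hire_share: float) -> float:
    """How many times likelier a referred application is to become a hire
    than a non-referred one, given each group's share of applications and hires."""
    referred = hire_share / application_share
    non_referred = (1.0 - hire_share) / (1.0 - application_share)
    return referred / non_referred


ratio = relative_hire_likelihood(
    REFERRAL_SHARE_OF_APPLICATIONS, REFERRAL_SHARE_OF_HIRES
)
print(f"A referred application is about {ratio:.1f}x as likely to end in a hire")
```

On those assumptions, a referred application is roughly nine times as likely to end in a hire as one that arrives cold.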

The feedback loop nobody wins

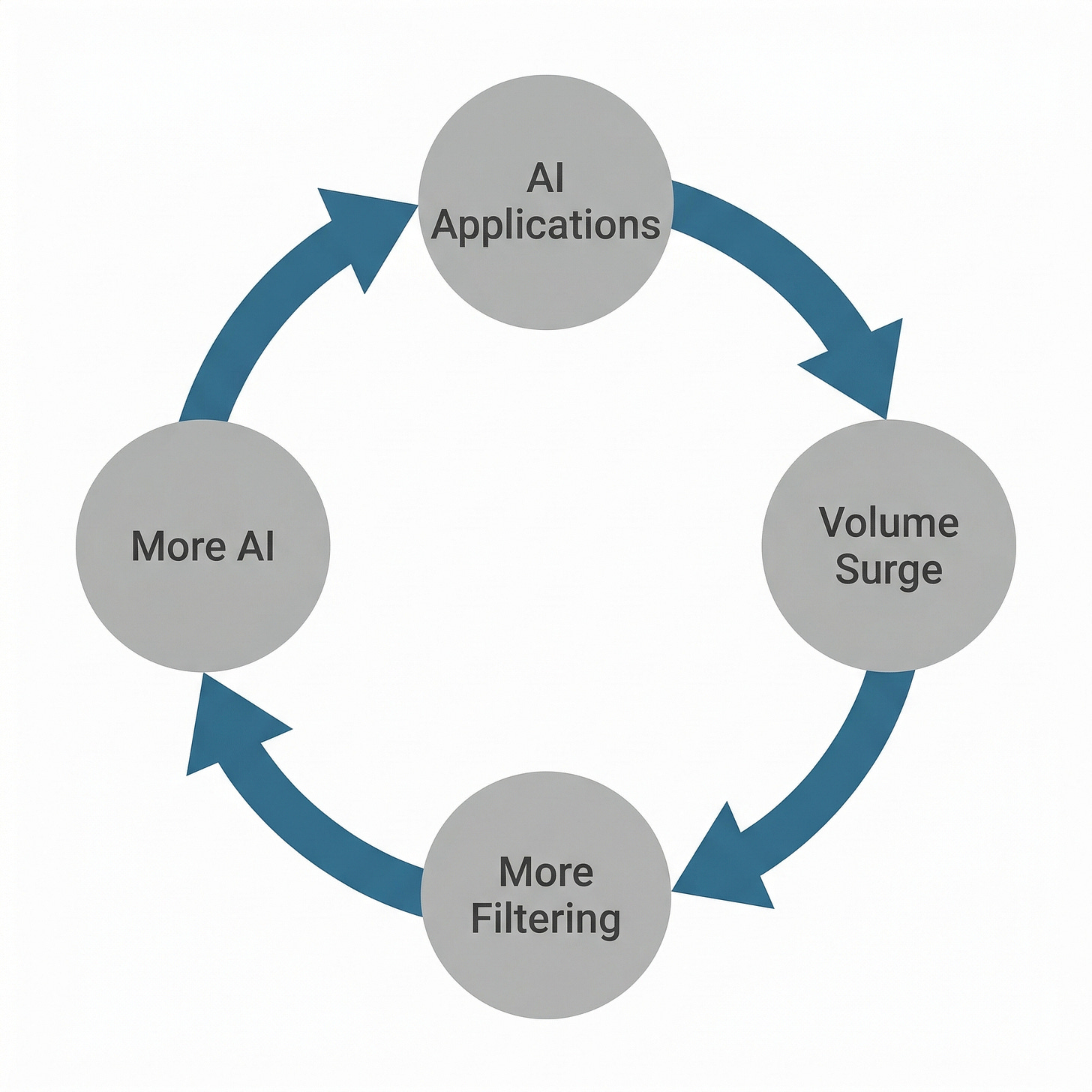

The individual problems (Canva’s broken PDFs, AI-generated resume homogeneity, the myth-fuelled anxiety about ATS) are symptoms of something larger. The job application process is experiencing a systems failure where every participant’s rational behaviour makes the collective outcome worse.

Job seekers use AI to apply faster and to more positions, which increases application volume, which overwhelms recruiters, who respond by relying more heavily on automated filtering, which incentivises applicants to optimise further for those filters, which makes applications more homogeneous, which makes it harder for anyone to stand out.

An arms race with no winners. What a mess.

The tools aren’t villains

Canva is a good product used in the wrong context. It was built for designing social media graphics, presentations, and marketing materials. It wasn’t built to produce machine-readable documents for enterprise HR software. Blaming Canva for resume parsing failures is like blaming a hammer for being a bad screwdriver. The tool does what it does; the problem is the mismatch between the tool and the task.

NotebookLM is a genuinely useful research tool. It can help you understand an industry, identify patterns across job descriptions, and prepare for interviews. The problem isn’t the tool but the temptation to let it replace your own voice and experience.

The comment that resonated most with job seekers was also the simplest: “Perhaps there should be more humans and less robots scanning resumes.” There’s a clean truth to that. But it ignores the economics. When a single job posting attracts 257 applications, no recruiter has time to read each one carefully. The ATS exists because the volume demands it.

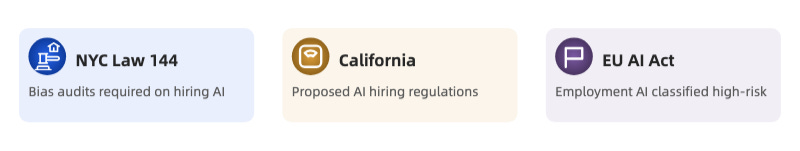

Regulation is catching up

Governments are starting to respond. New York City’s Local Law 144 requires employers to conduct bias audits on automated employment decision tools before using them. California has proposed regulations governing AI use in hiring decisions. The EU’s AI Act classifies employment-related AI systems as “high-risk,” subjecting them to requirements around transparency, data quality, and human oversight.

These regulations are early and inconsistent. Different jurisdictions, different standards, significant gaps. But they signal a growing recognition that when machines make decisions about people’s livelihoods, even ranking-and-sorting decisions, there need to be rules about how those machines work and what recourse people have when they get it wrong.

The privacy question

Every AI tool in the hiring pipeline, on both sides, involves processing personal data. When you upload your resume to an AI optimiser, your work history and personal details enter that company’s systems. When an employer runs your cover letter through an AI detection tool, your writing is analysed by a third party. The data flows are opaque, consent is buried in terms of service, and the long-term implications of feeding millions of resumes into AI training datasets are unclear.

A reasonable question for any job seeker to ask before using an AI tool: where does my data go, who else sees it, and what happens to it after I close the tab?

The fundamentals haven’t changed

The most effective job search strategies in 2026 are the same ones that worked in 2006: write clearly about what you’ve done, use numbers to show your impact, format your documents so the systems can read them, apply early, and spend most of your energy building real relationships with real people.

The tools have changed. The principles haven’t.

The candidate who spent eighteen months as a ghost in the system got a job within two weeks of switching to a plain Word document. The art director who stripped all design from their ATS resume started getting callbacks. The hiring managers who prize authenticity keep putting AI-generated materials at the bottom of the stack.

Your resume doesn’t need to be beautiful.

It needs to be readable, specific, and yours.

1 ATS parsing accuracy can vary significantly between platforms. iCIMS, Workday, and Greenhouse each use different parsing engines, meaning a resume that parses well in one system may fail in another. Testing with the copy-paste method catches the most common failures across all systems.

2 The 72% failure rate for Canva templates was measured across the 50 most popular resume templates on Canva’s platform as of March 2026. Individual templates may perform better or worse depending on their specific design elements and export settings.

3 NotebookLM’s terms of service state that uploaded documents are used to provide the service and are not used for model training. However, terms of service change, and the distinction between “providing the service” and “training” is not always clear in practice.