They shipped Qwen 3.5, then quit the next day

Alibaba wanted to split the Qwen team across business units. The team chose to split from Alibaba instead.

The exit

The graphic said “ALIBABYE.”

TBPN (think ESPN for tech bros) posted it recently, with photos of three faces that most seasoned AI engineers would recognise in a heartbeat.

A few days earlier, Alibaba had released the Qwen 3.5 small model series, the latest additions to its open-weight family. Elon Musk had praised the models’ “impressive intelligence density” on X. The release was drawing praise. The benchmarks were strong. The vibes were good.

Then four of the people most responsible for building it just walked out the door, their departures surfacing over the span of roughly 48 hours.

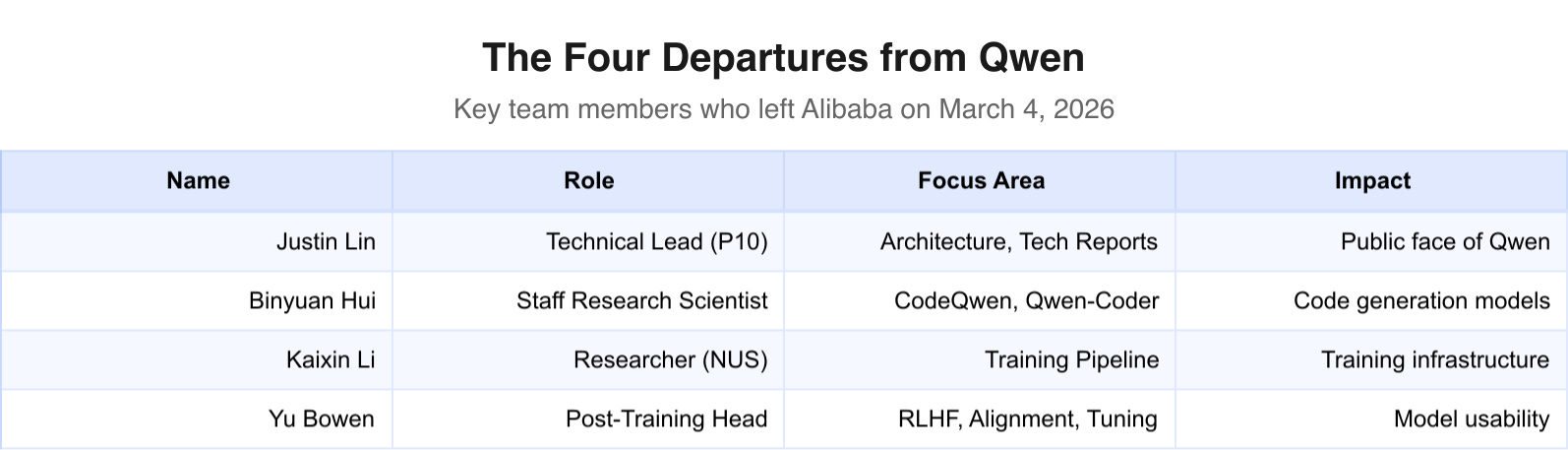

We’re talking about the technical lead. The post-training head. The code models specialist. A researcher who contributed to the training pipeline. The people whose names show up on the technical reports and whose GitHub commits sit inside thousands of production systems.

They shipped it, and then they left. Poof.

Who walked out

Junyang “Justin” Lin (@JustinLin610) was the technical lead and the public face of Qwen to the international developer community. He was reportedly the youngest P10 at Alibaba, a level roughly equivalent to Distinguished Engineer. It’s a rank most engineers never reach in an entire career; Justin reached it before his peers hit Senior. He was lead author on Qwen’s technical reports and the person developers tagged when something broke or something shipped.

Binyuan Hui (@huybery) was a staff research scientist who led the CodeQwen and Qwen-Coder work. If you’ve used Qwen for code generation, autocomplete, or anything involving a terminal, Hui’s fingerprints are on it. Co-author on multiple technical reports, deep in the weeds of making language models actually write software that runs.

Kaixin Li was a researcher who contributed to the training pipeline. Based at the National University of Singapore and described in reports as an intern on the Qwen team, Li was less public-facing than Lin or Hui, but his departure alongside senior members underscored the breadth of the exit.

Yu Bowen led post-training. This one deserves a beat of explanation, because post-training is the work that separates a raw language model from something you’d actually want to use in the real world.

When a model finishes pre-training, it’s like a student who’s read every textbook in the library but has no idea how to hold a conversation. Post-training (RLHF, alignment, instruction tuning) is what teaches it to be useful, safe, and not weird. Yu Bowen ran that for Qwen.

His departure is the most consequential of the four, and I don’t think it’s even close. You can backfill individual contributors. Replacing the person who understood how to align an entire model family, who held the institutional knowledge of what worked and what didn’t across every Qwen release? That’s not something you can fix overnight.

What they built

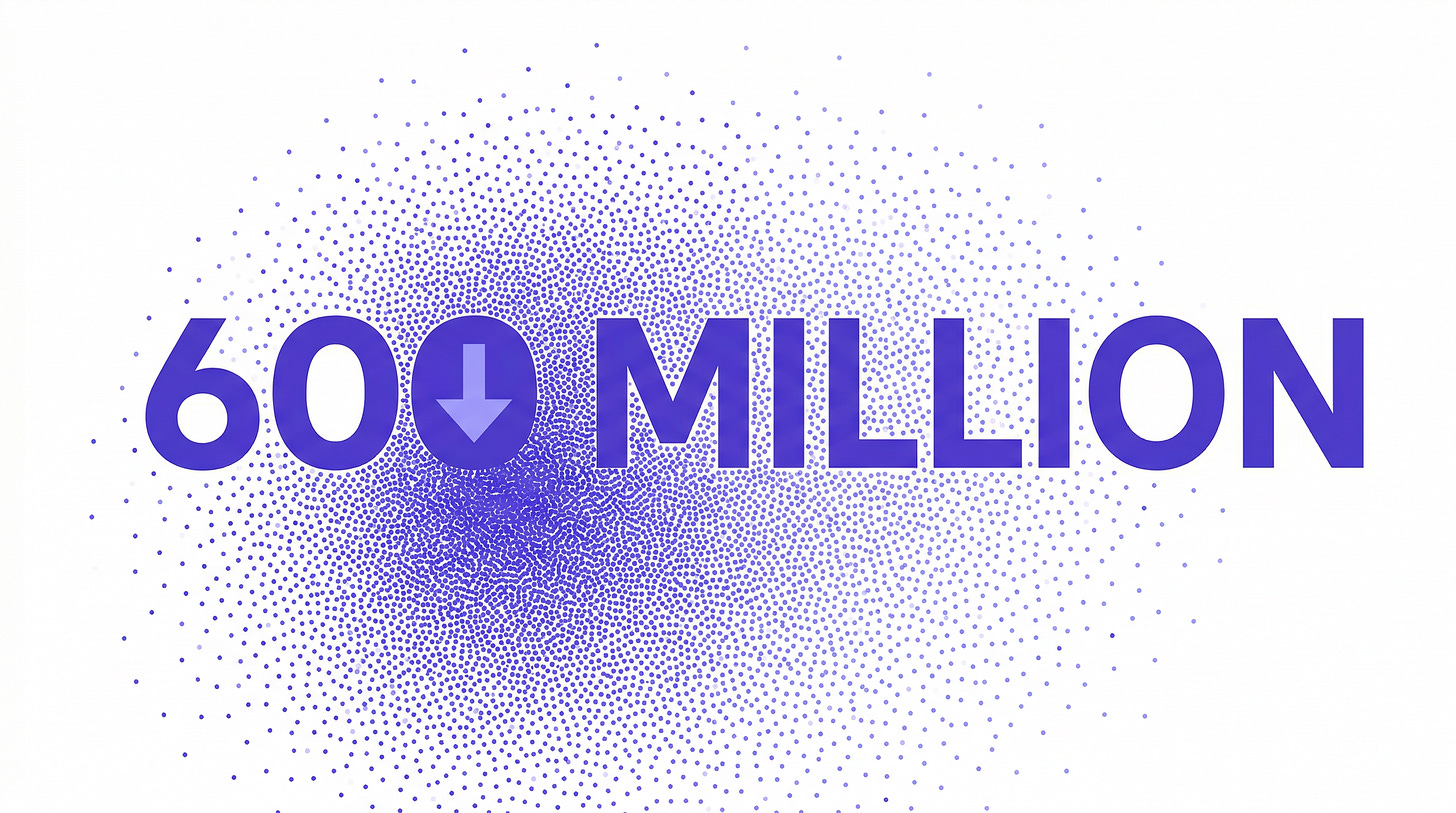

The Qwen model family has been downloaded more than 600 million times on Hugging Face.

Six hundred million.

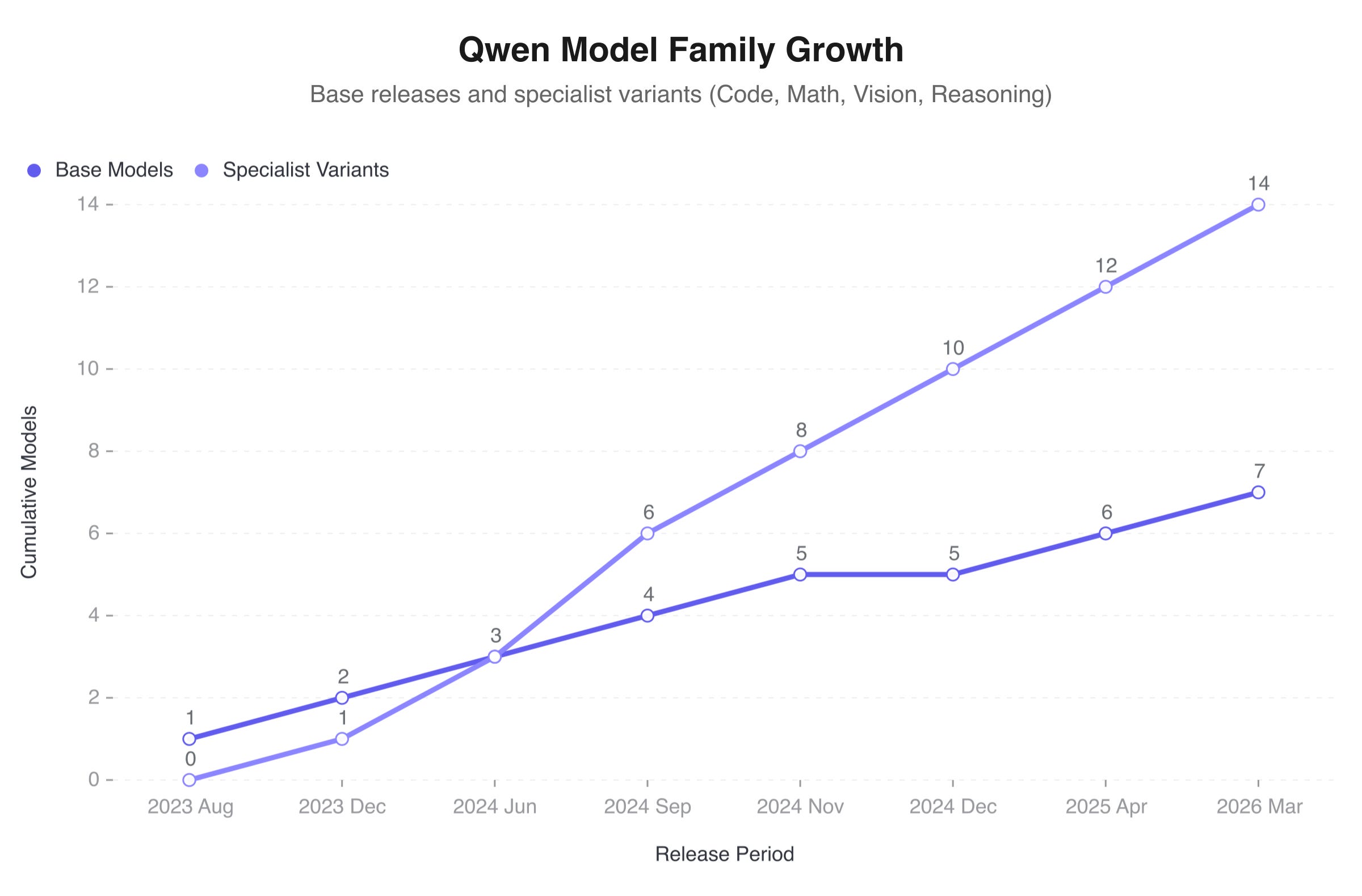

The lineage runs from the original Qwen through 1.5, 2, 2.5, QwQ, QvQ, 3, and now 3.5. Each generation brought specialised variants: code, maths, vision, reasoning. Most are Apache 2.0 licensed, meaning anyone can use them commercially, fine-tune them, and build products on top of them without asking Alibaba’s permission or paying a cent.

That licensing decision made Qwen one of the most important model families in the open-weight ecosystem. Developers building local AI tools, startups that can’t afford API costs, researchers in countries with limited cloud infrastructure: they all leaned on Qwen. The GitHub repositories became a dependency for thousands of projects worldwide.

And the Qwen 3.5 small model series, the final release this team shipped together, was by most accounts their best work in the compact model space. They didn’t leave after a failed release or during a quiet period. They finished the job, put out a release that drew praise from Elon Musk, and resigned within hours.

That’s professional commitment paired with principled departure. It says: we weren’t fired, we weren’t coasting, and we didn’t sabotage anything on the way out. We just aren’t staying.

The restructuring that broke it

So why did they leave?

Alibaba’s management proposed restructuring Tongyi Lab, the AI research group that houses the Qwen team, by splitting its researchers across different business units. Cloud would get some. Commerce would get others. Each division would get its own slice of AI talent, embedded directly into product teams.

If you’ve worked at a large tech company, you’ve seen this movie before. The pitch from leadership always sounds reasonable: move researchers closer to the products, shorten feedback loops, make AI “more integrated” with the business. On a whiteboard, it looks like efficiency.

In practice, it fragments a cohesive research team into isolated pockets serving different bosses with different priorities. The researchers who spent years building shared intuition about model architecture, training dynamics, and post-training techniques suddenly report to product managers who need a chatbot for customer service by Q3.

Centralised research labs produce breakthroughs. Distributed product teams produce features. These are different things, and the people who are good at one often hate doing the other.

Google went through a version of this when it merged Google Brain and DeepMind, a process that produced its own talent exodus and years of cultural friction. Meta has reorganised its AI research groups multiple times, each reshuffle sending ripples of departures across the industry. The pattern repeats every time: you restructure a research team to serve business units, and you lose the research culture that made the team worth restructuring around.

The four departing members saw the restructuring for what it was and chose to leave rather than watch their team get scattered.

Alibaba tried to better integrate AI into its business units and instead lost the people who made the AI worth integrating.

600 million downloads and counting

So what happens now for the thousands of developers building on Qwen?

The models are open-weight. The code exists. The weights are on Hugging Face. Nobody can un-release what has already shipped under Apache 2.0. In that narrow sense, everything is fine.

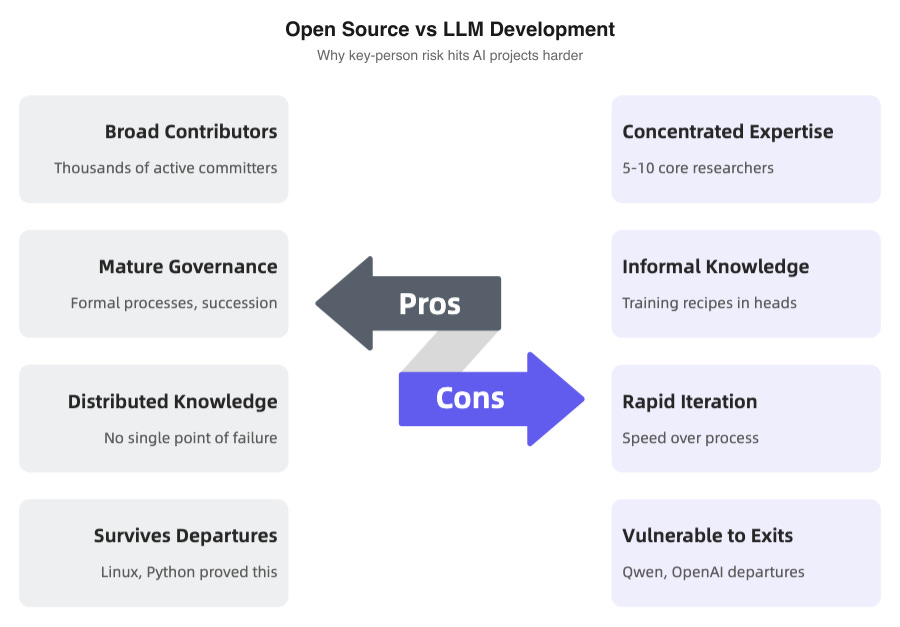

But LLM development isn’t like traditional open-source software development. Linux survived Linus Torvalds stepping back because the kernel had accumulated decades of contributors and institutional processes. Python survived Guido van Rossum’s retirement because its governance structure was mature and its contributor base was enormous.

Large language model development concentrates knowledge in a small number of heads, and geniuses at that. Why does a particular training recipe work? Why does a specific RLHF configuration produce better outputs? Why does the model behave oddly on certain edge cases? That knowledge often lives in the brains of five or ten people. When four of them leave at once, the knowledge walks out with them.

Alibaba still has researchers. Tongyi Lab still exists. Qwen 3.5 is already released and working. But the questions that matter are forward-looking: Can Qwen 4 match this quality? Can post-training hold without Yu Bowen, or the code models keep pace without Binyuan Hui? And will the international developer community still trust a project whose public face just walked away?

Open-source code can survive its creators leaving. The tacit knowledge of how to train frontier AI models is harder to replace than any codebase.

None of these questions have answers yet. But if you’ve bet your product on Qwen’s continued improvement, now’s a good time to start thinking about what your fallback looks like.

Where the talent goes

China’s AI talent war was already brutal before this. It just got worse.

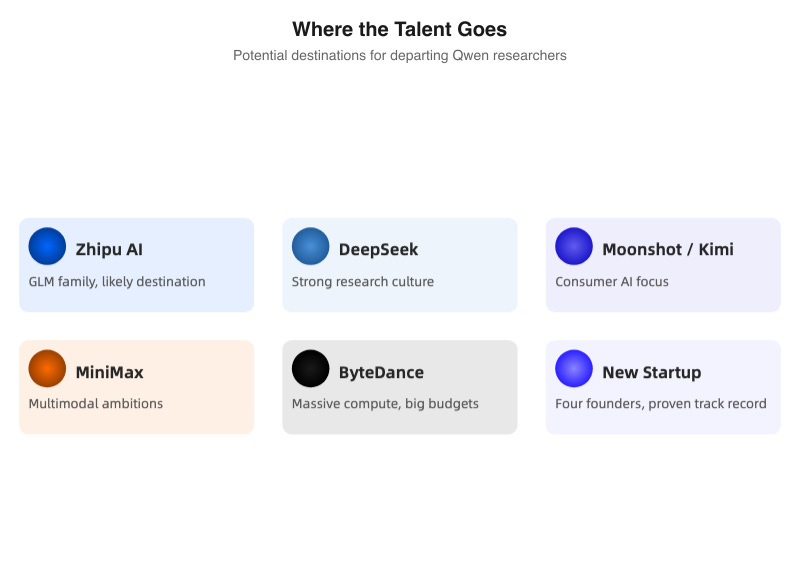

Reports point to Zhipu AI, the Beijing-based company behind the GLM model family, as a likely destination for at least some of the departing researchers. But the field of potential suitors is wide: DeepSeek, Moonshot (Kimi), MiniMax, and ByteDance are all competing for the same thin slice of people who’ve actually trained frontier models.

There’s also the possibility the four start something new together. A team that’s already built one of the world’s most downloaded model families, that has the track record and the technical chops, would have no trouble raising money in the current climate. The “big company to startup” pipeline is well-worn in China’s tech scene, and founding-team-quality ML researchers are rarer than capital.

We’ve seen this before. In 2024, OpenAI went through its own talent exodus. Ilya Sutskever left to start Safe Superintelligence. Jan Leike went to Anthropic. Other senior researchers scattered across a half-dozen startups. OpenAI survived and kept shipping, but the departures reshaped the competitive field in ways we’re still tracking. Each person who left carried knowledge that seeded something new elsewhere.

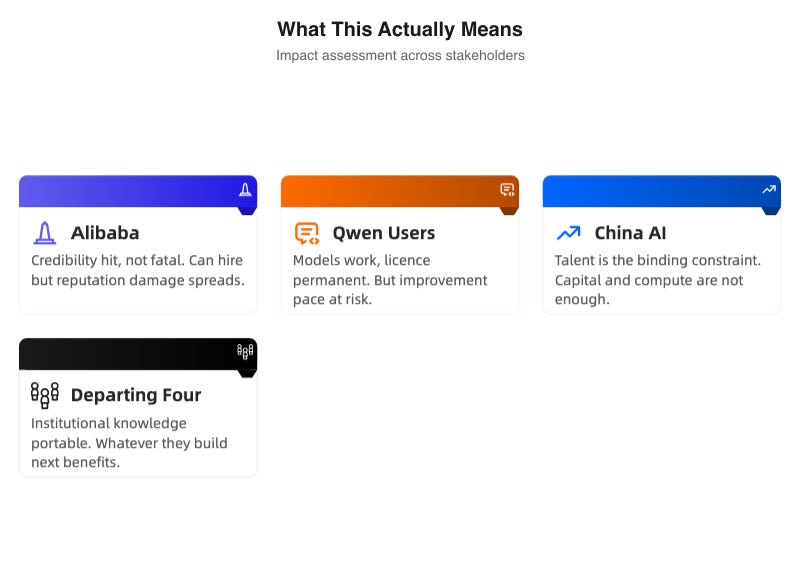

The same dynamic is about to play out in China’s AI ecosystem. Four people carrying years of institutional knowledge about training open-weight models at scale will pour that knowledge into something new. Whatever they build benefits from everything they learned at Alibaba.

Alibaba doesn’t get that exchange in reverse.

The fallout

For Alibaba, this is a credibility hit. Not a fatal one, but real. The company can hire replacements, and it will. But in an industry where top researchers talk to each other, where reputation spreads through conference hallways and WeChat groups, the message is clear: Alibaba prioritised corporate org charts over the team that was winning.

For Qwen users, it’s uncertainty without catastrophe. The models work. The Apache 2.0 licence is permanent. But the pace of improvement may slow, and the subtle quality that comes from deep institutional knowledge (the difference between a model that benchmarks well and a model that feels right to use) is at risk.

For China’s AI scene more broadly, this is another data point in a pattern that’s getting hard to wave away. Capital is abundant. Compute is getting there. But the people who know how to do this work, really know, number in the hundreds. Talent is the binding constraint. It was before March 4. More so now.

Four people walked out of Alibaba within hours of shipping their latest work. They left behind 600 million downloads, models that impressed Elon Musk, and an Apache 2.0 licence that ensures the code survives their departure.

What they took with them is harder to quantify, and even harder to replace.