Perplexity's best move was using everyone else's AI

Perplexity Computer runs Opus, Gemini, Grok, and Codex as specialised workers inside one system. The future of AI might be the conductor, not the instrument

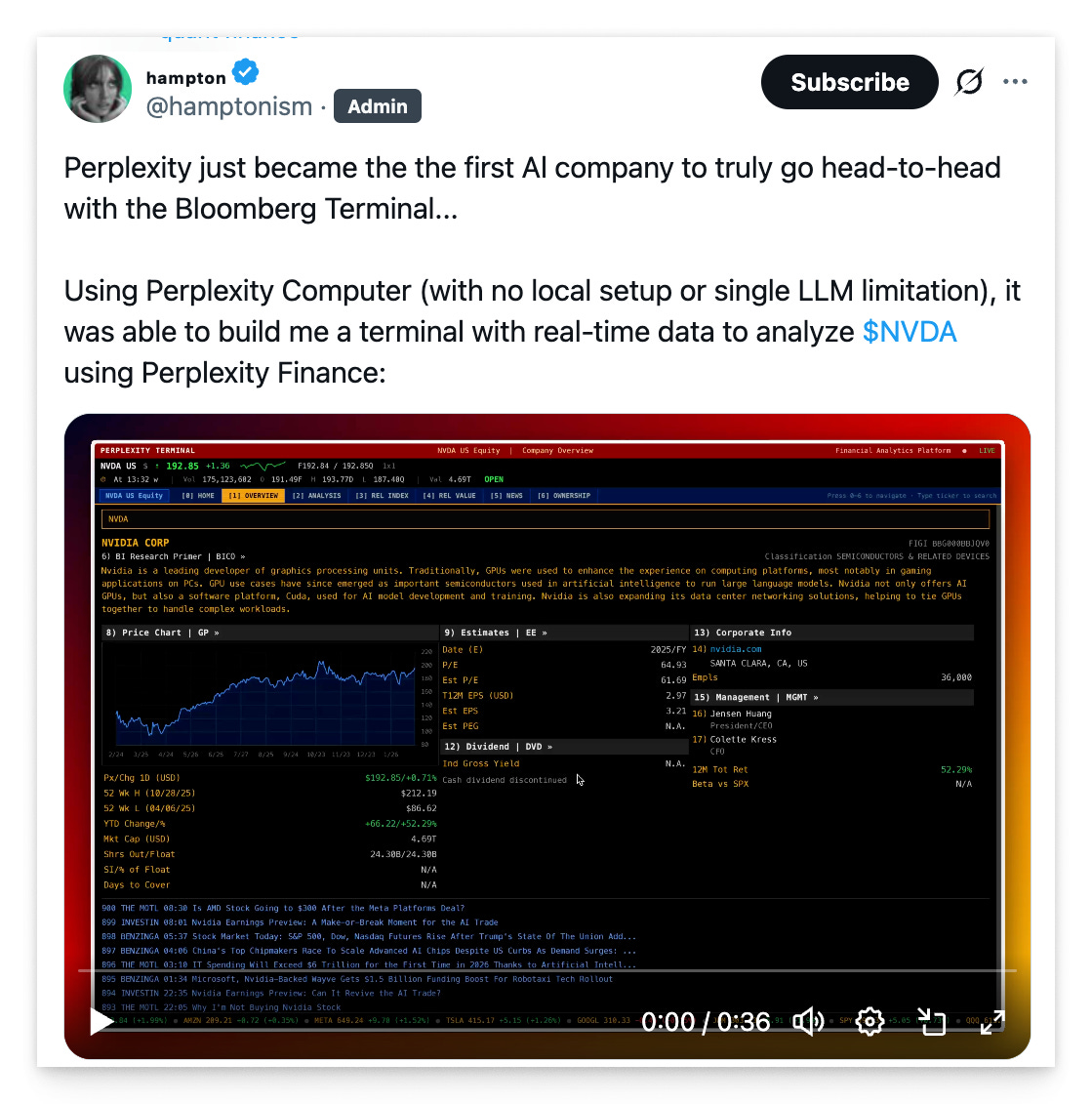

On February 25, a tweet from the quant community got people thinking.

The demo showed Perplexity’s new product building a real-time financial terminal that tracked $NVDA, pulled live data, and rendered interactive charts. The comparison everyone reached for? Bloomberg Terminal.

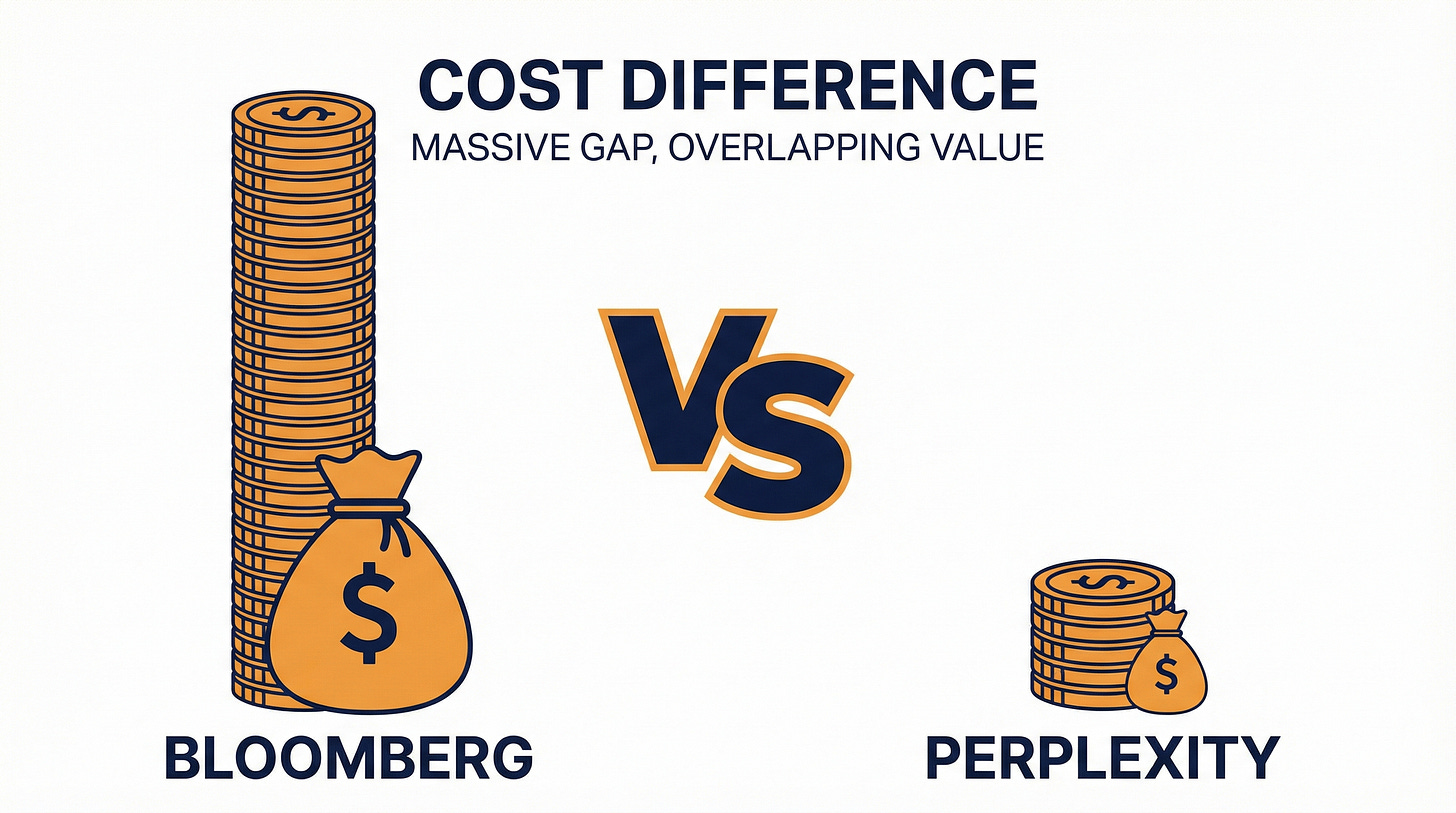

A Bloomberg Terminal costs upwards of $24,000 a year per seat. An estimated 325,000 people worldwide pay for it. Perplexity Computer, the thing in the demo, costs $200 a month as part of Perplexity Max.

Two hundred dollars a month works out to $2,400 a year, a tenth of Bloomberg's price, for the thing that made 9 million people say “close enough.” Check the calendar; it’s not April.

Now, does Perplexity Computer actually replace Bloomberg? No. Bloomberg has decades of proprietary data feeds, compliance tooling, and an entire culture built around those chunky keyboards. But the fact that the comparison felt reasonable to 9 million people tells you something about where the value in software is shifting. The terminal in the demo wasn’t a toy. It pulled real market data, rendered it beautifully, and did it without anyone writing a single line of code.

The sticker shock gets you in the door. The architecture underneath is what’s worth staying for.

What Perplexity Computer actually does

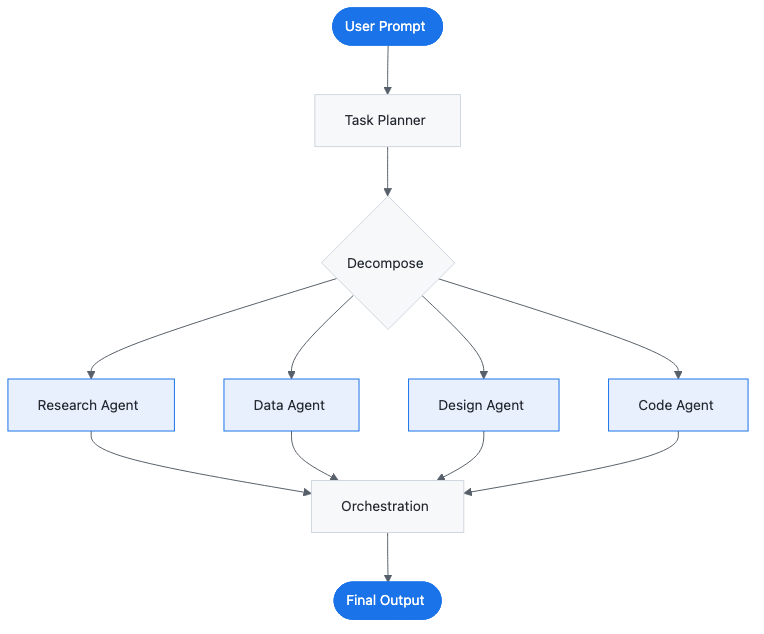

Perplexity Computer isn’t a chatbot. You don’t type a question and get a paragraph back. You describe a goal, and it builds a plan, assigns sub-agents to each step, and runs the whole thing across isolated compute environments with real filesystems, browsers, and tools.

Think of it like hiring a small team. You say “build me a financial dashboard tracking semiconductor stocks,” and Computer breaks that into research, data gathering, design, and assembly. Each sub-agent works independently, in its own sandbox, with access to the web and the ability to write and run code.

The tasks can run for hours. It’s asynchronous by default: you fire off a job, close your laptop, and come back to results.
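The “small team” framing maps onto a familiar plan-then-delegate pattern. Perplexity hasn’t published how Computer works internally, so the Python below is only a minimal sketch under stated assumptions: a planner that splits a goal into steps, sub-agents that each get a fresh workspace, and a final assembly step. The names `plan`, `run_subagent`, and `Sandbox` are hypothetical, the sandbox is a dictionary standing in for an isolated VM or container, and the steps run concurrently for simplicity even though real research, data, and design work would likely depend on one another.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class Step:
    name: str         # e.g. "research", "data", "design"
    instruction: str


@dataclass
class Sandbox:
    # Hypothetical stand-in for an isolated environment:
    # each sub-agent gets its own private scratch "filesystem".
    files: dict[str, str] = field(default_factory=dict)


def plan(goal: str) -> list[Step]:
    # Hypothetical planner; a real system would ask a reasoning model to decompose the goal.
    return [Step(stage, f"{stage} for: {goal}") for stage in ("research", "data", "design")]


async def run_subagent(step: Step) -> tuple[str, str]:
    box = Sandbox()                # fresh, isolated workspace per sub-agent
    await asyncio.sleep(0)         # placeholder for long-running model and tool calls
    box.files["result.txt"] = f"done: {step.instruction}"
    return step.name, box.files["result.txt"]


async def run_goal(goal: str) -> dict[str, str]:
    steps = plan(goal)
    # Sub-agents run concurrently; assembly waits until every one has finished.
    results = dict(await asyncio.gather(*(run_subagent(s) for s in steps)))
    results["assembly"] = " | ".join(results[s.name] for s in steps)
    return results


if __name__ == "__main__":
    out = asyncio.run(run_goal("financial dashboard tracking semiconductor stocks"))
    for name, result in out.items():
        print(f"{name}: {result}")
```

Because `run_goal` is a coroutine, a caller can start it and collect the results later, a loose in-process analogue of the fire-and-forget behaviour Perplexity describes.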

That financial terminal demo? Computer didn’t just fetch stock prices. It researched the relevant metrics for semiconductor analysis, decided on a layout, wrote the frontend code, connected live data sources, and assembled a working application. Several sub-agents, each handling one piece, all coordinated by a layer that decided who did what and when.

All of this ships to Perplexity Max subscribers at $200/month. The price is striking, sure, but the real story is how it works.

Six models, one conductor

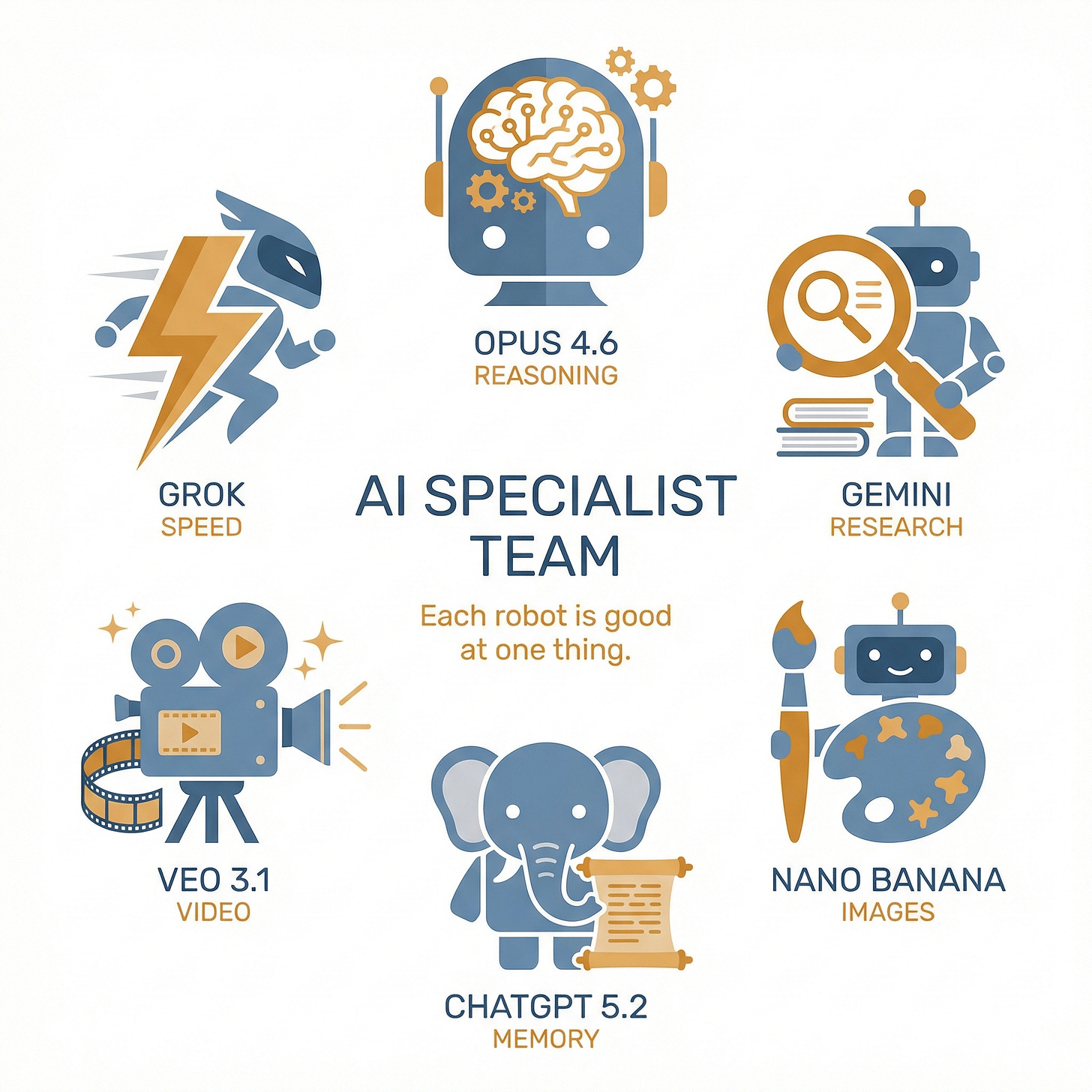

Here’s what’s different about Perplexity Computer. It doesn’t run on one AI model. It runs on six.

Claude Opus 4.6 from Anthropic handles core reasoning. Google’s Gemini does deep research. Nano Banana, also from Google, generates images. Google’s Veo 3.1 produces video. Grok from xAI handles speed-critical lightweight tasks. OpenAI’s Codex 5.2 manages long-context recall.

Six models from four different companies, each assigned to the thing it does best.
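In code, this division of labour can be pictured as a routing table from subtask kind to specialist model. This is a hypothetical sketch: the `TaskKind` categories and the keyword classifier are illustrative inventions, and the strings are the model names as listed in this article, not real API identifiers. A production conductor would more plausibly ask a model to classify each subtask than match keywords.

```python
from enum import Enum


class TaskKind(Enum):
    REASONING = "reasoning"
    RESEARCH = "research"
    IMAGE = "image"
    VIDEO = "video"
    QUICK = "quick"
    LONG_CONTEXT = "long_context"


# Assignments as described in the article; display names, not API model IDs.
ROUTES: dict[TaskKind, str] = {
    TaskKind.REASONING: "Claude Opus 4.6",
    TaskKind.RESEARCH: "Gemini",
    TaskKind.IMAGE: "Nano Banana",
    TaskKind.VIDEO: "Veo 3.1",
    TaskKind.QUICK: "Grok",
    TaskKind.LONG_CONTEXT: "Codex 5.2",
}

# Hypothetical keyword hints, checked in insertion order.
KEYWORDS: dict[TaskKind, tuple[str, ...]] = {
    TaskKind.VIDEO: ("video", "animation"),
    TaskKind.IMAGE: ("image", "illustration", "logo"),
    TaskKind.RESEARCH: ("research", "sources", "cite"),
    TaskKind.LONG_CONTEXT: ("entire codebase", "whole document", "long transcript"),
    TaskKind.QUICK: ("quick", "summarise", "summarize"),
}


def classify(description: str) -> TaskKind:
    # Toy heuristic: the first matching keyword wins.
    text = description.lower()
    for kind, words in KEYWORDS.items():
        if any(w in text for w in words):
            return kind
    return TaskKind.REASONING  # default: unclassified work goes to the reasoning model


def route(kind: TaskKind) -> str:
    """Return the specialist model assigned to this kind of subtask."""
    return ROUTES[kind]


if __name__ == "__main__":
    for task in ("Research semiconductor metrics", "Make a short video of the dashboard", "Plan the layout"):
        print(f"{task!r} -> {route(classify(task))}")
```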

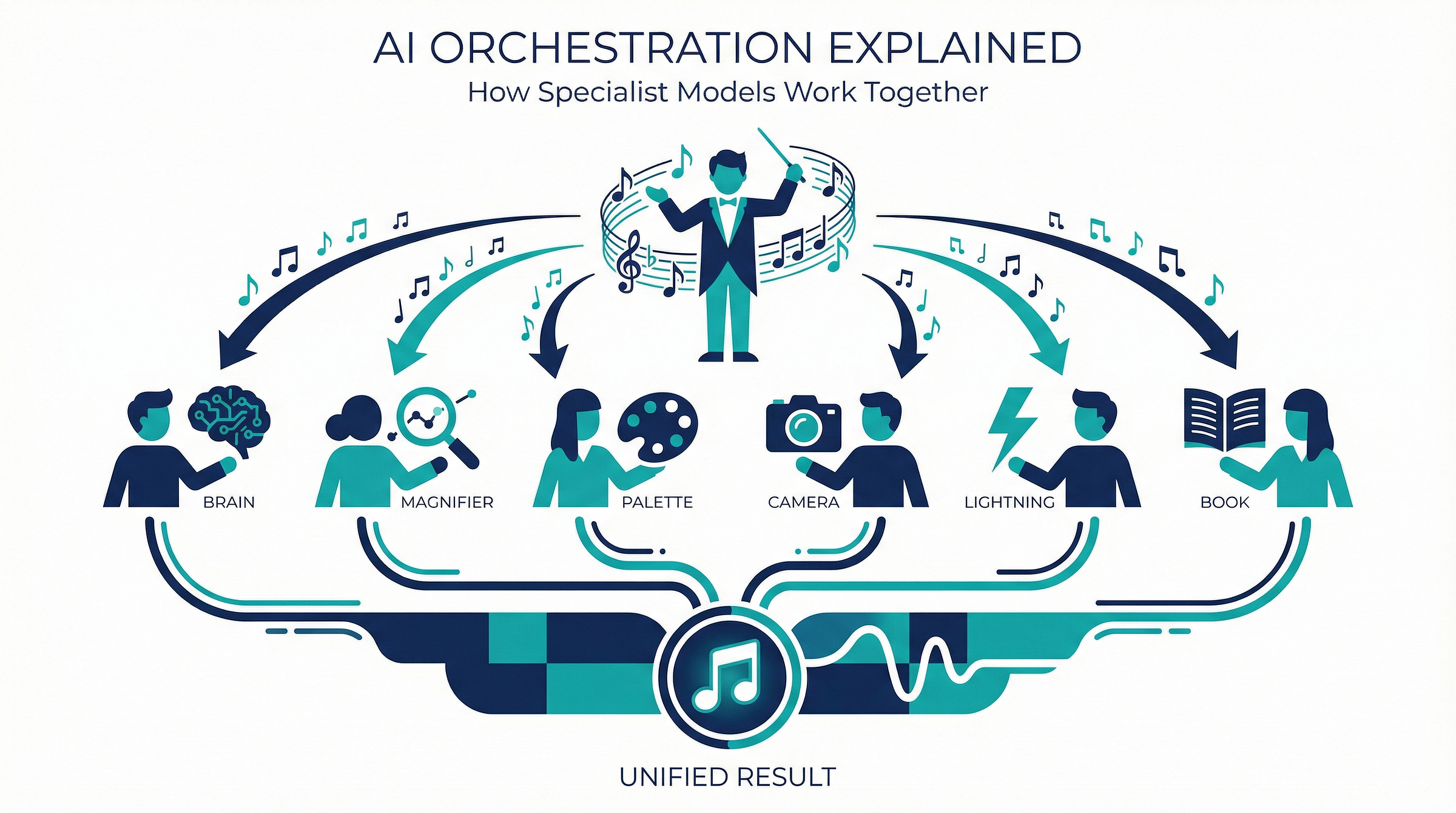

The thesis that makes Perplexity interesting: no single AI model excels at everything, and the future belongs to whoever builds the best conductor.

Think about it like an orchestra. The London Philharmonic doesn’t manufacture its own violins. It doesn’t need to. Its value comes from knowing which instruments to bring in, when, and how to make them work together. Its violinists may play instruments made by Stradivari; its oboists, instruments made by Lorée.

The Philharmonic’s job is coordination.

Perplexity is making the same wager with AI models. Anthropic builds great reasoning models. Google builds great research models. xAI builds fast models. Rather than try to beat all of them at their own specialities, Perplexity built a coordination layer that uses all of them.

“Who has the best model” is last year’s question. The new one: who builds the best system around the models?

The architecture is model-agnostic by design. Users can choose which model handles which subtask. When a new model from any provider outperforms the current one for a given task, Perplexity swaps it in. Your workflows get better without you doing anything.
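Model-agnosticism mostly comes down to one indirection: workflows refer to a task, not to a model, and a registry decides which model currently fills that slot. The sketch below assumes a hypothetical `Registry` that swaps in a challenger only when it scores higher on some internal evaluation; the model names and scores are placeholders, not real benchmark results, and nothing here reflects Perplexity’s actual implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Registry:
    # task -> (model name, evaluation score); scores come from whatever internal eval you trust
    best: dict[str, tuple[str, float]] = field(default_factory=dict)

    def propose(self, task: str, model: str, score: float) -> bool:
        """Swap in `model` for `task` if it beats the incumbent's score. Returns True on a swap."""
        current = self.best.get(task)
        if current is None or score > current[1]:
            self.best[task] = (model, score)
            return True
        return False

    def model_for(self, task: str) -> str:
        return self.best[task][0]


if __name__ == "__main__":
    reg = Registry()
    reg.propose("research", "model-a", 0.71)

    def workflow() -> str:
        # The workflow names a task, never a specific model.
        return f"{reg.model_for('research')} handles: compare NVDA and AMD margins"

    print(workflow())                          # served by model-a
    reg.propose("research", "model-b", 0.78)   # a stronger challenger appears
    print(workflow())                          # same workflow, now served by model-b
```

The workflow function never changes; only the registry entry does, which is how a swap can improve results without the user touching anything.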

The big model labs are building increasingly powerful instruments. Perplexity is building the operating system that runs them all. And operating systems, historically, tend to capture more value than any single application.

From search engine to something else entirely

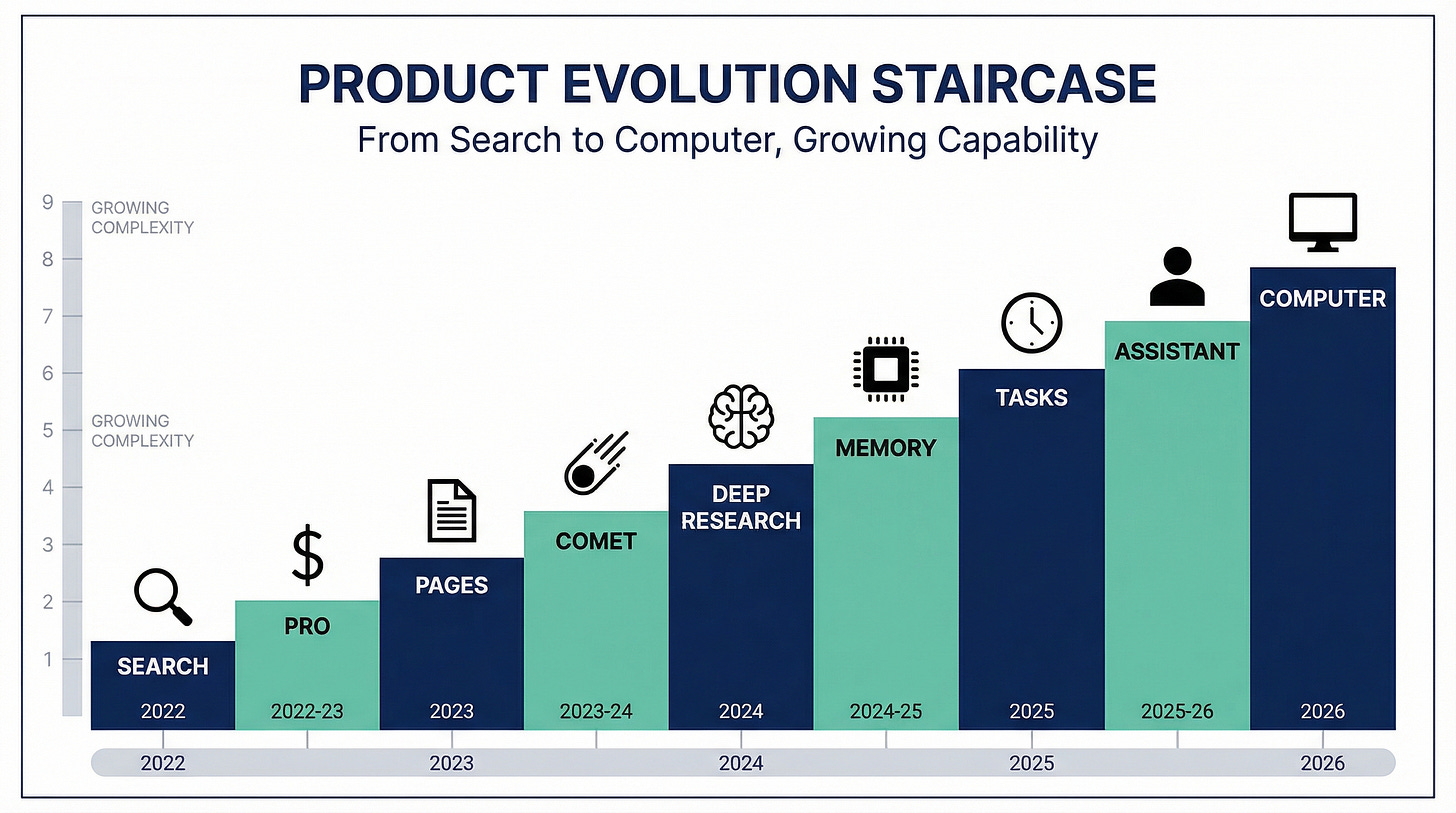

Perplexity didn’t start here. It started as a search engine.

Co-founded in 2022 by Aravind Srinivas, the company launched as an AI-powered alternative to Google Search. You’d ask a question, and instead of ten blue links, you’d get a synthesised answer with citations. Clean, useful, and for a lot of queries, obviously better.

But Aravind wasn’t building a search engine. He was building a stack.

Each product was a building block. Search taught the team retrieval and citation. The Pro subscription ($20/month) proved users would pay for AI-powered answers. Comet taught them browser-native AI. Comet Assistant taught them agentic browser control. Deep Research taught them multi-step reasoning. Memory taught them user context. Tasks taught them scheduling and automation.

Computer is the synthesis: everything the company learned about retrieval, browser control, multi-step reasoning, and user context, assembled into a single product that can do sustained, complex work.

The company hit a $9 billion valuation in late 2024, climbing to $20 billion by September 2025, with backers including Jeff Bezos and NVIDIA. They started as “the Google competitor.” A few years later, they’re picking fights with OpenAI, Anthropic, Google, and Microsoft all at once.

That’s either brilliant positioning or a death wish. Possibly both.

Everyone’s building agents, but differently

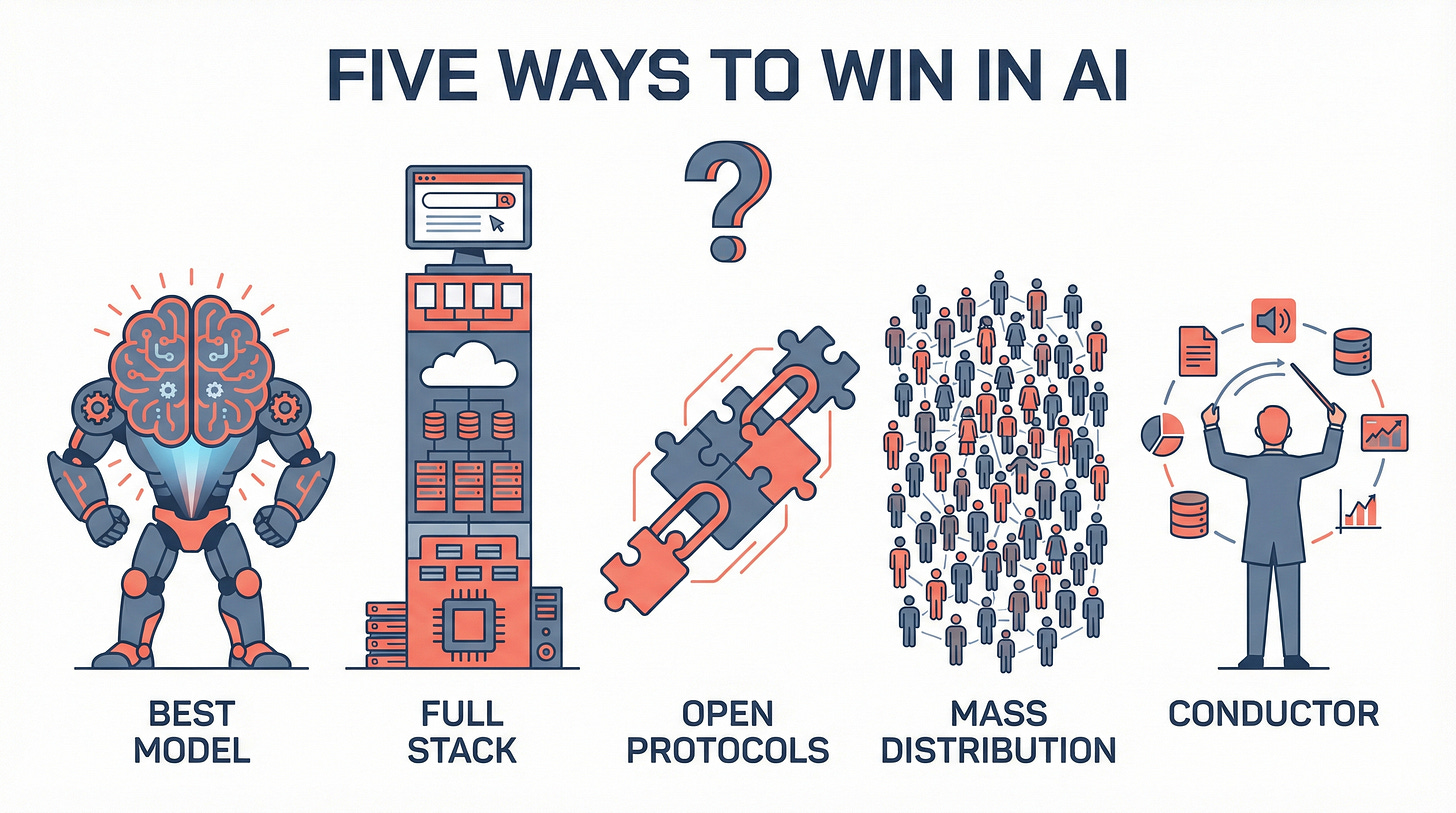

Perplexity isn’t alone in building AI agents. Every major lab has one. But the architectural choices differ in ways that’ll matter a great deal over the next few years.

OpenAI built Operator, a browser-based agent that lives inside the ChatGPT ecosystem. Their approach is vertical: one model family, one platform, one experience. If GPT gets good enough at everything, you don’t need six models. You need one really, really good one.

Google has Gemini agents, Project Mariner for browser control, and Jules for coding. Google’s play is integration: they own the models, they own Search, they own Chrome, they own Android. Why coordinate third-party models when you can own the entire stack from silicon to screen?

Anthropic went a different direction entirely. They built Claude Code (a CLI agent), computer use APIs, and the Model Context Protocol (MCP), an open standard that lets AI models talk to tools and data sources. Their angle is protocols: control the connective tissue between AI and the rest of the computing world, and it doesn’t matter whose model sits on top.

Then there’s Microsoft. Copilot agents across Office 365, Azure AI Agent Service for enterprises. Three hundred million Office users already have Microsoft’s AI in their toolbar. If you’re already everywhere, you just need to be good enough.

Each company is answering the same question differently: where does the value in AI actually accumulate? In the model, the platform, the protocol, or the coordination layer?

Perplexity’s stance is the most counterintuitive of the five. They’re saying, explicitly, that the model layer will keep improving and they don’t need to own it. They need to own the layer above it. The conductor.

Nobody knows which approach wins. But a $20 billion company choosing to build on top of its competitors’ models rather than competing with them head-on? That’s a genuinely unusual strategic choice.

“Computer” was always a job title

The word “computer” didn’t always refer to a machine. It referred to a person.

In 1757, the French mathematician Alexis Clairaut needed to predict the return of Halley’s Comet. The calculations were staggeringly complex for the era, far beyond what one person could complete in time. So Clairaut partnered with two human “computers”: Joseph Lalande, an astronomer, and Nicole-Reine Lepaute, a mathematician. Lalande handled one set of orbital calculations, Lepaute handled another, and Clairaut coordinated the effort. The prediction missed the comet’s closest approach to the Sun by about a month, and Clairaut later left Lepaute’s name out of his published account, but that’s not the point of our comparison.

Three specialists, each assigned to what they were best at, led by someone who understood the whole problem.

Perplexity Computer might be the most literal use of the word since 1757. Six AI models, each a specialist, working together to solve problems no single one could handle alone.

The question for the next few years won’t be which AI model is smartest. It’ll be who builds the best team.

I write about AI strategy regularly. If this kind of analysis is useful to you, consider following so you don't miss the next one.