Claude before Claude: How a juggling, unicycling mathematician invented the information age

He invented the bit, befriended Turing, beat roulette, and built a machine whose only purpose was to turn itself off. His intellectual DNA may also run through the AI that shares his name.

The machine that turns itself off

In Claude Shannon’s house in Winchester, Massachusetts, there sat a small wooden box with a single toggle switch on its lid. Flip the switch, and the box would whir to life. The lid would crack open. A mechanical hand would emerge, reach over, and flick the switch back off.

Then the hand would retreat.

The lid would close.

Silence.

That was it. That was the entire function of the machine.

Shannon called it the “Ultimate Machine.” The concept came from Marvin Minsky, the future co-founder of MIT’s AI lab, who had sketched the idea but never bothered building it. Shannon, who could never resist a good gadget, built it. Arthur C. Clarke, the science fiction writer who proposed the geostationary communications satellite and co-wrote 2001: A Space Odyssey, saw the device and called it “unspeakably sinister.”

He wasn’t entirely joking.

There’s something about a machine whose sole purpose is to negate your action, to refuse the premise of its own existence, that crawls under your skin. You turn it on. It turns itself off.

You become locked in a loop with an object that wants nothing from the world except to be left alone.

Clarke reportedly couldn’t stop playing with it. Neither could anyone else who encountered the thing. Shannon kept several versions around the house, including one he liked to bring to dinner parties.

The Ultimate Machine is the perfect entry point to Claude Elwood Shannon because it contains all of his contradictions in a single wooden box.

Here was a man who produced one of the most important scientific papers of the twentieth century and spent roughly equal creative energy building a gasoline-powered pogo stick. A man who laid the mathematical groundwork for every smartphone, every Wi-Fi signal, every streaming service, every piece of digital communication on earth, and who also built a flame-throwing trumpet. A man whose work is baked into the infrastructure of modern civilisation so thoroughly that most people have never heard his name.

The most important scientist most people can’t name was also, by every available account, the funniest.

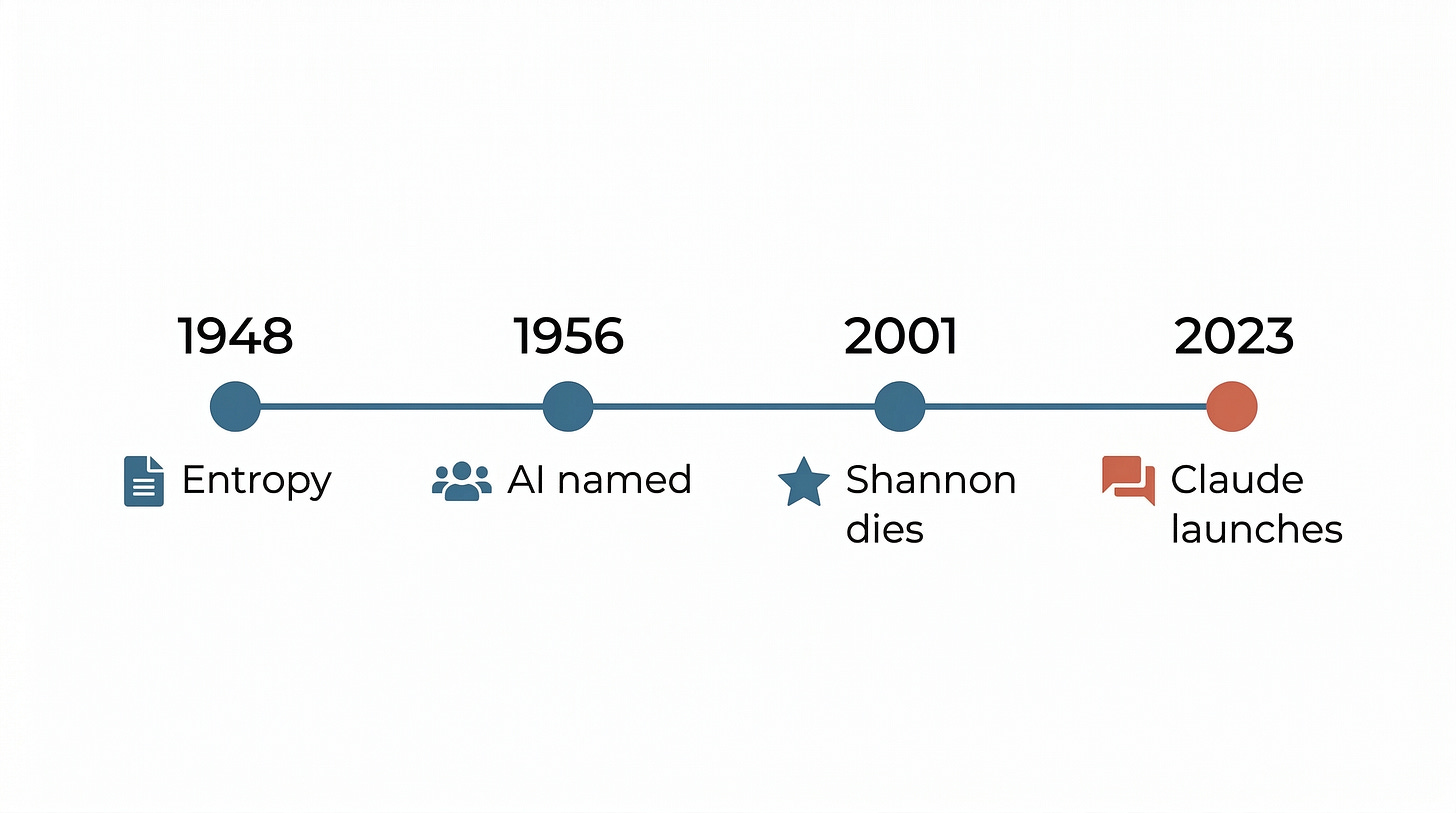

Shannon published “A Mathematical Theory of Communication” in 1948. With it, he created an entire scientific discipline from scratch, information theory, and gave the world the “bit,” the fundamental unit of the digital age. He proved theorems that told engineers exactly how much information could be squeezed through a noisy channel.

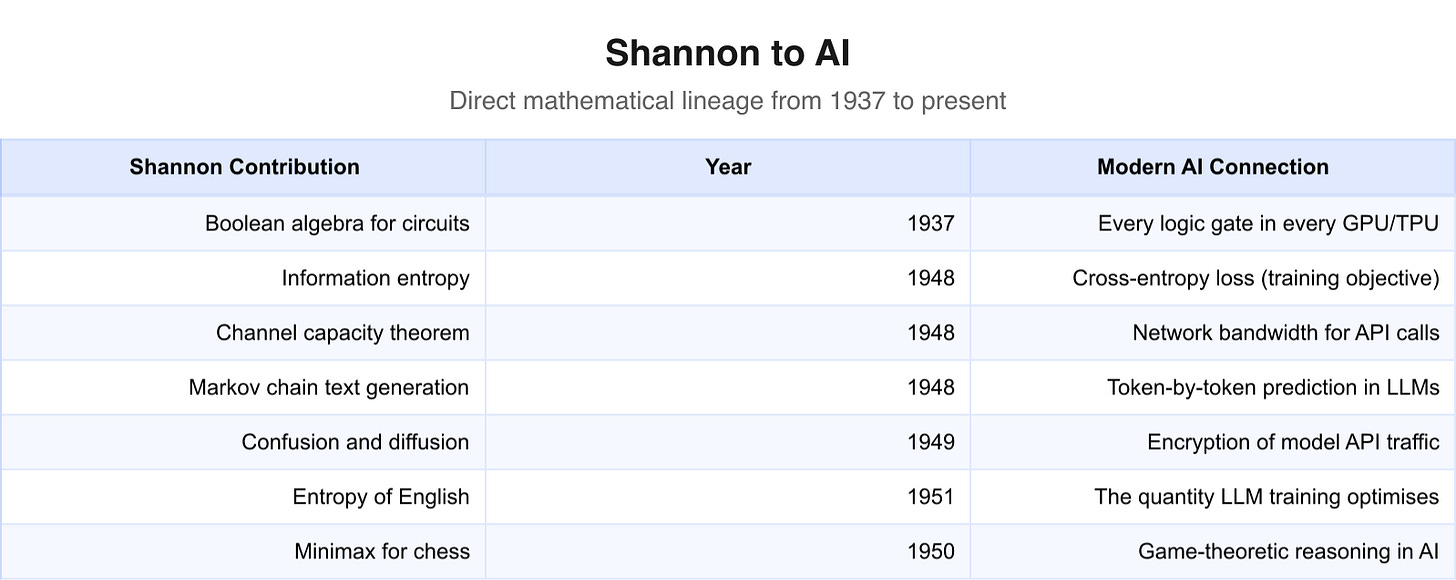

He connected Boolean algebra to electrical circuits, making the digital computer possible. He helped encrypt the phone calls between Roosevelt and Churchill during the Second World War. He built one of the earliest chess-playing programs and one of the first maze-solving robots. He co-organised the 1956 Dartmouth Workshop, the conference that gave artificial intelligence its name.

And he rode a unicycle through the hallways of Bell Labs while juggling three balls.

Shannon died in 2001, at eighty-four. By then the internet had remade the world along lines he had mathematically predicted were possible. But Alzheimer’s had taken him years earlier.

He never saw what his ideas became, and he’d probably be aghast and amused at the same time if he were still living.

Twenty-two years after his death, a company called Anthropic launched an AI assistant and named it Claude.

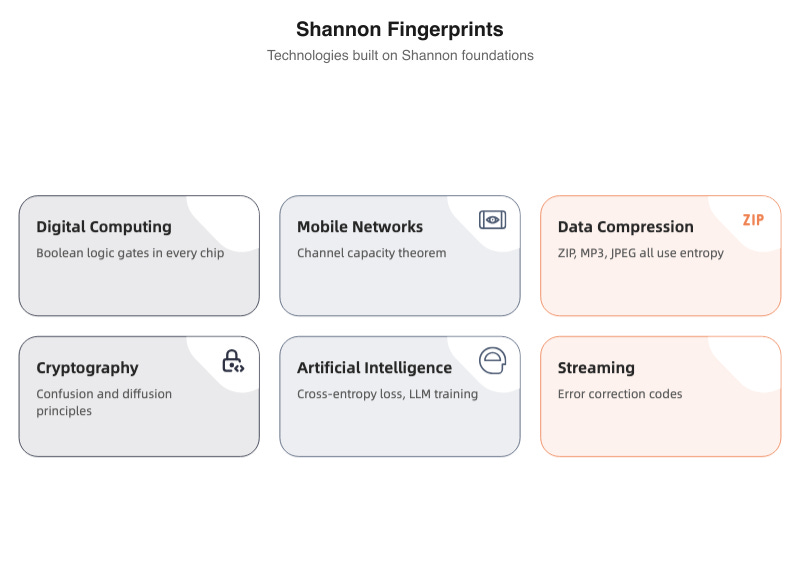

Whether the name honours Shannon specifically remains, as we’ll see, a matter of company lore rather than official confirmation. But the intellectual lineage is a different question entirely. Shannon’s entropy is in the loss function that trains the model. His Boolean algebra is in every transistor of the hardware that runs it. His theory of communication is the mathematical language the system operates in.

The machine that turns itself off. The ideas that never do.

The boy who wired Gaylord, Michigan

Claude Elwood Shannon was born on 30 April 1916 in Petoskey, Michigan, a small town on the shores of Lake Michigan. He grew up in Gaylord, about fifty kilometres south, population roughly three thousand. His father, also named Claude, was a probate judge and businessman. His mother, Mabel Wolf Shannon, was a high school principal and a language teacher. Neither was a scientist. Both were practical, educated midwesterners who valued competence over show.

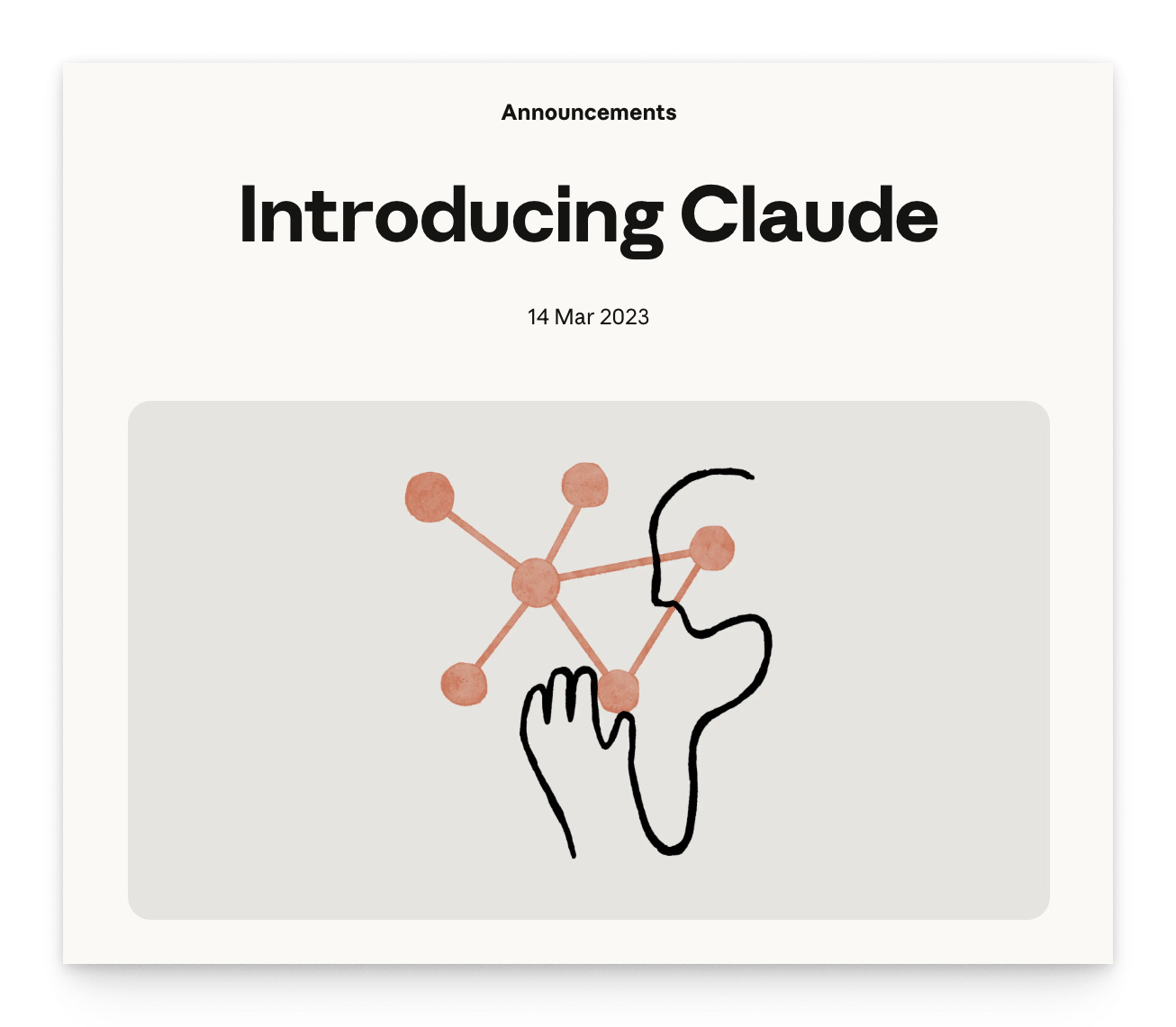

Young Claude took to the “competence” part immediately. He spent his childhood taking things apart. Radios, mostly. He’d fix them for a local department store, earning pocket money by doing work that most adults in Gaylord couldn’t manage. He became a Western Union messenger boy, delivering telegrams on his bicycle and absorbing, almost by osmosis, the mechanics of electrical communication.

The telegraph obsessed him. At some point during his childhood, Shannon and a neighbourhood friend strung a barbed-wire telegraph line between their two houses, half a mile apart. They used the barbed wire from the fences that lined the farmers’ fields between them. It worked. Two kids in rural Michigan, tapping out messages across a jury-rigged wire held up by fence posts, years before either of them could have explained the physics of what they were doing.

His childhood hero was Thomas Edison. Much later, Shannon discovered this was more than idle admiration: he and Edison were both descended from John Ogden (1609-1682), a colonial settler, making them distant cousins.

Shannon loved the connection. Edison was the great American tinkerer, the man who turned invention into an industrial process. Shannon would become something different: a theorist who tinkered, or a tinkerer who theorised. The Edison kinship suited both halves of his personality.

He was also a reader. Edgar Allan Poe’s short story “The Gold Bug,” a tale of code-breaking and hidden treasure, captivated him as a boy. Poe’s story is about a man who decodes a substitution cipher to find Captain Kidd’s buried gold. It’s about the thrill of extracting a message from apparent nonsense, of finding signal in noise. Shannon would spend his professional life doing exactly this, except he’d do it with mathematics instead of a magnifying glass.

Shannon’s childhood contained the blueprint for everything that followed: wires, codes, puzzles, and an instinct for building things that worked.

In 1932, at sixteen, Shannon enrolled at the University of Michigan. He studied mathematics and electrical engineering side by side, earning bachelor’s degrees in both in 1936.

The combination was unusual. Engineers built things. Mathematicians proved things. Shannon refused to pick a side, a refusal that would turn out to be one of the most productive intellectual decisions of the twentieth century.

After Michigan, he went to the Massachusetts Institute of Technology as a research assistant. His supervisor was Vannevar Bush, one of the most influential scientists in America, who would later direct the Office of Scientific Research and Development during the Second World War and write the famous essay “As We May Think.” Bush was building a machine called the differential analyser2, an analogue computer that could solve differential equations using a system of gears, shafts, and rotating discs. It was the most advanced computing device of its era, and it was entirely mechanical.

Shannon’s job was to maintain and operate the analyser. The work put him face-to-face with the practical reality of computation: the gears that slipped, the shafts that stuck, the relay circuits that controlled the whole assembly.

He was twenty years old, working with the most complex machine on the planet, and he started to notice something about those relay circuits that nobody else had formalised.

The circuits were either open or closed.

On or off. True or false.

Can you see where this is going?

He’d seen this somewhere before. In his maths classes.

The most important master’s thesis of the twentieth century

In 1937, at twenty-one years old, Claude Shannon submitted his master’s thesis at MIT. It was called “A Symbolic Analysis of Relay and Switching Circuits.” It was ninety pages long.

It changed the world.

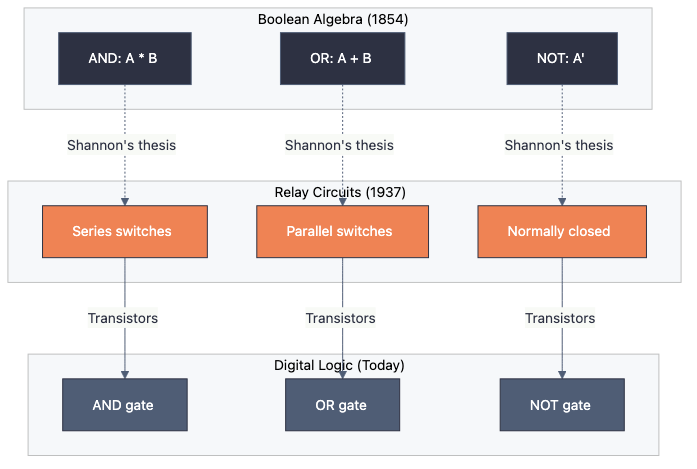

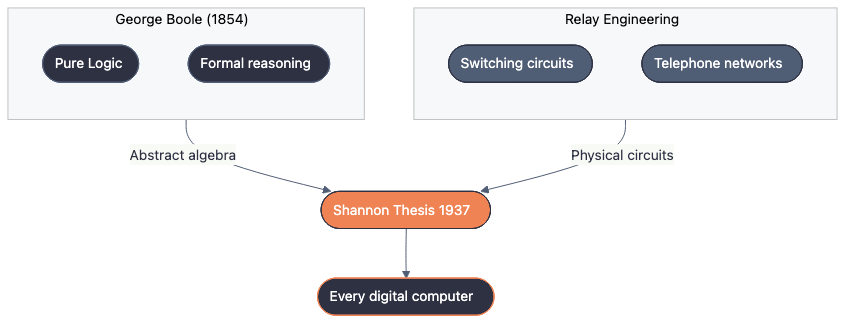

The insight was this: the on-off behaviour of electrical relay circuits could be described using Boolean algebra, the system of logic developed by George Boole in the 1850s that dealt in binary values, true and false. Shannon showed that Boole’s logical operators (AND, OR, NOT) mapped directly onto the physical behaviour of electrical switches arranged in series and parallel. A circuit with two switches in series performed a logical AND: both had to be closed for current to flow. A circuit with two switches in parallel performed a logical OR: either one could be closed. A normally-closed switch performed a NOT.
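The mapping described above can be sketched in a few lines of Python. This is only an illustration, not Shannon’s own notation: the function names are invented here, and the code assumes `True` means a closed switch (current flows) purely for readability.

```python
from itertools import product

def series(a: bool, b: bool) -> bool:
    """Two switches in series: current flows only if both are closed (AND)."""
    return a and b

def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel: current flows if either is closed (OR)."""
    return a or b

def normally_closed(a: bool) -> bool:
    """A normally-closed switch conducts until it is activated (NOT)."""
    return not a

# Print the full truth table: every combination of two switch positions.
print("A      B      series parallel not-A")
for a, b in product([False, True], repeat=2):
    print(f"{a!s:<6} {b!s:<6} {series(a, b)!s:<6} {parallel(a, b)!s:<8} {normally_closed(a)!s}")
```

Reading the table row by row shows the series column matching AND, the parallel column matching OR, and the last column inverting A.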

This sounds simple now. In 1937, it was a thunderclap.

Before Shannon’s thesis, engineers designed switching circuits by intuition and trial and error. They drew diagrams. They tested combinations. They relied on experience and guesswork.

There was no systematic method for designing a circuit to perform a specific logical function, and no way to prove that a design was optimal. Shannon provided both. He showed that any logical expression written in Boolean algebra could be directly translated into a circuit, and that the rules of Boolean algebra could simplify circuits, reducing the number of switches needed.
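To make the simplification claim concrete, here is a small sketch that checks one textbook identity by brute force. The two circuits and the helper name `equivalent` are illustrative assumptions, not examples taken from the thesis: the first circuit needs four switch contacts, the second only three, and Boolean algebra (the distributive law) says they behave identically.

```python
from itertools import product

def original(a: bool, b: bool, c: bool) -> bool:
    # (A AND B) OR (A AND C): four switch contacts
    return (a and b) or (a and c)

def simplified(a: bool, b: bool, c: bool) -> bool:
    # A AND (B OR C): three switch contacts, same behaviour
    return a and (b or c)

def equivalent(f, g, n_inputs: int) -> bool:
    """Check that two circuits agree for every combination of switch positions."""
    return all(
        f(*bits) == g(*bits)
        for bits in product([False, True], repeat=n_inputs)
    )

print(equivalent(original, simplified, 3))  # True
```

Exhaustive checking only works for a handful of switches. The point of the algebra is that the same conclusion follows from the rules without trying every case.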

The psychologist Howard Gardner called it “possibly the most important, and also the most famous, master’s thesis of the century.”

Herman Goldstine, the mathematician who helped build ENIAC, one of the first electronic general-purpose computers, was equally direct. He described Shannon’s thesis as “surely one of the most important master’s theses ever written,” noting that it “helped to change digital circuit design from an art to a science.”

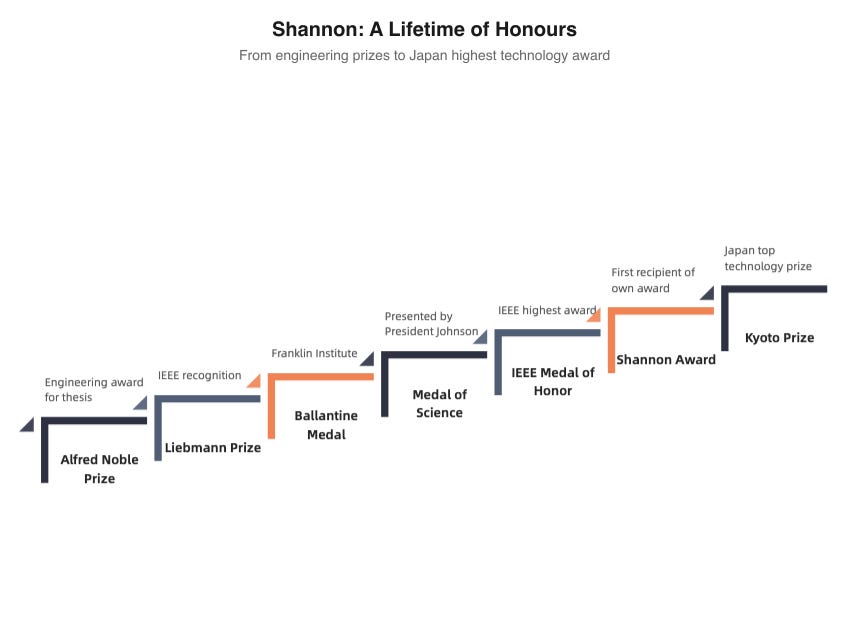

The thesis won Shannon the Alfred Noble Prize, an engineering award given jointly by several American engineering societies. (Not to be confused with the Nobel Prize, which has no engineering category. But in engineering circles, the Alfred Noble Prize was serious recognition for a student barely old enough to drink.)

What made the thesis so powerful wasn’t just the insight itself but its generality.

Shannon hadn’t solved a specific engineering problem. He’d built a bridge between two entire fields (abstract logic and physical engineering) that had previously existed in total isolation from each other.

Boole had developed his algebra as a system of pure thought, a way to formalise human reasoning. He never imagined it would control electrical circuits.

And the engineers who designed telephone switching networks had never imagined that a branch of nineteenth-century philosophy could tell them how to arrange their relays.

Shannon saw the connection that both communities had missed and capitalised on it.

Every digital device you have ever used, every computer, every phone, every tablet, every smart speaker, every car with a microchip, runs on Boolean logic gates. The processors inside them are built from billions of transistors arranged to perform AND, OR, and NOT operations, exactly the operations Shannon described in 1937. The chain runs in a straight, unbroken line from a twenty-one-year-old’s master’s thesis to the device you’re reading this on.

Shannon wasn’t done at MIT. After completing his master’s in electrical engineering, he stayed on and earned a doctorate in mathematics in 1940. His PhD thesis, “An Algebra for Theoretical Genetics,” applied Boolean algebra to problems in population genetics. A completely different field.

Shannon had written two major theses in two different disciplines within three years.

The genetics thesis was solid work, but it didn’t reshape a field the way the switching circuits paper did. Still, the fact that Shannon produced it at all reveals something about how he thought. He wasn’t an engineer with a knack for maths, or a mathematician who liked circuits. He was something rarer: a mind that operated at the level of structure, spotting abstract patterns that connected apparently unrelated domains. Boolean algebra could describe circuits. Boolean algebra could describe genetics. The algebra was the thing. The domain was almost incidental.

He left MIT in 1940 with two graduate degrees, one in electrical engineering and one in mathematics, and a growing reputation as someone the scientific establishment should keep an eye on.

They would.

Bell Labs, the war, and tea with Turing

Shannon joined Bell Telephone Laboratories in 1941, just as America was entering the Second World War. Bell Labs, in Murray Hill, New Jersey, was the research arm of AT&T and arguably the most productive research institution in the history of science. Over the decades, its researchers would invent the transistor, the laser, the solar cell, the charge-coupled device, Unix, the C programming language, and information theory itself. Seven Nobel Prizes would eventually go to Bell Labs scientists.

Shannon fit right in.

His wartime work was classified. He contributed to fire-control systems for anti-aircraft guns, the mathematical machinery that helped gunners lead their targets through the sky. More importantly, he worked on cryptography.

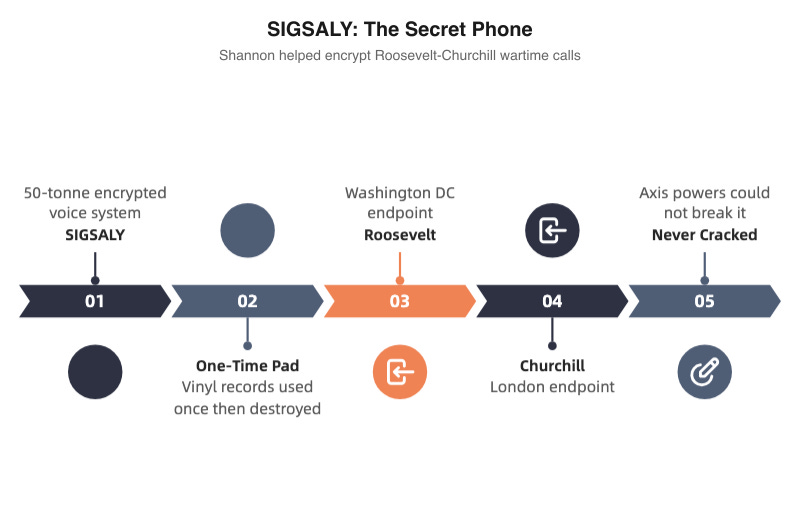

Shannon contributed to SIGSALY3, the system that encrypted voice communications between Franklin Roosevelt in Washington and Winston Churchill in London. SIGSALY was a monster: it weighed over fifty tonnes, filled an entire room, and used a one-time pad system encoded on vinyl records.

But it worked. The Axis powers never cracked it.

Shannon’s thinking about cryptography went far deeper than the engineering. In 1945, he wrote a classified paper called “A Mathematical Theory of Cryptography.” When it was declassified and published in 1949 as “Communication Theory of Secrecy Systems,” it became the founding document of mathematical cryptography. In it, Shannon proved that the one-time pad (a cipher where the key is as long as the message and used only once) is theoretically unbreakable. No amount of computing power, no cleverness of attack, could crack it, as long as the key was truly random and never reused.
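A toy version of the one-time pad shows why the result holds. This sketch makes several assumptions that are not from Shannon’s paper: it works on bytes, combines message and key with XOR (addition modulo 2), and draws the key from Python’s `secrets` module, which is a cryptographically strong generator rather than a source of true randomness.

```python
import secrets

def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each message byte with the matching key byte."""
    if len(key) != len(data):
        raise ValueError("a one-time pad key must be exactly as long as the message")
    return bytes(d ^ k for d, k in zip(data, key))

message = b"ATTACK AT DAWN"
key = secrets.token_bytes(len(message))  # fresh random key, to be used once only
ciphertext = xor_bytes(message, key)

# Applying the same key again undoes the encryption.
assert xor_bytes(ciphertext, key) == message

# For ANY other message of the same length there is a key that "decrypts"
# the ciphertext to it, so the ciphertext alone cannot single out the real one.
decoy = b"RETREAT AT TEN"
decoy_key = xor_bytes(ciphertext, decoy)
assert xor_bytes(ciphertext, decoy_key) == decoy
```

The last three lines are the heart of it: without the key, every plaintext of the right length is equally consistent with what an eavesdropper sees.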

He also introduced two concepts that would echo through cryptography for the next eighty years: confusion and diffusion. Confusion means making the relationship between the key and the ciphertext as complex as possible. Diffusion means spreading the influence of each plaintext character across many ciphertext characters, so that changing one letter of the message changes many letters of the encrypted output. These two principles became the theoretical backbone of the Data Encryption Standard (DES) and the Advanced Encryption Standard (AES), the ciphers that protect your bank transactions and your encrypted messages today.

Shannon has been called the “founding father of modern cryptography,” and the title is earned. His 1949 paper is to cryptography what his 1948 paper is to information theory: the moment a craft became a science.

Then there’s the Turing connection.

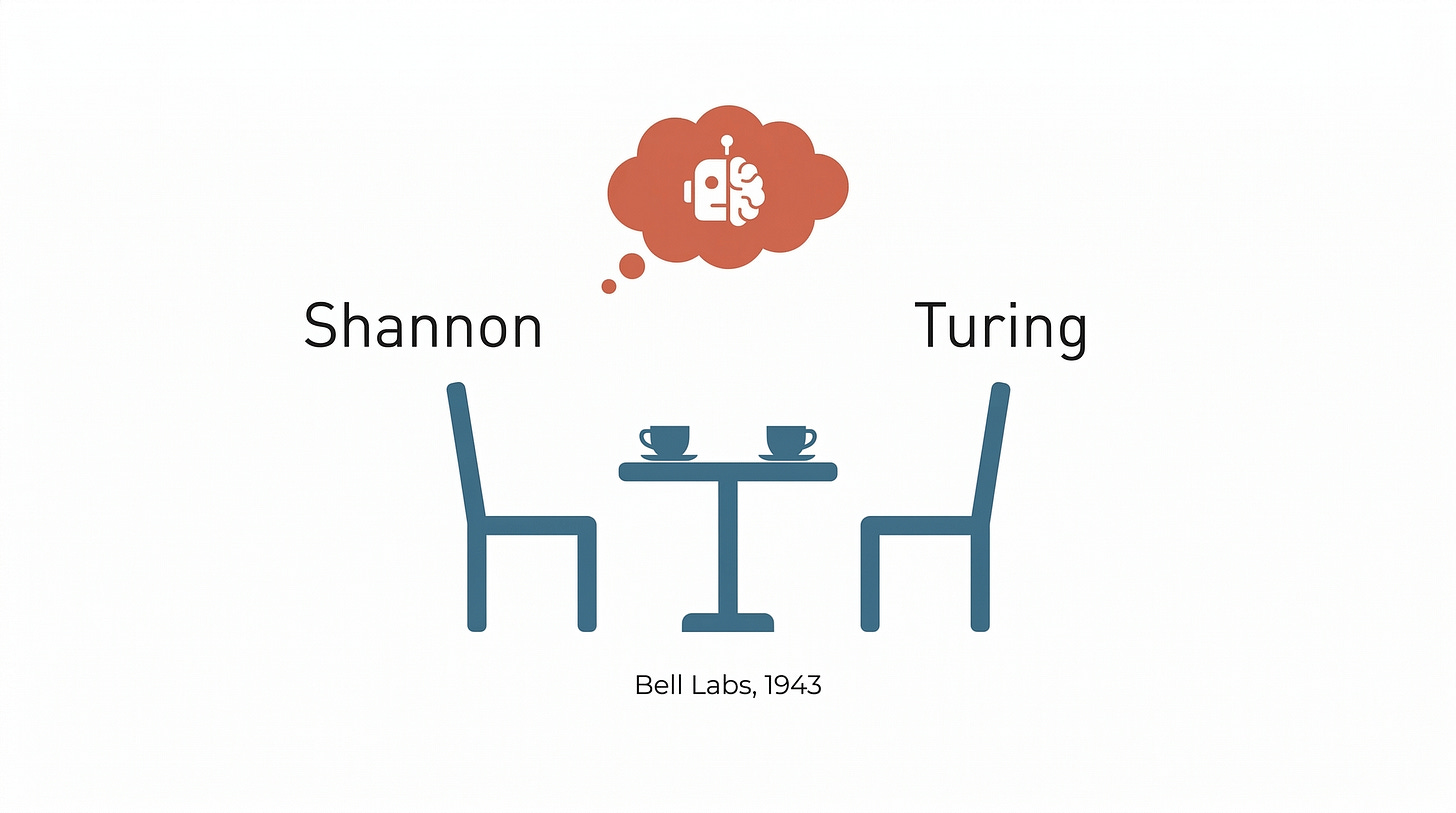

In early 1943, Alan Turing visited Bell Labs. Turing was in America as part of a liaison between British and American cryptographic operations. He and Shannon had tea together. Multiple times, by Shannon’s later account.

What did they talk about? Thinking machines.

Both men were already interested in the theoretical limits of computation and the possibility that machines might one day think. Shannon had been fascinated by the idea since his time with Vannevar Bush’s differential analyser. Turing had published “On Computable Numbers” in 1936, the paper that introduced the concept of a universal computing machine and laid the foundation for computer science itself.

Neither man disclosed the full details of their conversations, in part because much of their work was classified. But Shannon later confirmed that the discussions ranged across artificial intelligence, the design of computers, and what it would mean for a machine to exhibit intelligent behaviour. Two of the most important minds of the century, sitting in a Bell Labs cafeteria, drinking tea, and casually sketching the future of computing.

There’s a bitter irony in the security arrangements. Shannon himself wasn’t cleared for the major cryptographic projects at Bell Labs. He could explain his theoretical ideas to the cryptographers, but they couldn’t tell him what they were actually using them for. Shannon, characteristically, didn’t seem to mind. He was interested in the theory. What the government did with it was their problem.

Years later, Shannon would demonstrate that a universal Turing machine, the abstract device at the heart of all computing, could be constructed with only two internal states. It was a result of no practical importance and considerable theoretical beauty, exactly the kind of thing Shannon loved.

The paper that created a science

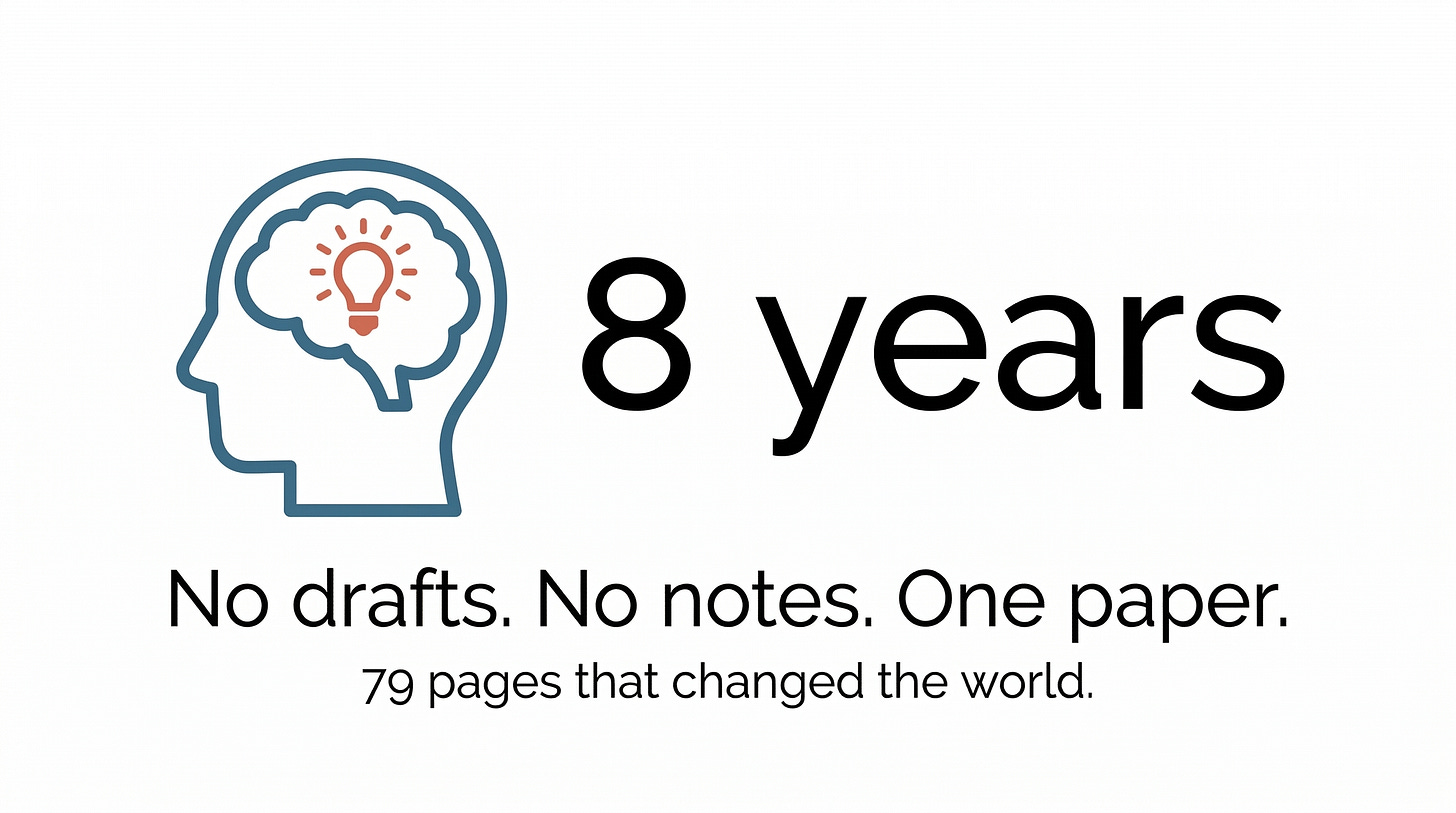

In the July and October 1948 issues of the Bell System Technical Journal, Claude Shannon published a seventy-nine-page paper called “A Mathematical Theory of Communication.” It is, by a reasonable consensus of engineers, mathematicians, and historians of science, one of the most important scientific papers ever written.

Scientific American called it the “Magna Carta of the Information Age.” It has been cited over 150,000 times on Google Scholar. It built the discipline of information theory from the ground up. And Shannon, by the accounts of those who knew him, carried the entire thing around in his head for roughly eight years before writing it down.

Robert Gallager, the MIT professor and information theorist, noted that there were no known drafts, no partial manuscripts, no working papers. Shannon worked on the theory throughout the early and mid-1940s, kept it all in his mind, and then wrote it.

Just wrote it.

The bit

The paper’s most famous contribution is the smallest: the bit, short for “binary digit.” Shannon established that the bit, a single yes-or-no, on-or-off, 1-or-0 choice, is the fundamental, irreducible unit of information. Every piece of digital data you have ever encountered, every photograph, every song, every film, every text message, every email, every web page, is composed of bits.

The word “bit” was suggested by John W. Tukey, the Princeton mathematician, as Shannon acknowledged in the paper itself. But the concept, the idea that all information, regardless of its form or content, could be measured and quantified in binary units, was Shannon’s.

“Shannon was the person who saw that the binary digit was the fundamental element in all of communication,” Robert Gallager said. “That was really his discovery, and from it the whole communications revolution has sprung.”

Shannon entropy

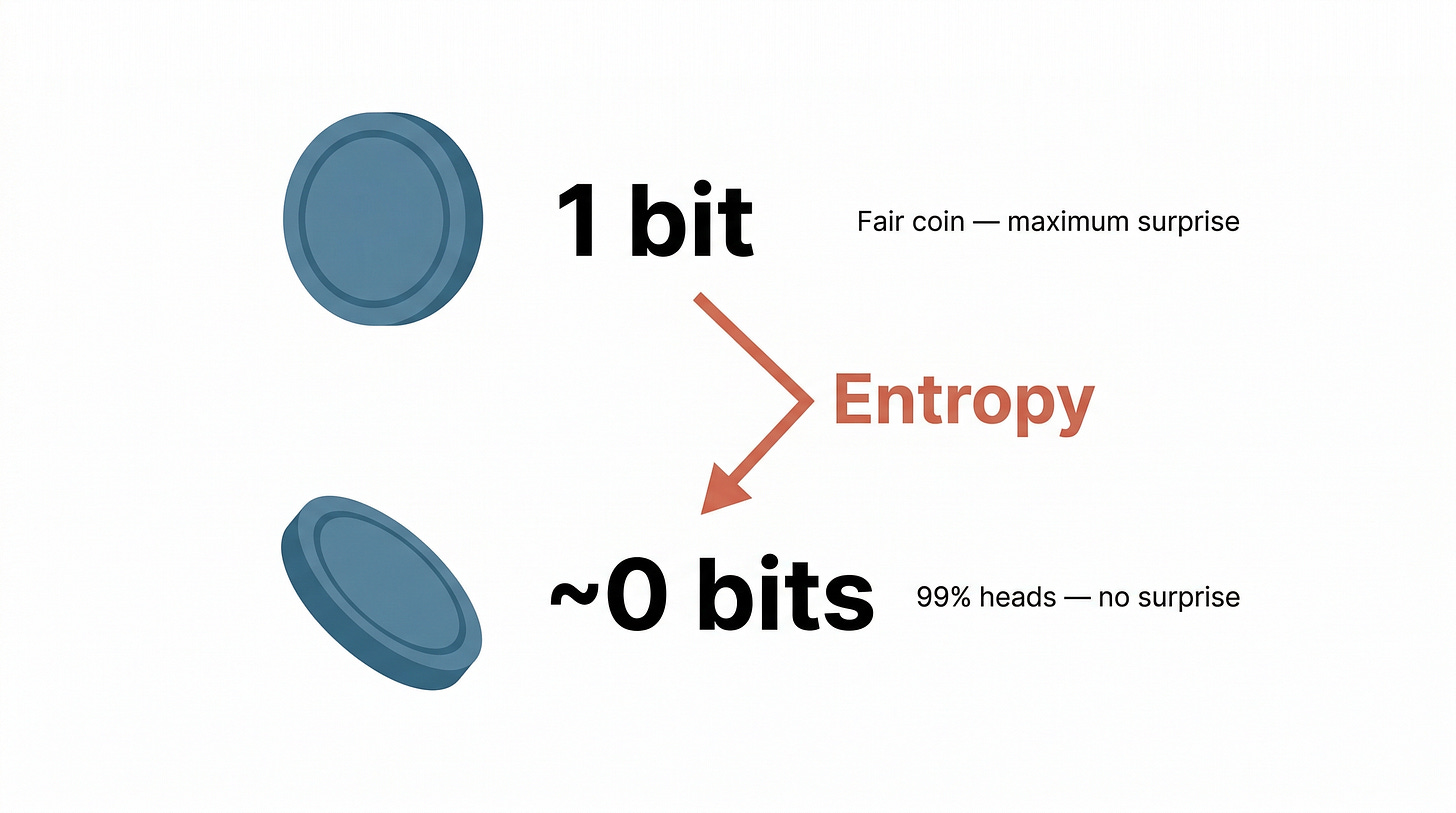

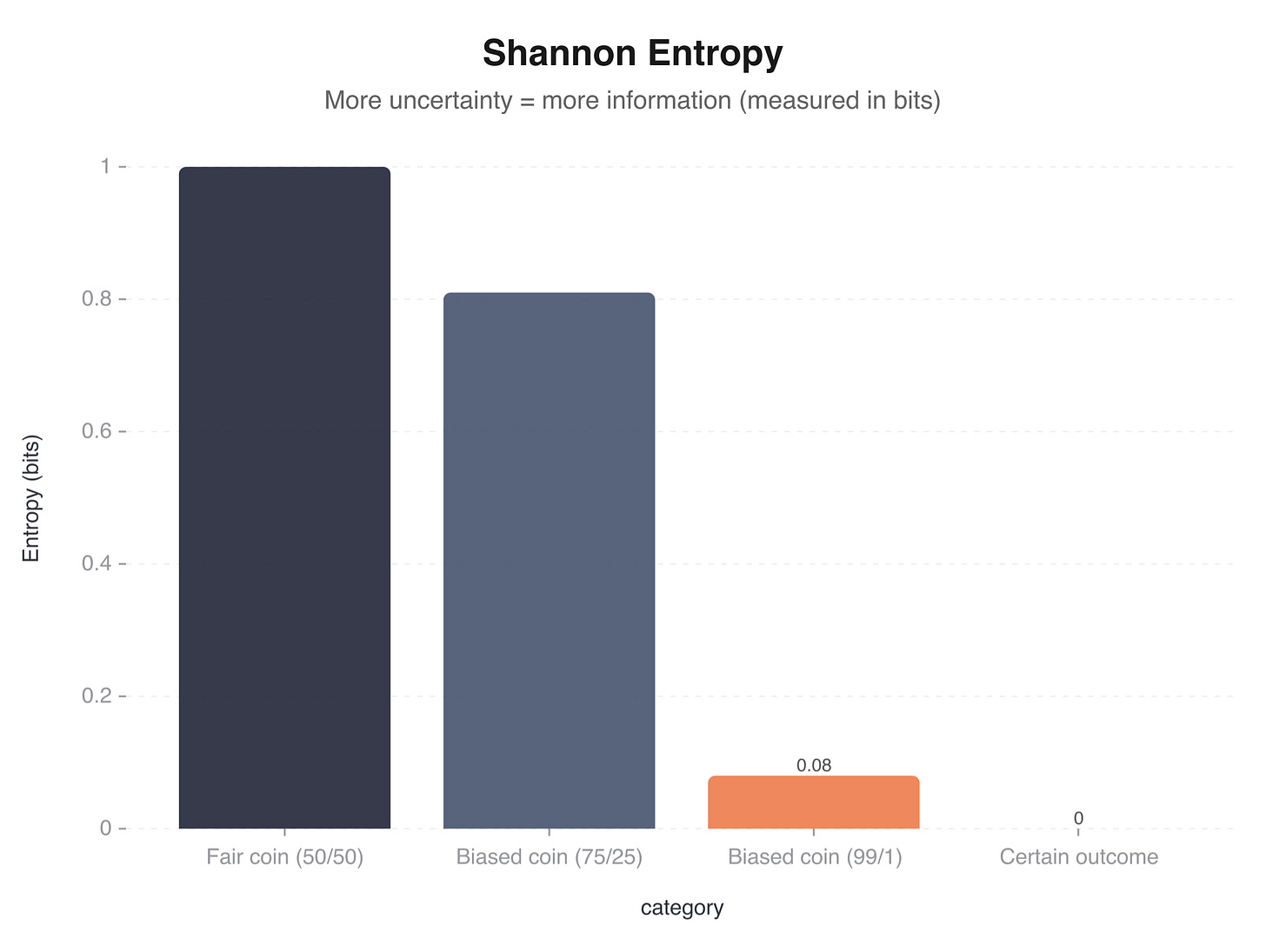

The paper’s deepest idea is entropy, Shannon’s measure of information content. Shannon entropy, written as $H(X) = -\sum_{x} p(x) \log_2 p(x)$, quantifies the uncertainty or surprise in a message source.

The concept is best understood through a coin flip.

Flip a fair coin. Before it lands, you genuinely don’t know whether it will be heads or tails. The outcome carries maximum uncertainty for a binary event.

Shannon entropy: exactly 1 bit.

Now flip a loaded coin that comes up heads 99% of the time. Before it lands, you already know, with near certainty, that it will be heads.

The outcome carries almost no surprise. The loaded coin carries almost no information because you already knew what was going to happen.

Shannon entropy: about 0.08 bits.

Information, in Shannon’s framework, is the resolution of uncertainty. A message is informative to the degree that it tells you something you didn’t already know.

A weather forecast that says “the sun rose this morning” carries essentially zero information. A weather forecast that says “snow in July in Auckland” carries a lot.
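The coin-flip numbers are easy to check. Here is a minimal sketch of the entropy formula in Python; the function name `entropy_bits` is an invention for this example, and the code assumes the probabilities passed in sum to 1.

```python
import math

def entropy_bits(probs) -> float:
    """Shannon entropy H = -sum(p * log2(p)), in bits.

    Outcomes with probability 0 are skipped, since they contribute nothing.
    """
    return -sum(p * math.log2(p) for p in probs if p > 0)

fair = entropy_bits([0.5, 0.5])      # heads and tails equally likely
loaded = entropy_bits([0.99, 0.01])  # heads 99% of the time

print(f"fair coin:   {fair:.4f} bits")
print(f"loaded coin: {loaded:.4f} bits")
```

The fair coin comes out at exactly 1 bit and the loaded coin at roughly 0.08 bits. The same function works for any number of outcomes, so a fair six-sided die can be checked with `entropy_bits([1/6] * 6)`.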

There’s a famous anecdote about the name “entropy.” John von Neumann, the mathematician, supposedly told Shannon to call his measure “entropy” because “in the first place, a mathematical development very much like yours already exists in Boltzmann’s statistical mechanics, and in the second place, no one understands entropy, and in a battle of wits you will always have the advantage.” The story is widely repeated. Shannon himself cast doubt on it. In an interview with the journalist John Horgan, Shannon said the remark “sounds like the kind of remark I might have made as a joke.” Whether von Neumann actually said it remains, charitably, unconfirmed.

Channel capacity

Shannon’s second major result was the channel capacity theorem, also called the noisy-channel coding theorem. It states that for any communication channel with a given level of noise, there exists a maximum rate, measured in bits per second, at which information can be transmitted reliably. Below this rate, it’s possible to communicate with an arbitrarily low error rate. Above it, reliable communication is impossible, no matter how clever your encoding scheme.

This was shocking. Before Shannon, engineers assumed that noisy channels would always produce some irreducible level of errors. Shannon proved that noise could be overcome entirely, as long as you stayed below the channel’s capacity and used sufficiently sophisticated coding. The theorem didn’t tell you how to build the codes. It just proved they existed. Engineers spent roughly the next half-century catching up to Shannon’s theoretical limit, and modern codes like turbo codes and low-density parity-check codes come within a whisker of it.
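For one simple channel the capacity can be computed directly. The sketch below assumes a binary symmetric channel, a standard textbook model in which each transmitted bit is flipped with some fixed probability. That choice of channel and the function names are assumptions made for illustration. Capacity here is measured in bits per channel use rather than bits per second.

```python
import math

def binary_entropy(p: float) -> float:
    """Entropy, in bits, of a yes/no event that occurs with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def bsc_capacity(flip_prob: float) -> float:
    """Capacity of a binary symmetric channel: C = 1 - H(flip_prob)."""
    return 1.0 - binary_entropy(flip_prob)

for p in (0.0, 0.01, 0.11, 0.5):
    print(f"flip probability {p:.2f} -> capacity {bsc_capacity(p):.3f} bits per use")
```

A noiseless channel carries a full bit per use, and a channel that flips bits half the time carries nothing, because its output is indistinguishable from coin tosses. Everything in between is a rate that good codes can approach but never exceed.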

The Markov chain experiment

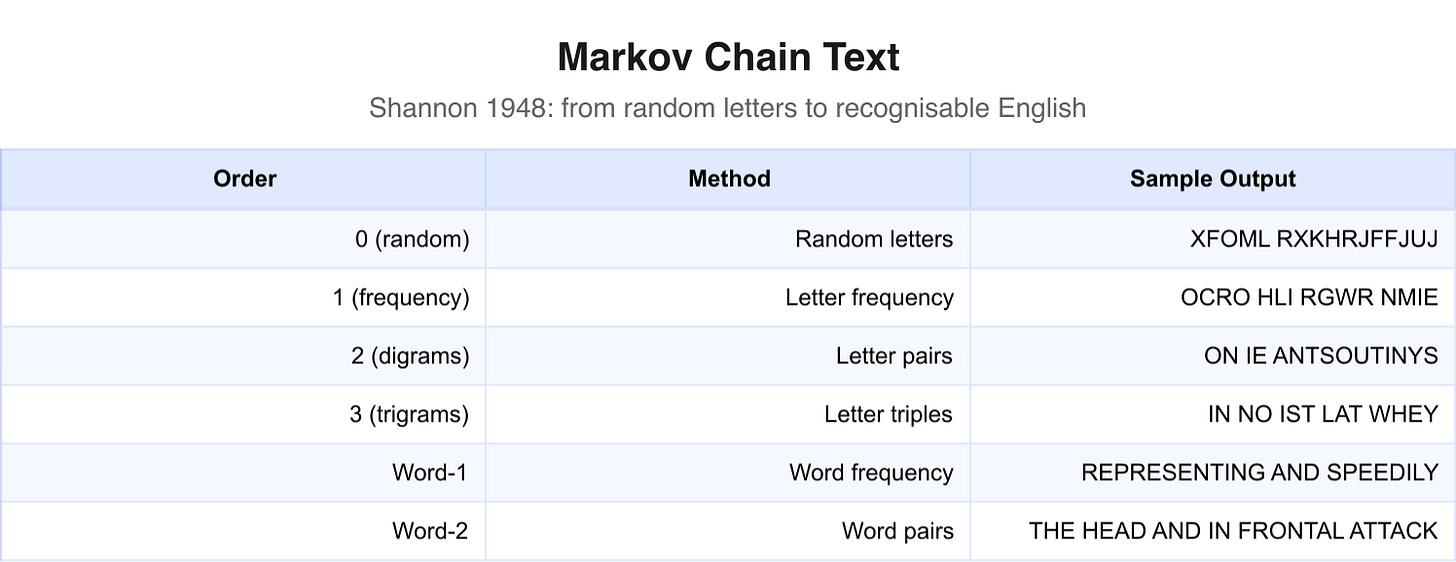

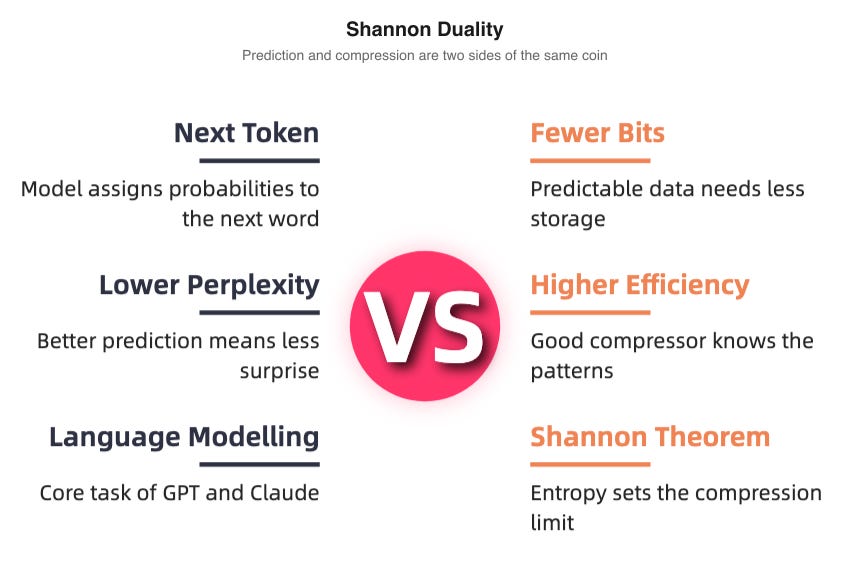

Buried in the paper is a section that reads, in retrospect, like a prophecy. Shannon demonstrated that you could generate surprisingly realistic-looking English text using Markov chains, statistical models that predict the next character or word based on the preceding ones.

He started simple. A “zero-order” model picks letters at random, producing gibberish. A “first-order” model picks letters with the same frequency they appear in English, producing something slightly less random. A “second-order” model considers pairs of letters, and the output starts to look vaguely like English. By the time you get to word-level models conditioned on one or two preceding words, the output produces recognisable fragments of English prose, grammatically plausible phrases that almost but don’t quite mean anything.
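The experiment is simple enough to reproduce. The following is a minimal character-level sketch, not Shannon’s procedure (he worked by hand from printed frequency tables and books). The tiny corpus, the function names, and the `order` parameter are all assumptions for illustration. Here `order` is the number of preceding characters used as context, so `order=0` corresponds to picking letters by frequency alone.

```python
import random
from collections import defaultdict

def build_model(text: str, order: int):
    """Map each `order`-character context to the list of characters that followed it."""
    model = defaultdict(list)
    for i in range(len(text) - order):
        model[text[i:i + order]].append(text[i + order])
    return model

def generate(model, order: int, length: int, seed_text: str, rng=random) -> str:
    """Extend seed_text one character at a time by sampling from the model."""
    out = seed_text
    while len(out) < length:
        context = out[-order:] if order > 0 else ""
        followers = model.get(context)
        if not followers:  # context never seen in the corpus: stop early
            break
        # Sampling from the raw list picks each follower in proportion to its frequency.
        out += rng.choice(followers)
    return out

corpus = (
    "the quick brown fox jumps over the lazy dog and the lazy dog "
    "watches the quick brown fox run over the hill and through the wood "
)
order = 2
model = build_model(corpus, order)
print(generate(model, order, 80, corpus[:order], random.Random(0)))
```

With a corpus this small the output mostly recombines fragments of the input. Raising `order` makes the text more coherent but less novel, which is the same trade-off Shannon’s successive approximations illustrate.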

Shannon’s Markov chain experiment in 1948 is the direct conceptual ancestor of modern large language models. GPT, Claude, Gemini, and every other language model are, at their mathematical core, doing the same thing Shannon described:

They predict the next token based on the preceding context.

The models are incomparably larger and more sophisticated, with billions of parameters trained on trillions of words of text. But the underlying principle (statistical prediction of sequential text) is Shannon’s.

The bandwagon warning

In 1956, Shannon published a short, sharp editorial in the IRE Transactions on Information Theory called “The Bandwagon.” Information theory had become fashionable. Researchers in biology, psychology, linguistics, economics, and half a dozen other fields were rushing to apply Shannon’s framework to their own domains. Shannon was not impressed.

He warned that the “basic results of the subject are aimed in a very specific direction, a direction that is not necessarily relevant to such fields as psychology, economics, and other social sciences.”

He urged caution, intellectual humility, and the recognition that a mathematical theory of communication was not automatically a mathematical theory of everything. The man who created the field was the first to warn against its overuse.

Fun times. The warning went largely unheeded. Look at the world now.

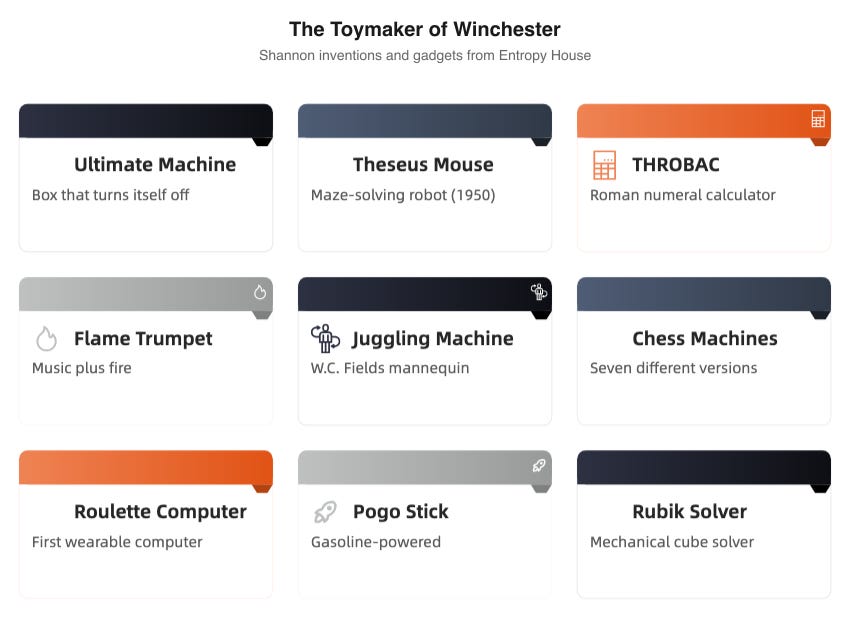

The toymaker of Winchester

Shannon and his second wife, Betty, a mathematician and former colleague at Bell Labs who had worked on fire-control computations during the war, lived in a large house in Winchester, Massachusetts, overlooking the Mystic Lakes. They called it “Entropy House.” It had a two-storey room that Shannon filled, floor to ceiling, with gadgets, contraptions, gizmos, and machines of every description.

The toy room was Shannon’s real laboratory. His office at MIT (where he held a professorship from 1956) and his position at Bell Labs were where he did the work the world cared about. The toy room was where he did the work he cared about.

The juggling mathematician

Shannon was obsessed with juggling. He built multiple juggling machines, including one that featured a W.C. Fields mannequin throwing balls in a cascade pattern. He developed a mathematical theorem for juggling1: (F+D)H = (V+D)N, where F is flight time, D is the “dead time” a ball spends in the hand, H is the number of hands, V is the time a hand is empty, and N is the number of balls.

Classic Shannon: take something playful, find the mathematical structure hiding inside it, formalise it, then go back to playing.
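As a quick worked example, the juggling theorem can be rearranged to find how long each hand sits empty. The timings below are made-up illustrative values, not measurements, and the function name is an invention for this sketch.

```python
def empty_hand_time(flight: float, dwell: float, hands: int, balls: int) -> float:
    """Solve (F + D) * H = (V + D) * N for V, the time each hand spends empty."""
    return (flight + dwell) * hands / balls - dwell

# Hypothetical three-ball cascade: 0.6 s in the air, 0.2 s held in the hand.
v = empty_hand_time(flight=0.6, dwell=0.2, hands=2, balls=3)
print(f"each hand is empty for about {v:.2f} s per cycle")

# Both sides of the theorem should agree once V is known.
assert abs((0.6 + 0.2) * 2 - (v + 0.2) * 3) < 1e-9
```

Adding a fourth ball with the same flight and dwell times shrinks the empty time further, which is one way to see why more balls demand higher throws.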

He rode a unicycle through the corridors of Bell Labs. While juggling. His colleagues, some of the most brilliant scientists and engineers in America, would flatten themselves against the walls as Shannon wobbled past, three balls arcing above his head, grinning.

The inventions

The list of Shannon’s gadgets reads like the catalogue of a very eccentric museum:

A flame-throwing trumpet (it played music and shot fire; the practical application was nil)

A rocket-powered Frisbee (exactly what it sounds like)

THROBAC (THrifty ROman-numeral BAckward-looking Computer), a calculator that worked in Roman numerals

A gasoline-powered pogo stick (dangerous; Shannon loved it)

A machine that solved Rubik’s Cubes

Seven different chess-playing machines

A hundred-blade jackknife (a Swiss Army knife taken to its illogical conclusion)

A mind-reading machine (more accurately, a penny-matching machine that used game theory to beat humans at a guessing game)

A chess automaton that made witty remarks while playing

Styrofoam shoes for walking on water (they didn’t work well, but he tried)

“I’ve always pursued my interests without much regard for financial value or value to the world,” Shannon once said. “I’ve spent lots of time on totally useless things.”

This wasn’t false modesty. Shannon genuinely followed his curiosity wherever it led, and he made no distinction between “serious” work and play. The information theory was serious. The juggling theorem was play. Both received the same quality of attention. Both emerged from the same mind.

Shannon saw no hierarchy.

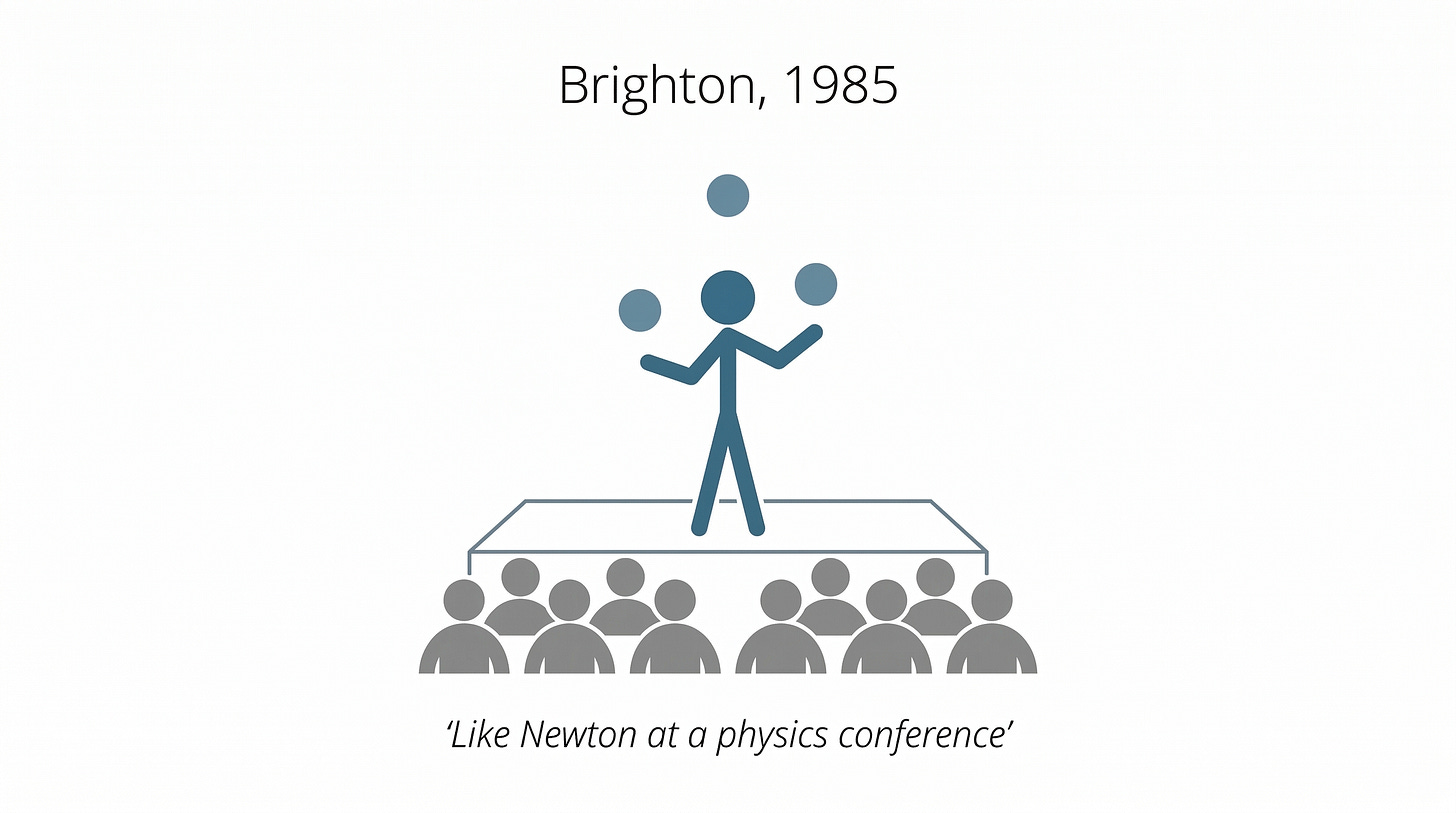

Brighton, 1985

In 1985, after years of growing reclusiveness, Shannon appeared at an information theory conference in Brighton, England. The attendees were stunned. Shannon hadn’t appeared at a major conference in years. He was a living legend, and most of the younger researchers in the room had never seen him in person.

He spoke briefly. The audience hung on every word.

Then he reached into his pockets, pulled out three balls, and juggled.

The room erupted. An attendee later described the scene: “It was as if Newton had showed up at a physics conference.”

Delegates queued up for autographs. The father of information theory had returned, and he’d brought his juggling balls.

Beating the house

Shannon’s playful side had a quantitative edge that occasionally made real money. In the early 1960s, he partnered with Ed Thorp, a mathematics professor at MIT (later at UC Irvine) who would go on to write Beat the Dealer and become one of the most successful hedge fund managers in history. Together, they built something remarkable: what is considered the first wearable computer.

The device was designed to beat roulette. It was small enough to fit inside a cigarette pack. The user controlled it with microswitches embedded in their shoes, operated by their big toes. The computer’s output was delivered as musical tones through a tiny earpiece. By timing the speed of the roulette wheel and the ball, the computer could determine which of eight segments of the wheel the ball was most likely to land in. The system was working by June 1961.

Shannon, ever the theorist, applied social network reasoning to their operation’s security. He demanded strict secrecy, arguing that he was only about “three degrees” from an enraged casino owner. One leak through a friend of a friend of a friend, and they’d be in serious trouble.

They chickened out. After one trial run in a Las Vegas casino, where the system reportedly performed as predicted, Shannon and Thorp abandoned the project. The hardware was finicky. The earpiece kept falling out. And, well, Shannon’s curiosity had been satisfied. Onto the next.

Gallager captured it well: Shannon “tired of the roulette scheme before becoming successful, as he really was not interested in making money with the scheme, but only in whether it could be done.”

(This is Shannon in a nutshell. Can the thing be done? Yes? Great. Moving on.)

The stock market

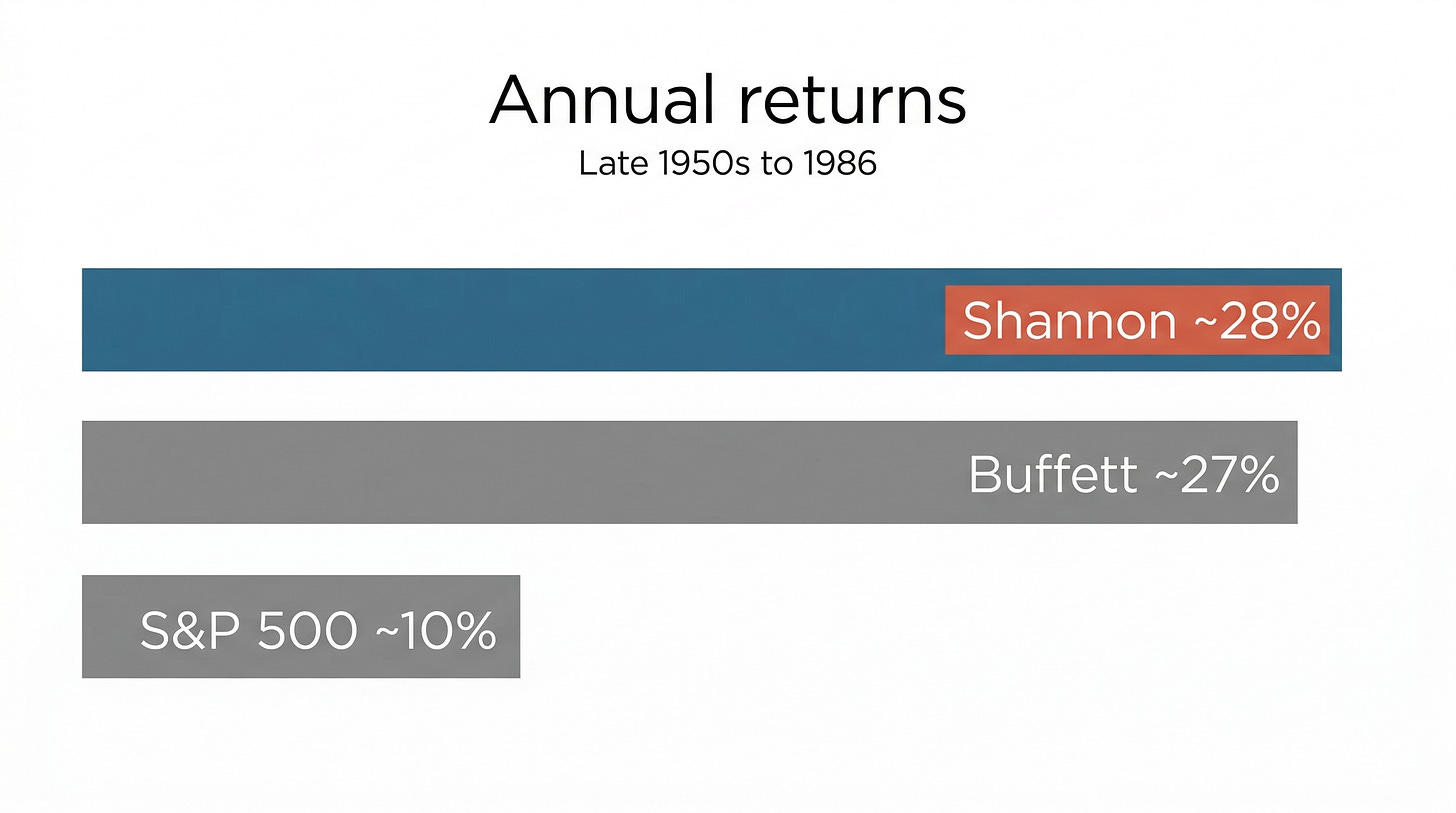

Shannon’s lack of interest in roulette profits didn’t extend to the stock market. He was a remarkably successful investor, achieving roughly 28% annual returns over several decades from the late 1950s to 1986. According to Barron’s, that record beat 1,025 of 1,026 mutual funds. He invested in companies like Teledyne, Motorola, and Hewlett-Packard, companies whose engineering cultures he understood and whose products he could evaluate from first principles.

The connection between Shannon’s information theory and his investment returns wasn’t entirely accidental. John Kelly, a colleague at Bell Labs, developed the Kelly criterion, a formula for optimal bet sizing directly inspired by Shannon’s information theory. Kelly showed that the same mathematics Shannon used to determine the capacity of a communication channel could determine how much of your bankroll to wager when you had an informational edge. Professional gamblers and quantitative investors still use the Kelly criterion today.

Shannon and Thorp discussed the Kelly criterion extensively. Thorp would later apply it with devastating effectiveness in both blackjack and the financial markets. Shannon applied it more quietly, to his personal portfolio, and did very well indeed.
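For a simple win-or-lose bet, the Kelly criterion reduces to a one-line formula. The sketch below uses that common textbook form, with illustrative parameter names and example numbers that are assumptions rather than anything Shannon or Thorp actually wagered.

```python
def kelly_fraction(win_prob: float, net_odds: float) -> float:
    """Kelly stake as a fraction of bankroll: f* = p - (1 - p) / b.

    win_prob: probability of winning the bet (p)
    net_odds: profit per unit staked on a win (b); 1.0 means even money
    """
    return win_prob - (1.0 - win_prob) / net_odds

# A hypothetical even-money bet that wins 55% of the time.
stake = kelly_fraction(win_prob=0.55, net_odds=1.0)
print(f"stake {stake:.0%} of the bankroll")
```

With a 55% chance at even money the formula says to stake about a tenth of the bankroll. A result of zero or below means there is no edge and the right bet is nothing at all.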

The prophet who refused to prophesy

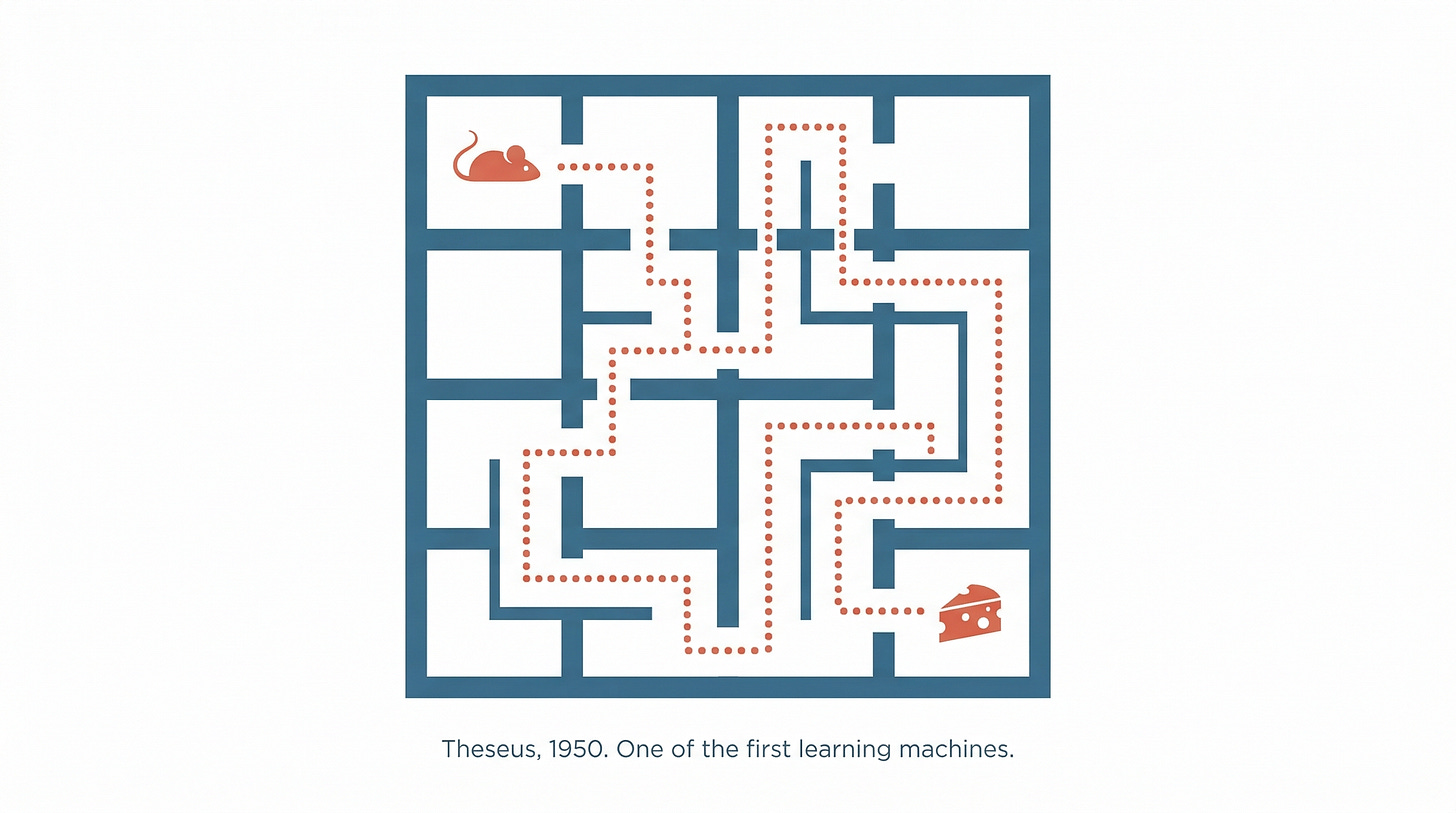

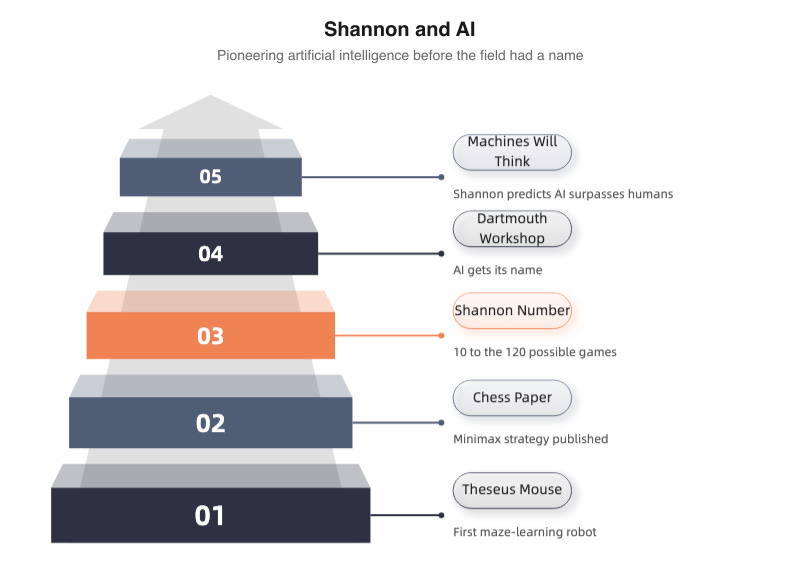

Shannon’s contributions to artificial intelligence began before the field had a name. In 1950-51, he built Theseus, an electromechanical mouse that could learn to navigate a maze. The mouse (named after the mythological hero who navigated the Minotaur’s labyrinth) was controlled by a circuit of roughly 90 telephone relays mounted beneath the maze. On its first attempt, Theseus would wander randomly until it found the goal.

On subsequent attempts, it remembered the correct path.

Honestly, the “intelligence” was in the relay circuit under the maze, not in the mouse itself. But the demonstration was visually arresting, and it became one of the earliest public demonstrations of machine learning. Shannon brought Theseus to conferences and cocktail parties with equal enthusiasm.

The chess paper

Also in 1950, Shannon published “Programming a Computer for Playing Chess,” the first detailed technical paper on how a computer could play chess algorithmically. He proposed the minimax strategy (evaluate all possible moves by assuming your opponent will always make the best counter-move) and distinguished between Type A strategies (brute-force evaluation of all possible moves to a given depth) and Type B strategies (selective evaluation of only the most promising moves, more like human play).
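The minimax idea fits in a few lines when the game tree is small enough to write out by hand. This sketch is not code from Shannon’s paper: the nested-list tree and its scores are hypothetical, and searching every branch to the bottom, as done here, corresponds to the brute-force Type A approach.

```python
def minimax(node, maximizing: bool) -> int:
    """Return the best score reachable from `node` if both sides play optimally.

    A node is either an int (a leaf: the evaluation of a final position)
    or a list of child nodes (the positions reachable in one move).
    """
    if isinstance(node, int):
        return node
    scores = [minimax(child, not maximizing) for child in node]
    return max(scores) if maximizing else min(scores)

# A hypothetical two-ply tree: we choose a branch, then the opponent chooses a leaf.
tree = [[3, 12], [2, 8], [14, 1]]
print(minimax(tree, maximizing=True))  # 3
```

The third branch contains the tempting 14, but the opponent would answer with the 1, so the first branch, whose worst case is 3, is the best choice.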

He also calculated what’s now called the Shannon Number: approximately 10^120, a deliberately rough, conservative estimate of the number of possible chess games. For perspective, the number of atoms in the observable universe is roughly 10^80. The Shannon Number is so much larger that “astronomical” doesn’t begin to cover it. It made the case that chess could not realistically be played by exhaustive brute force, that any chess-playing computer would need to be selective and, in some functional sense, intelligent about which possibilities it explored.
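The arithmetic behind the estimate is short enough to check directly. Shannon's reasoning was roughly this: about 30 legal moves per position gives on the order of 10^3 possibilities for each pair of moves (one by White, one by Black), and a typical game lasts about 40 such pairs. The variable names below are illustrative.

```python
MOVES_PER_POSITION = 30        # Shannon's rough average number of legal moves
MOVE_PAIRS_PER_GAME = 40       # a typical game length, in White-plus-Black pairs

per_pair_exact = MOVES_PER_POSITION ** 2     # 900
per_pair_rounded = 10 ** 3                   # rounded up, as in the estimate

games_rounded = per_pair_rounded ** MOVE_PAIRS_PER_GAME
games_exact = per_pair_exact ** MOVE_PAIRS_PER_GAME

def power_of_ten(n):
    """Order of magnitude of a positive integer."""
    return len(str(n)) - 1

shannon_exponent = power_of_ten(games_rounded)       # 120
unrounded_exponent = power_of_ten(games_exact)       # 118
games_per_atom = games_rounded // 10 ** 80           # 10**40 games for every atom
```

Even without the rounding, the count stays near 10^118, so the conclusion does not depend on the exact figures chosen.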

Dartmouth, 1956

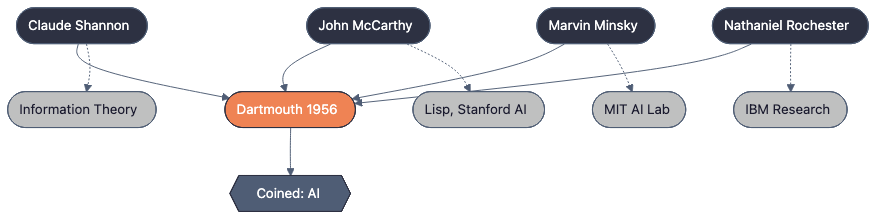

In 1956, Shannon co-organised the Dartmouth Workshop⁴ alongside John McCarthy, Marvin Minsky, and Nathaniel Rochester. The workshop’s proposal, written primarily by McCarthy, coined the term “artificial intelligence” and laid out the research programme that would define the field for the next half-century. It was a small gathering, about ten participants spending several weeks at Dartmouth College in New Hampshire. The attendees included many of the people who would shape AI for decades to come.

Shannon’s role at Dartmouth was characteristic. He participated, contributed, and then stepped back. Unlike McCarthy and Minsky, who became tireless evangelists for AI, Shannon kept a quieter, more cautious stance. He believed machines could think. He was optimistic about the long-term prospects. But he didn’t make bold predictions about timelines, and he didn’t build an institutional empire around AI research the way Minsky did at MIT or McCarthy did at Stanford.

In his own words

Shannon’s later comments on AI were direct and unhedged:

“You bet,” he said when asked if machines could think. “I’m a machine, and you’re a machine, and we both think, don’t we?”

He described himself and other humans as machines, meat machines operating on electrical impulses, and saw no principled reason why silicon machines couldn’t do the same. “It is certainly plausible to me that in a few decades machines will be beyond humans,” he said. In his 1985 Kyoto Prize acceptance speech, he suggested machines would “rival or even surpass the human brain.”

But Shannon was an optimist who kept his predictions vague. He never staked his reputation on a specific date. He never claimed intelligence was “just around the corner.” He saw where things were heading and declined to specify how far away the destination was.

Shannon’s ghost in the machine

Every time a large language model generates a sentence, it’s doing Shannon’s work. The connection between 1948 information theory and 2026 artificial intelligence isn’t metaphorical or inspirational. It’s mathematical. Shannon’s concepts are embedded in the literal machinery of modern AI at every level, from the training objective to the hardware.

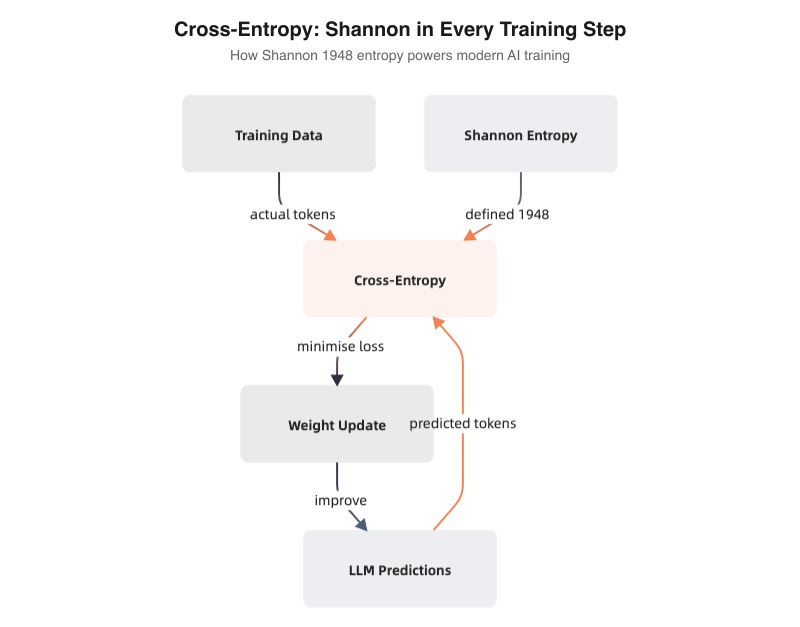

Cross-entropy loss: the training signal

When a language model like Claude or GPT is trained, it learns by minimising cross-entropy loss. This is, directly, Shannon’s entropy applied to the comparison between two probability distributions: the model’s predicted distribution over possible next tokens, and the actual distribution (the token that actually comes next in the training data).

In Shannon’s terms: the model is trying to become a better predictor of text. Every training step pushes it to assign higher probability to the tokens that actually appear and lower probability to the tokens that don’t. Cross-entropy measures how far the model’s predictions are from reality. Lower cross-entropy means better predictions. The entire multi-billion-dollar enterprise of training large language models is, at its mathematical root, an exercise in minimising a quantity built directly on the entropy that Claude Shannon defined in 1948.
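For a single prediction the calculation is tiny: the loss is the negative logarithm of the probability the model gave to the token that actually came next. The sketch below uses base-2 logarithms so the answer comes out in bits (training frameworks typically use natural logarithms instead), and the vocabulary and probabilities are invented for illustration.

```python
import math

def cross_entropy_bits(predicted, actual_token):
    """Loss for one prediction: -log2 of the probability given to the true token."""
    return -math.log2(predicted[actual_token])

# Hypothetical next-token distributions after "the cat sat on the ..."
early_model = {"mat": 0.25, "dog": 0.25, "moon": 0.25, "sofa": 0.25}
later_model = {"mat": 0.50, "dog": 0.05, "moon": 0.05, "sofa": 0.40}

early_loss = cross_entropy_bits(early_model, "mat")   # 2.0 bits
later_loss = cross_entropy_bits(later_model, "mat")   # 1.0 bit
```

Training averages this quantity over enormous numbers of tokens and nudges the model's parameters to make the average smaller.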

KL divergence

Kullback-Leibler divergence, another information-theoretic measure descending directly from Shannon’s work, is central to modern machine learning. It quantifies how one probability distribution differs from another. KL divergence shows up in variational autoencoders (VAEs), in reinforcement learning from human feedback (RLHF, the technique used to align language models with human preferences), and throughout probabilistic machine learning. In RLHF, for instance, a KL penalty is commonly used to stop the fine-tuned model from drifting too far from the model it started as.
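A small sketch of the standard definition for discrete distributions, measured in bits. The two example distributions are made up; the point is only that the divergence is zero when the distributions match, positive otherwise, and not symmetric.

```python
import math

def kl_divergence_bits(p, q):
    """D_KL(p || q): extra bits paid, on average, for coding samples of p with a code built for q."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical distributions over the same two outcomes.
p = [0.5, 0.5]
q = [0.25, 0.75]

forward = kl_divergence_bits(p, q)   # roughly 0.21 bits
reverse = kl_divergence_bits(q, p)   # roughly 0.19 bits
same = kl_divergence_bits(p, p)      # 0.0
```

The asymmetry is why it is called a divergence rather than a distance: which distribution plays the role of the reference matters.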

Prediction as compression

Shannon proved that prediction and compression are two sides of the same coin. A model that can predict the next symbol in a sequence with high accuracy can also compress that sequence efficiently, and vice versa. This equivalence is foundational to modern thinking about language models. A well-trained LLM is, in a precise information-theoretic sense, a good compressor of text. It has learned the statistical structure of language well enough to represent it efficiently.

This is why researchers sometimes describe language model training as “learning a compression of the internet.”

The model doesn’t memorise every webpage. It learns the patterns, the regularities, the statistical structure well enough to reconstruct plausible text. Shannon would have recognised this immediately.
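The link can be made concrete with the ideal code length: a symbol predicted with probability p costs about -log2(p) bits to encode, so a better predictor yields a shorter encoding. The sketch below compares two toy "models" of a short string. Fitting the second model on the very text it then encodes is a simplifying assumption that flatters it, and the names are illustrative.

```python
import math
from collections import Counter

def code_length_bits(text, model):
    """Ideal total code length if each symbol costs -log2 of its predicted probability."""
    return sum(-math.log2(model[ch]) for ch in text)

text = "abracadabra"
alphabet = sorted(set(text))

# Model 1: knows nothing, treats every symbol as equally likely.
uniform = {ch: 1 / len(alphabet) for ch in alphabet}

# Model 2: has learned how often each symbol occurs (fitted on this same text).
counts = Counter(text)
fitted = {ch: n / len(text) for ch, n in counts.items()}

uniform_bits = code_length_bits(text, uniform)   # about 25.5 bits
fitted_bits = code_length_bits(text, fitted)     # about 22.4 bits
```

A language model plays the part of a far richer `fitted`: its probabilities depend on everything that came before, so the bits per symbol fall much further.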

Decision trees and information gain

In machine learning, decision trees split data at each node by choosing the feature that provides the most information gain, which is defined as the reduction in Shannon entropy. The algorithm literally asks: “Which question, asked at this point, would resolve the most uncertainty about the outcome?” Shannon’s entropy is the yardstick.
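A minimal sketch of that calculation. The tiny weather-style dataset and the feature names are invented for illustration; real decision-tree libraries add many refinements on top of this.

```python
import math
from collections import Counter

def entropy_bits(labels):
    """Shannon entropy of a list of class labels, in bits."""
    total = len(labels)
    return -sum(n / total * math.log2(n / total) for n in Counter(labels).values())

def information_gain(rows, labels, feature):
    """Entropy before the split minus the size-weighted entropy after it."""
    groups = {}
    for row, label in zip(rows, labels):
        groups.setdefault(row[feature], []).append(label)
    after = sum(len(g) / len(labels) * entropy_bits(g) for g in groups.values())
    return entropy_bits(labels) - after

# Hypothetical data: did the match go ahead?
rows = [
    {"outlook": "sunny", "windy": False},
    {"outlook": "sunny", "windy": True},
    {"outlook": "rainy", "windy": False},
    {"outlook": "rainy", "windy": True},
]
labels = ["yes", "yes", "no", "no"]

gain_outlook = information_gain(rows, labels, "outlook")  # 1.0 bit: settles the outcome
gain_windy = information_gain(rows, labels, "windy")      # 0.0 bits: tells us nothing
best_feature = max(["outlook", "windy"], key=lambda f: information_gain(rows, labels, f))
```

The tree-building algorithm would split on `outlook` first, because asking about it removes all of the uncertainty in this toy dataset.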

The hardware layer

Beneath all of this, beneath the training algorithms and the loss functions and the probability distributions, sits the hardware. Every GPU and TPU running an AI model is built from billions of logic gates performing Boolean operations: AND, OR, NOT. These are the operations that Shannon’s 1937 master’s thesis showed switching circuits could carry out, and from which any logical function can be built.

The entire physical substrate of artificial intelligence, every transistor in every chip, exists because a twenty-one-year-old in 1937 noticed that electrical switches obeyed the same rules as Boolean algebra.
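To see how arithmetic falls out of nothing but AND, OR and NOT, here is a sketch of a ripple-carry adder assembled from those three operations. Shannon's thesis included a relay design for binary addition; the code below is a software illustration of the same principle, with function names chosen for this example.

```python
def AND(a, b):
    return a & b

def OR(a, b):
    return a | b

def NOT(a):
    return 1 - a

def XOR(a, b):
    """Exclusive-or built only from AND, OR and NOT."""
    return AND(OR(a, b), NOT(AND(a, b)))

def full_adder(a, b, carry_in):
    """Add three bits; return (sum bit, carry out)."""
    partial = XOR(a, b)
    total = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return total, carry_out

def add_bits(x_bits, y_bits):
    """Ripple-carry addition of two equal-length bit lists, least significant bit first."""
    carry, out = 0, []
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        out.append(s)
    return out + [carry]

def to_bits(n, width):
    return [(n >> i) & 1 for i in range(width)]

def from_bits(bits):
    return sum(bit << i for i, bit in enumerate(bits))

result = from_bits(add_bits(to_bits(5, 4), to_bits(6, 4)))  # 11
```

Multiplication, comparison and everything else a chip does can be layered on top in the same way, which is why a result about switches turned out to be a result about computers.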

The English entropy experiment

In 1951, Shannon published “Prediction and Entropy of Printed English,” a paper in which he estimated the entropy rate of written English. He did this by asking human subjects to guess the next letter in a sequence of text and using their accuracy to bound the information content per character. His bounds came out at roughly 0.6 to 1.3 bits of information per character, far less than the roughly 4.7 bits a 27-symbol alphabet (26 letters plus the space) could carry, because language is highly redundant and predictable.

This is, functionally, the same task a language model performs. A language model trained on English text is estimating the entropy rate of English, learning how predictable the language is at each point, and using that knowledge to generate plausible continuations.

Shannon measured English entropy with human guessers in 1951. Language models measure it with neural networks in 2026. The question is the same.
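The guessing game is easy to imitate with a crude stand-in for the human subject. In the sketch below, a guesser that knows only which letter tends to follow which tries candidates in order of likelihood, and the number of guesses needed at each position is recorded. Shannon used the spread of these guess numbers to bound the entropy from above. The sample sentence, the bigram guesser, and the fact that it is trained on the same text it guesses are all simplifying assumptions, so the figure it produces says nothing reliable about English as a whole.

```python
import math
from collections import Counter, defaultdict

TEXT = "the cat sat on the mat and the rat sat on the cat"

def guess_counts(text):
    """Number of guesses a bigram-based guesser needs for each successive character."""
    follow = defaultdict(Counter)
    for prev, nxt in zip(text, text[1:]):
        follow[prev][nxt] += 1
    overall = Counter(text)

    counts = []
    for prev, nxt in zip(text, text[1:]):
        # Guess the likeliest followers of `prev` first, then fall back on overall frequency.
        ranked = [ch for ch, _ in follow[prev].most_common()]
        ranked += [ch for ch, _ in overall.most_common() if ch not in ranked]
        counts.append(ranked.index(nxt) + 1)
    return counts

def entropy_bits(values):
    """Shannon entropy of the empirical distribution of `values`, in bits."""
    total = len(values)
    return -sum(n / total * math.log2(n / total) for n in Counter(values).values())

counts = guess_counts(TEXT)
first_try_share = counts.count(1) / len(counts)   # how often the first guess was right
upper_bound_bits = entropy_bits(counts)           # entropy of the guess-number distribution
max_bits = math.log2(27)                          # 26 letters plus space, all equally likely
```

Swap the bigram guesser for a neural network that reads the whole preceding context and the same bookkeeping describes what a language model's loss is measuring.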

The semantic caveat

Shannon was careful to note, on the very first page of his 1948 paper, that the “semantic aspects of communication are irrelevant to the engineering problem.” His theory was about the transmission of messages, not their meaning. In Shannon’s framework, a channel handles “the cat sat on the mat” and an equally long string of random characters in exactly the same way: what counts is how many bits get through, not what they say.

Modern AI excels at the statistical patterns Shannon described: correlation, compression, prediction. Whether it achieves anything resembling understanding remains an open question, and Shannon’s own caveat is the reason it remains open.

Language models predict text with remarkable fluency. They compress the statistical structure of language with impressive efficiency.

But Shannon explicitly excluded meaning from his theory. The models built on his mathematics inherit that exclusion. They are brilliant at Shannon’s game (predicting the next token), and the question of whether that game produces genuine comprehension is one Shannon himself would have found fascinating.

What’s in a name?

In March 2023, Anthropic launched an AI assistant called Claude. The name immediately prompted a question: is it named after Claude Shannon?

The honest answer: probably, but nobody at Anthropic has said so on the record.

Anthropic’s “Introducing Claude” blog post from March 2023 does not mention Shannon. It doesn’t explain the name at all. No direct quote from Dario Amodei or Daniela Amodei, the company’s co-founders, confirms the Shannon connection in any public interview I can find.

But the circumstantial evidence is strong, and nobody has proposed a competing explanation.

The New Yorker, in a February 2026 piece, described the origin as “company lore,” reporting that the name was “partly a patronym for Claude Shannon... but it is also just a name that sounds friendly, one that, unlike Siri or Alexa, is male and, unlike ChatGPT, does not bring to mind a countertop appliance.”

Wired was vaguer but pointed in the same direction: “Depending on who you ask, the name can also be a reference to Claude Shannon.”

Britannica states it as fact. So does Wikipedia.

No alternative namesake has ever surfaced. The Boston Globe drew the connection explicitly. Nobody has suggested it’s named after Claude Debussy, Claude Monet, or Claude Rains. (Though a case for Claude Rains, the actor who played the Invisible Man, would be entertainingly on-brand for an AI system that has no physical form.)

Whether the name is intentional or not, the intellectual lineage is a matter of mathematics, not branding.

Shannon’s entropy is in Claude’s loss function. His Boolean algebra is in Claude’s hardware. His channel capacity theorem describes the theoretical limits of the networks that carry Claude’s outputs around the world. His Markov chain text generation experiment is the conceptual ancestor of Claude’s token-by-token prediction. His 1951 paper on the entropy of English measures the same quantity that Claude’s training process optimises.

If Anthropic named their AI after Claude Shannon, it was a fitting tribute. If they didn’t, it’s the most fortunate coincidence in the history of technology branding. Either way, Shannon is inside the machine.

Shannon would probably have appreciated the irony. A man who spent his career studying communication now has his first name attached to one of the most sophisticated communication systems ever built, and nobody can quite confirm whether that was the plan.

The last theorem

By the early 1980s, Claude Shannon was showing signs of Alzheimer’s disease. The mind that had held an entire theory of information without writing it down was beginning to lose its grip on the present.

The decline was gradual. Shannon withdrew from public life. He stopped attending conferences (the Brighton appearance in 1985 was a rare exception). He spent his final years in a nursing home in Medford, Massachusetts, cared for by staff and visited daily by Betty, his wife of nearly fifty years.

Gallager, who knew Shannon well, described his final years with spare precision: Shannon remained “good-natured as usual and enjoyed Betty’s daily visits.” The man who had given the world the mathematics of communication could no longer communicate. The cruelty of the disease was total.

Claude Elwood Shannon died on 24 February 2001. He was eighty-four years old.

By the time of his death, the internet had become a global phenomenon. Email, web browsing, digital music, mobile phones, fibre-optic networks, satellite communications: all of it built on his theoretical foundations. But Shannon was largely unaware. The world his work had made was invisible to him at the end.

The honours

The awards had come thick and fast during his working years. The National Medal of Science in 1966, presented by President Lyndon B. Johnson. The IEEE Medal of Honor, also in 1966. The Kyoto Prize in 1985, Japan’s equivalent of the Nobel for fields the Nobel doesn’t cover. In 1973, Shannon became the first recipient of the Claude E. Shannon Award, given by the IEEE Information Theory Society, the discipline’s highest honour and one that carries his own name.

Selected honours and recognition for Claude Shannon

Six statues of Shannon, sculpted by Eugene Daub, stand at various institutions including the University of Michigan and MIT. On what would have been his 100th birthday in 2016, Google honoured him with a Google Doodle, introducing Shannon to the millions of people who use Google daily and had never heard his name.

James Gleick, the science writer and author of The Information, put it as well as anyone: “It’s Shannon whose fingerprints are on every electronic device we own, every computer screen we gaze into, every means of digital communication. He’s one of these people who so transform the world that, after the transformation, the old world is forgotten.”

Rodney Brooks, the roboticist and former director of MIT’s Computer Science and Artificial Intelligence Laboratory, called Shannon “the 20th century engineer who contributed the most to 21st century technologies.”

The quiet centre

Shannon was never famous the way Einstein was famous, or even the way Steve Jobs or Elon Musk would become famous. He never sought public attention. He never launched a startup. He never appeared on magazine covers or cable news. He built the mathematical foundation of the modern world and then went home to juggle.

There’s something appealing about that. In an age of personal branding and founder mythology, Shannon offers a different model: the person who does the most important work of their generation and genuinely doesn’t care if anyone knows their name.

He cared about the work. He cared about the puzzles. He cared about whether a unicycle could be ridden down a hallway while juggling three balls (it can, but it takes practice). He cared about whether roulette could be beaten (it can, but the earpiece falls out). He cared about whether machines could think (they can, sort of, and the question is still open).

He did not care, by all available evidence, about being Claude Shannon, Famous Scientist. He was just Claude Shannon, a man with a toggle switch and a box and a mechanical hand that reaches out, gently, and turns it off.

The hand retreats. The lid closes.

But the bits keep flowing.

1 Shannon’s juggling theorem, (F+D)H = (V+D)N, has been extended by other mathematicians. It applies to “cascade” juggling patterns and doesn’t cover all possible juggling sequences, but it captures the fundamental constraint: what goes up must come down, and your hands had better be free when it does.

2 The differential analyser that Shannon worked on with Vannevar Bush was not a digital computer. It was an analogue machine that represented quantities as continuous physical measurements (shaft rotations, wheel positions). The irony is thick: Shannon’s work on an analogue machine led directly to the theoretical foundations of digital computing.

3 SIGSALY’s one-time pad records were manufactured at Bell Labs and shipped in matched pairs to Washington and London. Each record was used once and then destroyed. The system transmitted voice by digitising it into discrete levels, a process called “quantisation,” which was itself an early application of the digital principles Shannon would formalise in 1948.

4 The Dartmouth Workshop proposal’s full title was “A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence.” The term “artificial intelligence” appears for the first time in this document. McCarthy later said he chose the name to distinguish the field from cybernetics, which Norbert Wiener had already claimed.