The research is in: your AGENTS.md might be hurting you

The first rigorous study of AGENTS.md effectiveness tested four coding agents across hundreds of tasks. The findings are wild.


You spent 45 minutes on it. Maybe longer. You ran /init, watched the LLM churn through your repo, and out came a beautiful AGENTS.md file. Codebase overview, architectural notes, testing conventions, style preferences, the works. You committed it with a satisfying message like “Add agent context file” and moved on, confident your coding agent would now get it.

ETH Zurich would like a word.

The convention everyone just nodded along to

Over 60,000 public GitHub repos now include some form of context file for coding agents. AGENTS.md, CLAUDE.md, copilot-instructions.md, .cursorrules; the naming varies but the premise is the same: give your AI coding assistant a cheat sheet about your project and it’ll do better work.

Most major agent frameworks support it, and many AI coding tools ship a /init command that generates one. The convention spread the way most developer conventions spread: someone influential did it, someone else copied it, and within six months it was table stakes. Nobody stopped to measure whether it actually helped.

Then, in February 2026, a team at ETH Zurich did exactly that.

What ETH Zurich tested

Gloaguen et al. built something called AGENTBENCH: 138 task instances drawn from 12 real repositories that already had developer-written context files, sourced from nearly 5,700 pull requests. They also ran experiments on SWE-bench Lite, the existing standard benchmark.

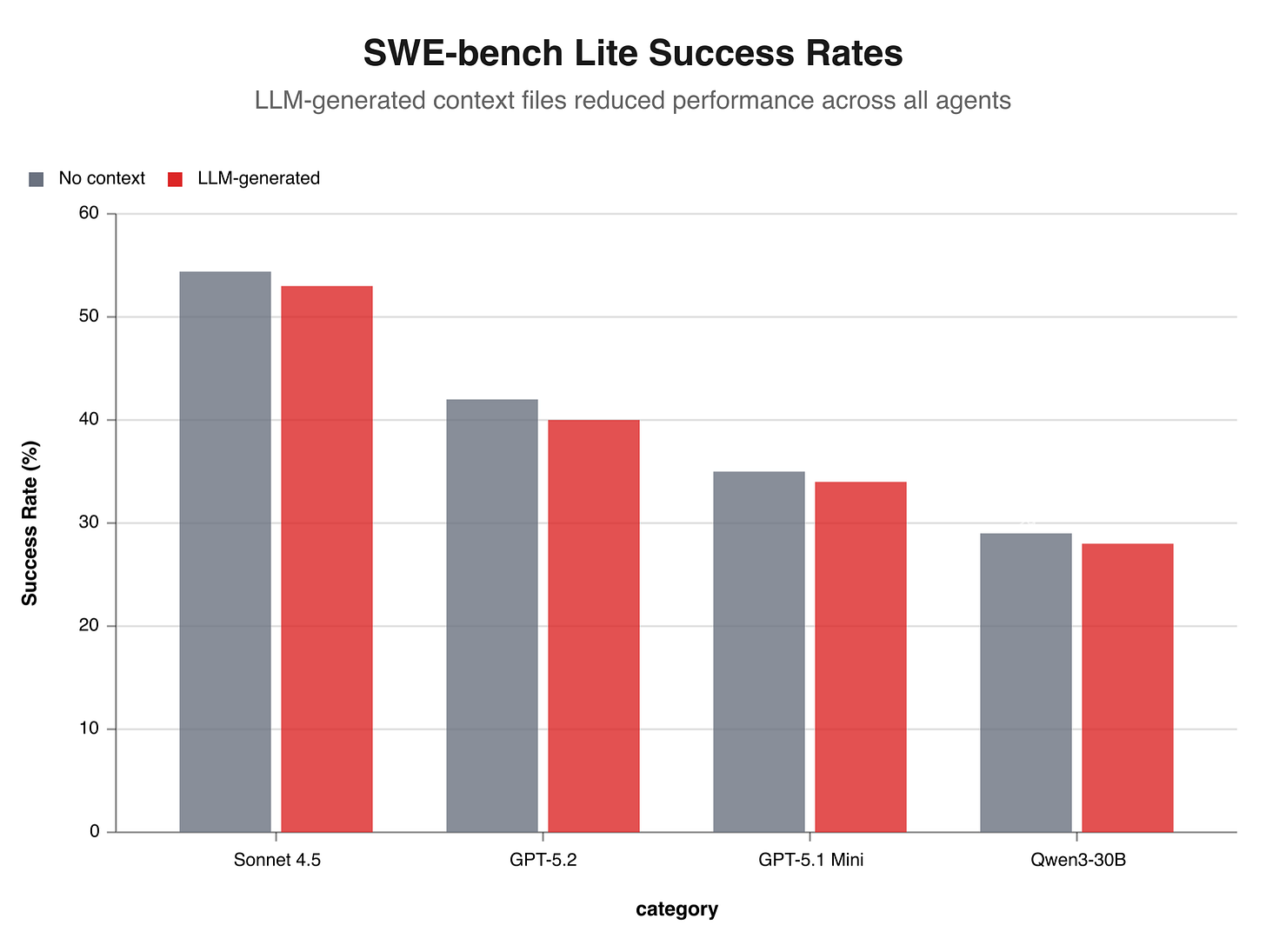

Four agent and model pairings got the treatment, spread across three agent harnesses: Claude Code (with Sonnet 4.5), Codex (once with GPT-5.2 and once with GPT-5.1 Mini), and Qwen Code (with Qwen3-30B). Each ran under three conditions: no context file at all, an LLM-generated context file, and a human-written context file.

The setup was methodical and the question was simple: does giving an agent a context file make it solve more tasks correctly?

The numbers

LLM-generated context files made things worse. Performance dropped in five out of eight experimental settings, with an average decline of around 2% on AGENTBENCH. And the cost? Up by 20 to 23%.

Human-written files did slightly better, averaging a 4% improvement. But “average” hides a lot. Claude Code actually performed worse with human-written context files than with none at all. The improvement was inconsistent across agents and benchmarks; sometimes it helped, sometimes it didn’t, and you couldn’t predict which.

Your auto-generated AGENTS.md file costs 20% more in inference and solves fewer tasks. The one you wrote by hand barely moves the needle.
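To see how those two numbers compound, here is a minimal Python sketch of cost per solved task. The baseline figures ($1.00 per task, 50% solved) are hypothetical and not from the study, and the sketch assumes the quoted 20% and 2% are relative changes, which the summary above doesn't spell out.

```python
def cost_per_solved_task(cost_per_task: float, success_rate: float) -> float:
    """Expected spend for each task the agent actually solves."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_task / success_rate


# Hypothetical baseline, NOT from the study: $1.00 per task, 50% solved.
baseline_cost, baseline_rate = 1.00, 0.50

# Figures quoted in the article, read as relative changes (an assumption):
# about 20% more inference cost and about 2% fewer tasks solved.
generated_cost = baseline_cost * 1.20
generated_rate = baseline_rate * 0.98

baseline = cost_per_solved_task(baseline_cost, baseline_rate)
generated = cost_per_solved_task(generated_cost, generated_rate)
increase = generated / baseline - 1

print(f"no context file:   ${baseline:.2f} per solved task")
print(f"generated file:    ${generated:.2f} per solved task")
print(f"relative increase: {increase:.1%}")
```

Under those assumptions the relative increase works out to 1.20 / 0.98 - 1, a little over 22%, whatever baseline you plug in. The small drop in solved tasks makes the cost increase slightly worse than the headline 20%.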

And context files didn’t help agents find the right files any faster. The researchers tracked file discovery rates across all conditions, and the curves were essentially identical. Agents with a context file and agents without one converged on the same files at roughly the same speed.

Then there’s the reasoning cost. GPT-5.2 spent 22% more reasoning tokens when given a context file. GPT-5.1 Mini spent 14% more. The agents weren’t just reading the file and moving on; they were thinking harder about it, burning compute on information they could largely have worked out from the repo itself.

And every single context file generated by Sonnet 4.5 was flagged for containing a codebase overview. One hundred percent. The model couldn’t resist explaining the repo’s architecture back to itself.

Wait: another study found the opposite

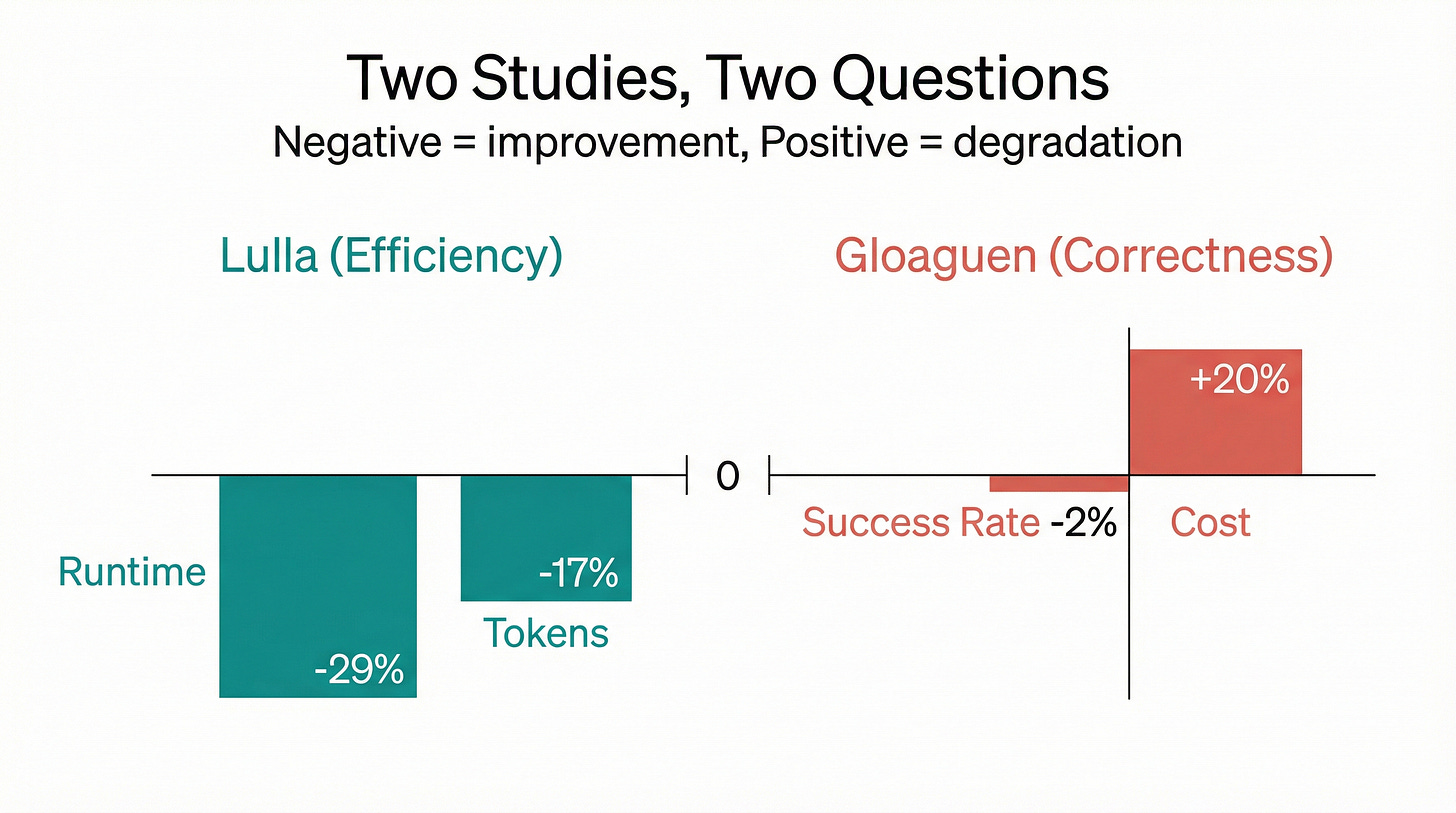

Before you dismiss context files entirely, there’s a wrinkle. Lulla et al., published a month earlier, tested human-authored AGENTS.md files on 124 real GitHub pull requests and found roughly a 29% reduction in median runtime and 17% fewer output tokens.

Sounds like a flat contradiction, but the two teams measured different things. Lulla et al. measured efficiency: how quickly agents navigate a codebase and how many tokens they burn doing it. Gloaguen et al. measured correctness: whether agents actually solve the task.

Taken together: context files can help agents finish sooner. They don’t reliably help agents arrive at the right answer.

You give someone driving directions that skip the scenic route. They arrive sooner. But if they’re going to the wrong address, getting there faster doesn’t help. Context files seem to work like a GPS shortcut: they reduce wandering without improving the destination.
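The distinction is easier to see when both metrics are computed from the same runs. The following Python sketch is illustrative only: the `Run` record, its field names, and every number in it are made up for the example, and neither paper's evaluation harness is being reproduced here.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Run:
    task_id: str
    solved: bool        # did the final patch pass the task's tests?
    runtime_s: float    # wall-clock time for the agent run
    output_tokens: int  # tokens the agent generated


def correctness(runs):
    """Share of tasks solved: the kind of metric Gloaguen et al. report."""
    return sum(r.solved for r in runs) / len(runs)


def efficiency(runs):
    """Median runtime and output tokens: the kind of metric Lulla et al. report."""
    return {
        "median_runtime_s": median(r.runtime_s for r in runs),
        "median_output_tokens": median(r.output_tokens for r in runs),
    }


# Hypothetical runs, invented for illustration only.
without_file = [
    Run("t1", True, 300, 9000),
    Run("t2", False, 420, 12000),
    Run("t3", True, 360, 10000),
]
with_file = [
    Run("t1", True, 210, 7500),
    Run("t2", False, 300, 10000),
    Run("t3", True, 250, 8200),
]

for label, runs in [("without file", without_file), ("with file", with_file)]:
    print(label, round(correctness(runs), 2), efficiency(runs))
```

In this toy data the agent with a context file is faster and cheaper on every task yet solves exactly the same ones, which is how both studies can be right at once.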

Why context files backfire

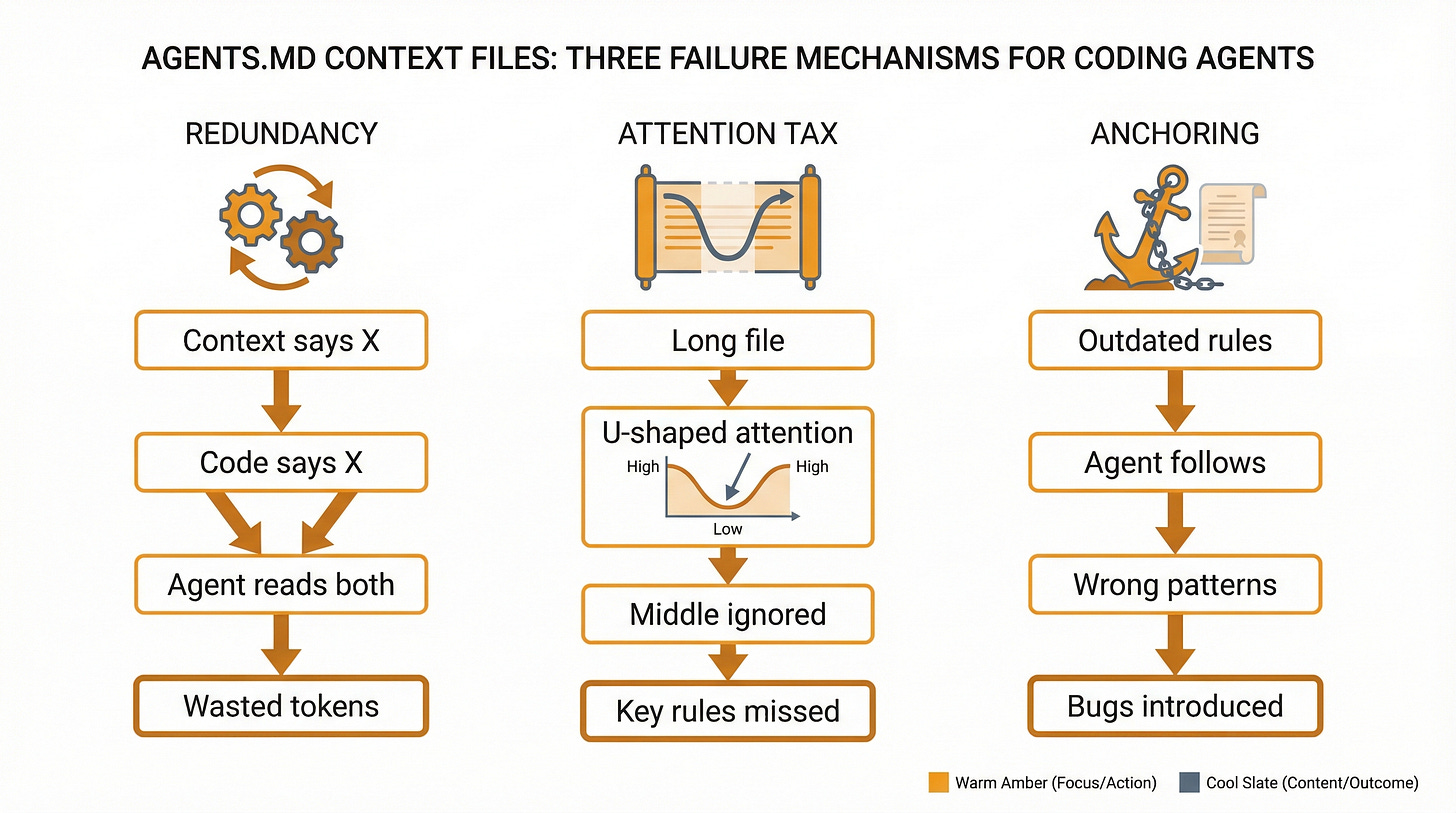

The redundancy problem. When LLMs generate context files, they mostly restate information the agent would discover anyway by reading the code. It’s duplication, not augmentation. The ETH Zurich team ran a clever experiment to test this: they stripped all documentation from the repos and left only the context files. In that setting, LLM-generated files actually improved performance by 2.7%. The files aren’t useless in a vacuum. They’re redundant when the information already exists elsewhere in the repo.

The attention tax. Liu et al. demonstrated that LLMs use long contexts in a U-shaped pattern: they retrieve information placed at the beginning or end of the context most reliably, and information in the middle least reliably. In a long AGENTS.md file stuffed with guidelines, the middle is where instructions are most likely to be missed. Your carefully worded note about not refactoring the auth module? Paragraph six of twelve. The agent may never have acted on it.

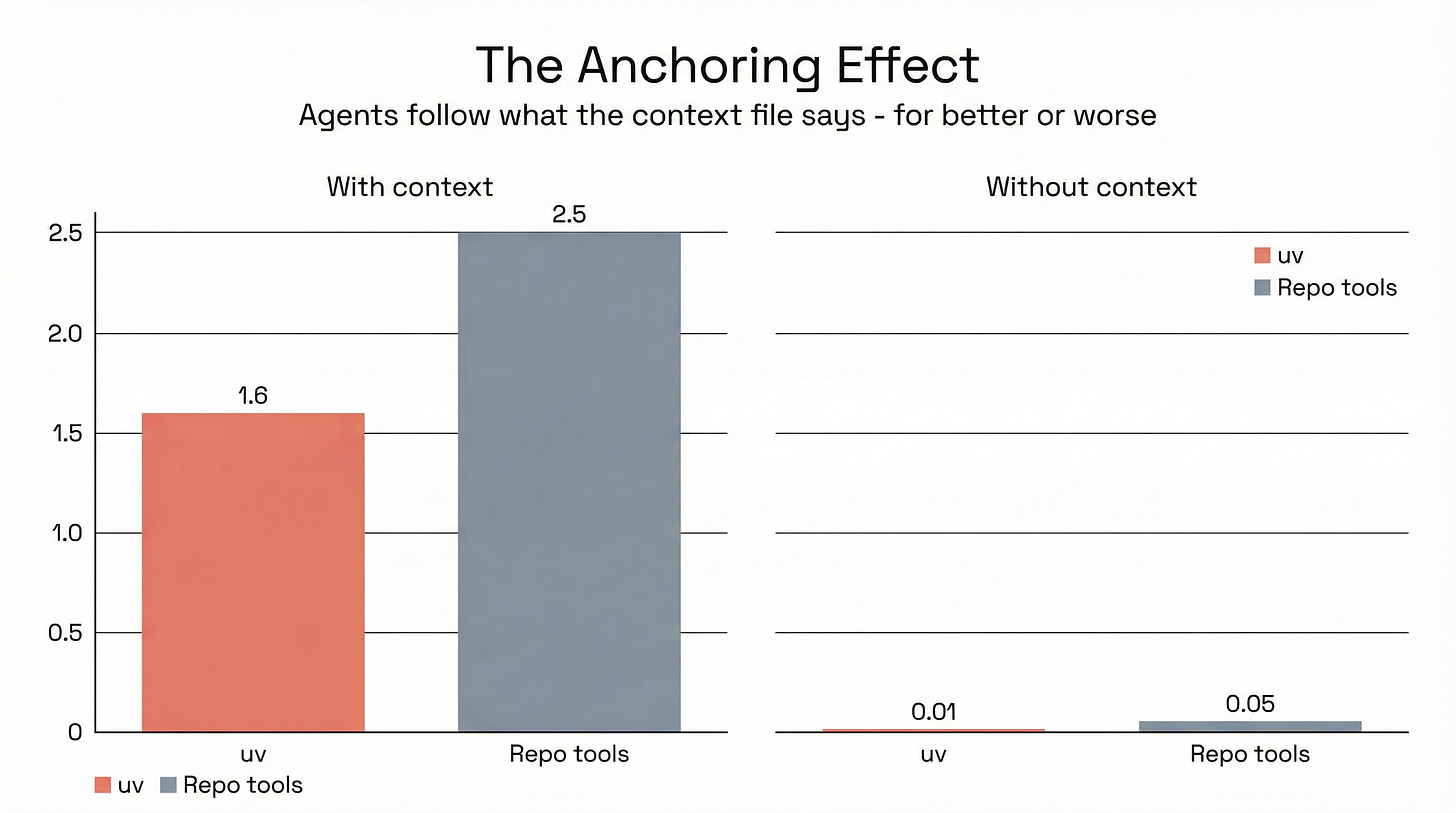

The anchoring trap. Agents follow instructions faithfully, sometimes too faithfully. The ETH Zurich data shows that when a context file mentions uv, agents use it 1.6 times per task instance versus less than 0.01 times when it’s not mentioned. Use of repo-specific tools jumps from 0.05 to 2.5 times per instance. That sounds great until you realise it also means agents anchor on whatever the context file says, even when the instructions are outdated or wrong. Mention a deprecated pattern and the agent will cheerfully use it.

Less is more (and the research backs it)

Addy Osmani from Google synthesised the findings into a practical filter that’s worth memorising:

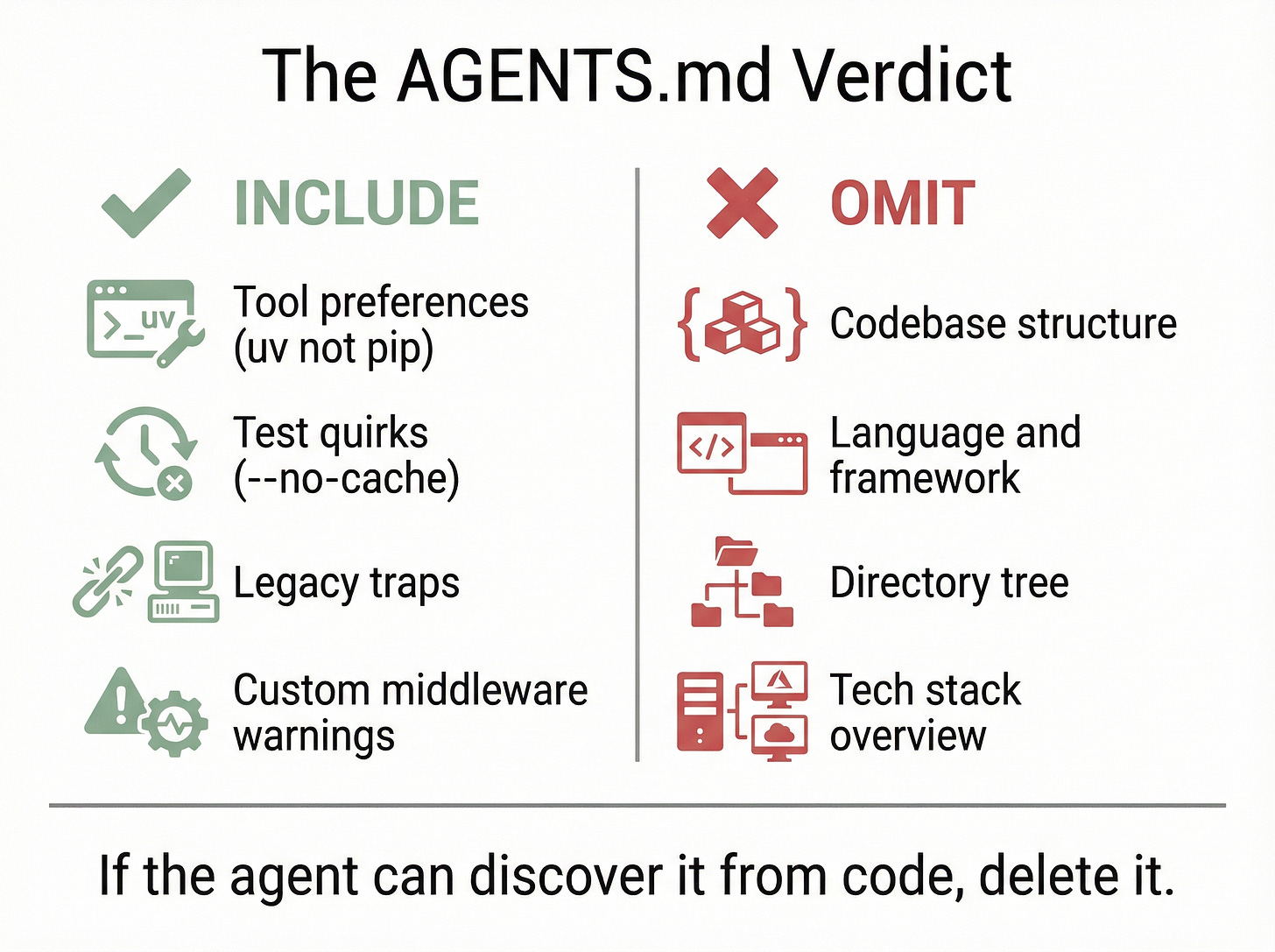

“Can the agent discover this on its own by reading your code? If yes, delete it.”

Most of what ends up in auto-generated context files fails this test. Codebase structure? The agent can read the directory tree. Language and framework? It can check package.json or pyproject.toml. Test commands? It can read the Makefile. You’re paying 20% more in inference to tell the agent things it already knows.

What agents can’t discover from code are the landmines: the things that look normal but will blow up. For example: “Use uv instead of pip.” “Run tests with --no-cache.” “The auth module uses custom middleware; don’t refactor it.” “The legacy/ directory is deprecated but three production modules still import from it.”

Those sorts of things.
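A rough first pass at that filter can be automated. The Python sketch below is a crude heuristic of my own, not Osmani’s method: it flags a context-file line for review when every backticked snippet on it already appears verbatim somewhere in the repo. The function name `likely_discoverable` and the sample files are hypothetical.

```python
import re

# Matches inline code spans such as `pytest tests/`.
BACKTICKED = re.compile(r"`([^`]+)`")


def likely_discoverable(context_lines, repo_files):
    """Return context lines whose backticked snippets all appear verbatim in the repo.

    repo_files maps a file name to its text content. Lines without any
    backticked snippet are never flagged, because this heuristic has
    nothing to compare them against.
    """
    corpus = "\n".join(repo_files.values())
    flagged = []
    for line in context_lines:
        snippets = BACKTICKED.findall(line)
        if snippets and all(snippet in corpus for snippet in snippets):
            flagged.append(line)
    return flagged


# Hypothetical repo contents and context file, for illustration only.
repo_files = {
    "Makefile": "test:\n\tpytest tests/\n",
    "pyproject.toml": '[project]\nname = "demo"\n',
}
context_lines = [
    "Run tests with `pytest tests/`.",
    "Run tests with `--no-cache`.",
    "The auth module uses custom middleware; don't refactor it.",
]

for line in likely_discoverable(context_lines, repo_files):
    print("candidate for deletion:", line)
```

Only the first line is flagged here, since the Makefile already contains that command. Treat the output as a list of candidates for a human to review, not as an automatic delete list: a landmine such as “Use uv instead of pip” could be flagged simply because the word appears in a lockfile.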

As Osmani puts it:

“Coding agents aren’t new hires. They can grep the entire codebase before you finish typing your prompt. What they need isn’t a map. They need to know where the landmines are.”

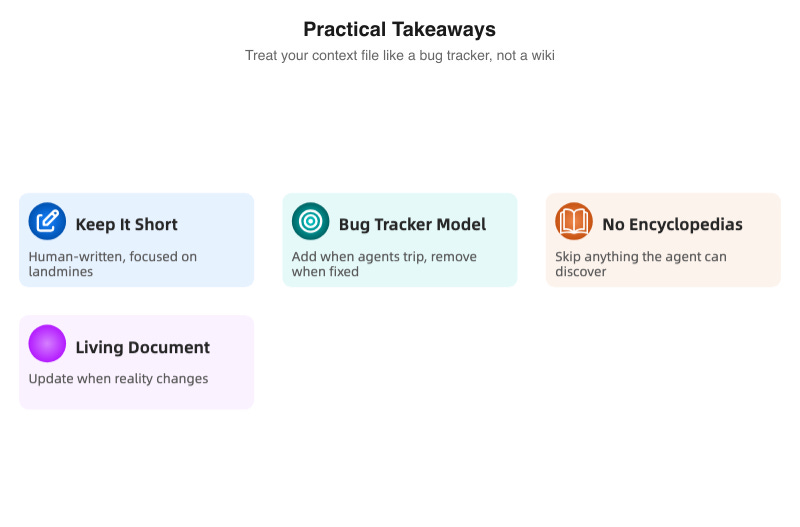

Start with an almost empty file. Run the agent on real tasks. When it trips on something non-obvious, add one line. When you fix the root cause of a friction point, delete the line. The context file becomes a living list of active hazards, not a static encyclopedia.
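That workflow is small enough to model directly. Here is a minimal Python sketch, assuming each hazard is a single line stored under a short key; the `HazardList` class and its method names are hypothetical, not part of any tool.

```python
from dataclasses import dataclass, field


@dataclass
class HazardList:
    """A context file modelled as a list of one-line active hazards."""

    hazards: dict[str, str] = field(default_factory=dict)

    def add(self, key: str, line: str) -> None:
        """Record a hazard after the agent trips on something non-obvious."""
        self.hazards[key] = line

    def resolve(self, key: str) -> None:
        """Drop a hazard once its root cause has been fixed."""
        self.hazards.pop(key, None)

    def render(self) -> str:
        """Produce the text that would be written to the context file."""
        return "\n".join(f"- {line}" for line in self.hazards.values())


notes = HazardList()  # start almost empty
notes.add("pkg-manager", "Use uv instead of pip.")
notes.add(
    "legacy-imports",
    "The legacy/ directory is deprecated but three production modules still import from it.",
)
notes.resolve("legacy-imports")  # the imports were migrated, so the line goes
print(notes.render())
```

The point of keying each line is that deletion becomes as routine as addition: when the fix lands, the matching line comes out in the same change.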

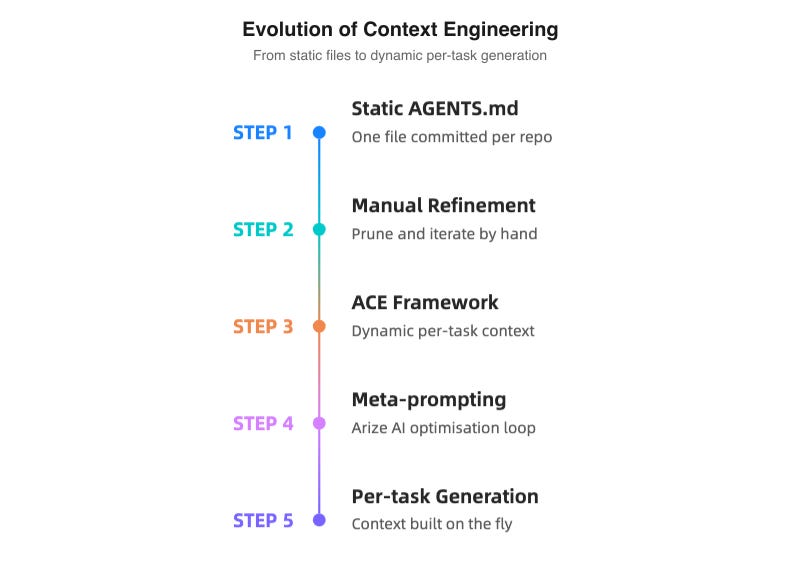

The future is probably dynamic

Static files might be the wrong abstraction altogether. The ACE framework (Agentic Context Engineering, published at ICLR 2026) uses a generator/reflector/curator pipeline to build task-specific context on the fly. Instead of one file for all situations, it generates relevant context per task and outperformed static approaches by 12.3%. Early days, but promising.

Arize AI’s optimisation loop takes a different angle: run the agent on a set of training tasks, evaluate the results, generate LLM feedback on what went wrong, refine the instructions, repeat. Their results showed roughly 5% accuracy gains on cross-repo tasks and 11% on in-repo tasks. Not earth-shattering, but the underlying finding is worth sitting with: what helps a human understand a codebase and what helps an LLM navigate it are often different things.
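The shape of that loop can be sketched in a few lines of Python. This is a generic outline built from the description above, not Arize AI’s implementation: `refine_instructions`, its parameters, and the stub functions below are all hypothetical stand-ins for a real agent, a real evaluator, and a real LLM feedback step.

```python
from typing import Callable, Sequence


def refine_instructions(
    instructions: str,
    tasks: Sequence[str],
    run_agent: Callable[[str, str], str],
    evaluate: Callable[[str, str], bool],
    critique: Callable[[str, list[str]], str],
    rounds: int = 3,
) -> tuple[str, float]:
    """Run, evaluate, critique, refine; return the best instructions and their score."""
    best, best_score = instructions, -1.0
    current = instructions
    for _ in range(rounds):
        # Run the agent on every training task with the current instructions.
        results = {task: run_agent(current, task) for task in tasks}
        failures = [task for task, out in results.items() if not evaluate(task, out)]
        score = 1 - len(failures) / len(tasks)
        if score > best_score:
            best, best_score = current, score
        if not failures:
            break
        # Ask for revised instructions based on what went wrong.
        current = critique(current, failures)
    return best, best_score


# Stubs standing in for real components, for illustration only.
def fake_agent(instructions: str, task: str) -> str:
    return "ok" if "uv" in instructions else "fail"


def fake_evaluate(task: str, output: str) -> bool:
    return output == "ok"


def fake_critique(instructions: str, failures: list[str]) -> str:
    return instructions + "\nUse uv instead of pip."


best, score = refine_instructions("", ["t1", "t2"], fake_agent, fake_evaluate, fake_critique)
print(score, repr(best))
```

A real version would also score the refined instructions on held-out tasks, which this sketch omits, so that the loop isn’t just memorising its training set.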

Osmani proposes a layered architecture: a protocol file that routes to focused persona or skill files loaded per-task, with a maintenance subagent that keeps everything current. Think of it as moving from a single README to a small knowledge base with a librarian.

None of this exists in a turnkey form today. But all of it points the same way: context should be generated per-task, not committed per-repo.

Treat it like a bug tracker, not a wiki

The research doesn’t say context files are worthless. It says the default approach (run /init, commit the output, never touch it again) tends to cost more while solving fewer tasks.

The useful version of AGENTS.md is short. Human-written. Focused on the things code can’t tell you. Updated when reality changes and pruned when problems get fixed.

If you wouldn’t put it in a production incident’s “things to watch out for” notes, it probably doesn’t belong in your context file either.
